Last Thursday, UltraDNS customers felt a bit of déjà vu as the DNS service suffered another outage, the second since a massive DDoS attack on April 30, 2014. Neustar, the company behind UltraDNS, reported different root causes over time, first pinning the incident on a DDoS attack and later describing it as a server failure. In this post we’ll take a closer look at what happened during the most recent outage on October 15th, 2015. We’ll also investigate what might have caused it, based on the data from the many services affected by the DNS outage.

Feel free to follow along with the interactive data set at https://bogxsmcxc.share.thousandeyes.com and explore the various data layers.

The October 15th Outage

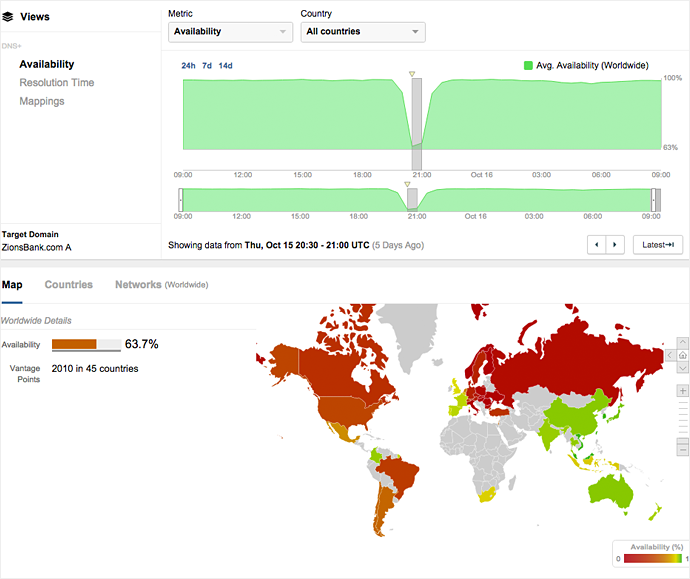

On October 15th from 20:25 to 22:45 UTC (1:25pm-3:45pm PDT), many of the UltraDNS name servers were unreachable or slow to reach. Because UltraDNS is a major DNS service provider, the resulting DNS outage affected dozens of prominent websites and applications, including Netflix, Expedia and major banking sites. Users in the Central and Eastern U.S. (from Denver to the east) and Europe were affected.

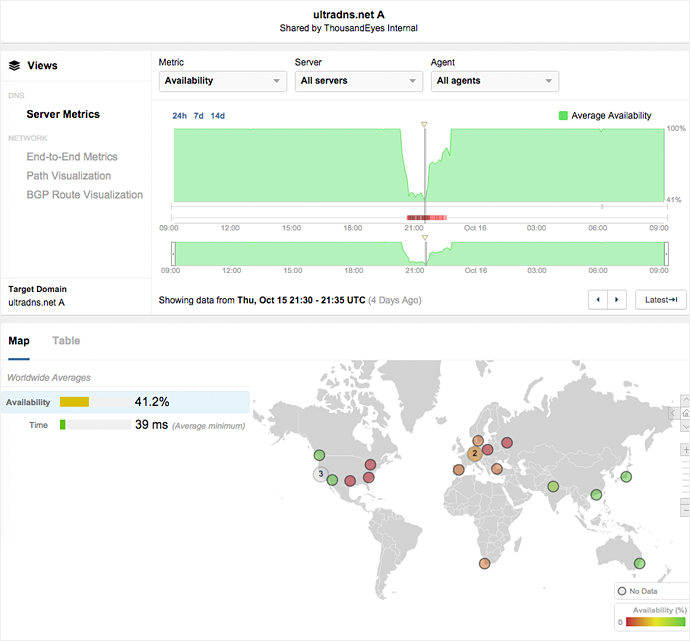

At the height of the two-hour outage, DNS server availability dropped below 50%, meaning that many users could not resolve the IP addresses for domains using UltraDNS. As Figure 1 shows, UltraDNS was generally unavailable for the first 90 minutes in both the U.S. and Europe, leading to the 40% availability figure. The unavailable DNS servers returned SERVFAIL during the course of the outage, suggesting they were overloaded or unable to process requests. From 21:40 UTC, and for the following 60 minutes, availability recovered in Europe, while the DNS service in the Eastern U.S. remained unavailable.

[Figure caption, beginning missing: … with impacts in the Eastern U.S. and Europe.]

At the same time, DNS resolution time jumped from 25ms to more than 40ms and packet loss spiked to levels around 10%. Figure 2 shows how increased packet loss broadly matches the same two-hour period and geographic footprint as the drop in DNS availability. Correlation among packet loss, latency and service availability suggests that network layer issues were at play.
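To make the availability and SERVFAIL figures above more concrete, here is a minimal sketch of how a probe could query a name server directly and classify the answer. It is not how ThousandEyes implements its tests; the function names (`build_query`, `response_rcode`, `probe`, `availability`) are hypothetical, and only the DNS header layout and RCODE values come from RFC 1035.

```python
import socket
import struct

# RCODE values defined in RFC 1035
RCODE_NOERROR = 0
RCODE_SERVFAIL = 2


def build_query(name, qtype=1, query_id=0x1234):
    """Encode a minimal DNS query: one question, class IN, default type A."""
    # Header: ID, flags (all zero: standard query), QDCOUNT=1, other counts 0
    header = struct.pack("!HHHHHH", query_id, 0x0000, 1, 0, 0, 0)
    labels = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in name.rstrip(".").split(".")
    )
    question = labels + b"\x00" + struct.pack("!HH", qtype, 1)
    return header + question


def response_rcode(packet):
    """Return the RCODE (low 4 bits of the flags field) of a DNS response."""
    if len(packet) < 12:
        raise ValueError("truncated DNS header")
    (flags,) = struct.unpack("!H", packet[2:4])
    return flags & 0x000F


def probe(server_ip, name, timeout=2.0):
    """Send one UDP query; return the RCODE, or None on timeout or error."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        try:
            sock.sendto(build_query(name), (server_ip, 53))
            data, _ = sock.recvfrom(4096)
            return response_rcode(data)
        except (OSError, ValueError):
            return None


def availability(rcodes):
    """Percentage of probes answered with NOERROR; None counts as a failure."""
    if not rcodes:
        raise ValueError("no probes")
    ok = sum(1 for rc in rcodes if rc == RCODE_NOERROR)
    return 100.0 * ok / len(rcodes)
```

Under this definition, a round of five probes in which two succeed, two return SERVFAIL and one times out yields 40% availability. Timing each `probe` call would give a rough resolution time for the same server.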

Was It a DDoS or a Server Failure?

There have so far been conflicting reports as to the cause of the outage.

During the outage, at 1:44pm PDT, Neustar’s support department sent emails to customers stating, "the Neustar UltraDNS service is currently experiencing DDoS traffic in the U.S. East Region. The Security Operations Team is currently working on mitigating attack traffic and further updates will be provided as soon as possible." An hour later, while the outage was still in progress, the support team stated that there was a “major spike in traffic across several regions” and that “the Security Operations Team is conducting routing changes and working to reroute traffic.”

However, a Neustar spokesperson later placed the blame on “an internal issue in a server on the East Coast and…not the result of an attack by hackers.” And the UltraDNS Twitter account stated that “the cause of the issue was a config mgmt error. We r putting safeguards in place 2 ensure it doesn't happen again.”

So what actually caused the outage?

Our Observations

During the outage, we were monitoring the UltraDNS service as well as many of UltraDNS’ customers. Some of what we observed matched Neustar’s root cause analysis, though much of it did not.

Impacts in Europe and Eastern U.S.

We saw widespread outages in the Eastern and Central U.S. (from Denver to the east) for the entirety of the outage. We also observed major issues in Central and Eastern Europe for roughly the first 75 minutes (20:25-21:40 UTC).

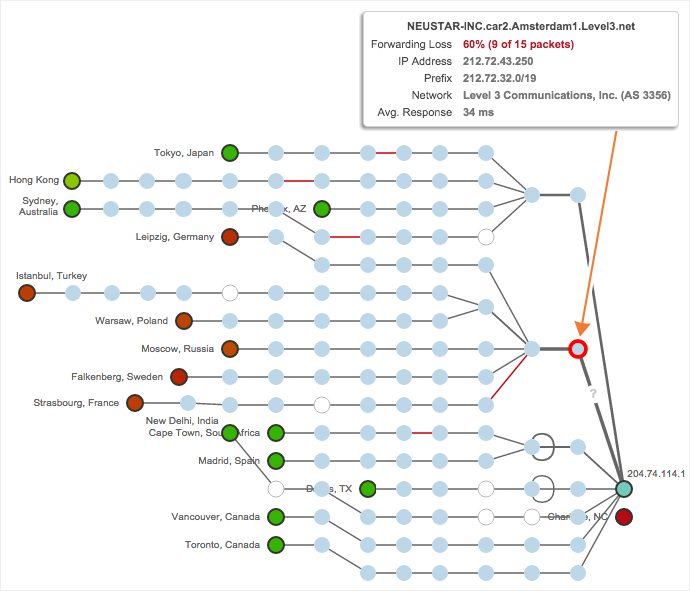

Neustar DNS is an Anycast service, meaning that the same destination IP address is served by many servers in different locations. Neustar hosts its DNS resolution in 30 data centers around the world. In addition, each UltraDNS-hosted domain appears to have six name servers that respond to DNS queries. Neustar allows for per-state, per-country and per-region DNS resolution, which is likely why we saw such discrete outage areas. Figure 3 shows some of the affected data centers. Note that because this is an Anycast service, the Path Visualization shows a single destination IP address on the right; this actually represents many data centers, which can be identified by the preceding hops.

[Figure 3 caption, beginning missing: … connect to the Amsterdam Neustar data center via Level 3.]

We observed issues in a number of these data centers, most frequently in Amsterdam, Ashburn, Atlanta, Charlotte, Chicago, Dallas, Denver, Frankfurt, Miami, Phoenix, San Jose, Seattle and Toronto. Unaffected data centers included Los Angeles, Madrid, Mumbai, Paris, Singapore, Sydney and Tokyo.

This widespread outage across continents does not square with the description of a server error offered by the Neustar spokesperson.
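Since every Anycast data center answers on the same IP address, the site behind a given measurement has to be inferred from the hops that precede the destination. The sketch below illustrates that idea on made-up data: it groups traces by the last hop before the Anycast address and averages packet loss per group. The hop names, the `192.0.2.53` address and the loss values are all hypothetical placeholders, not measurements from this outage.

```python
from collections import defaultdict
from statistics import mean


def site_key(hops, destination):
    """Return the hop just before the Anycast destination.

    If the trace never reached the destination, fall back to the
    last hop that was seen.
    """
    if destination in hops:
        idx = hops.index(destination)
        return hops[idx - 1] if idx > 0 else destination
    return hops[-1] if hops else "unknown"


def loss_by_site(traces, destination):
    """Average packet loss (percent) per inferred Anycast site."""
    buckets = defaultdict(list)
    for hops, loss_pct in traces:
        buckets[site_key(hops, destination)].append(loss_pct)
    return {site: mean(values) for site, values in buckets.items()}


# Hypothetical traces: (list of hops, packet loss in percent)
ANYCAST_IP = "192.0.2.53"
sample_traces = [
    (["agent-a", "isp-x.ams"], 100.0),             # never reached the target
    (["agent-b", "isp-x.ams", ANYCAST_IP], 80.0),
    (["agent-c", "isp-y.par", ANYCAST_IP], 0.0),
]
per_site = loss_by_site(sample_traces, ANYCAST_IP)
```

In this toy data set, the traces entering through `isp-x.ams` show heavy loss while the one through `isp-y.par` is clean, which is the kind of per-site split that lets affected and unaffected data centers be told apart.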

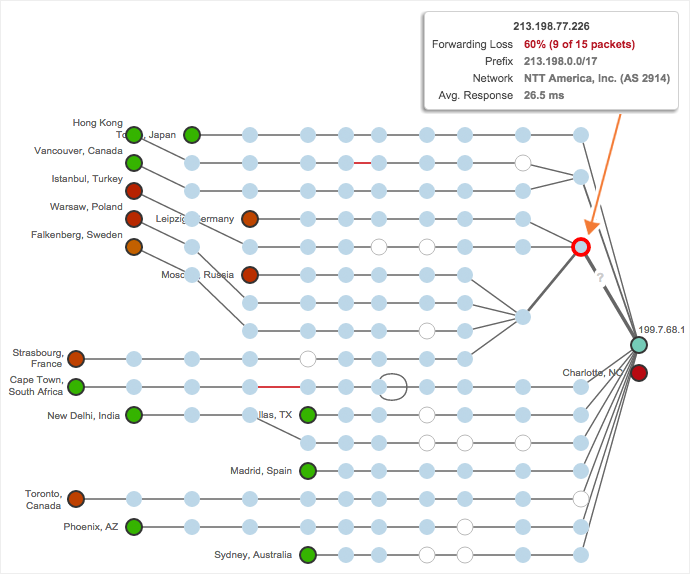

Impacts in Upstream Links with Level 3 and NTT

Neustar has three primary ISPs — Level 3, NTT America and Telia — that provide connectivity to their Autonomous System (AS 12008). We only observed issues in the Level 3 and NTT America networks. In particular, Level 3 in Denver and Amsterdam, and NTT America in Frankfurt had the most persistent loss. Figure 4 shows an outage within the NTT America Frankfurt POP.

[Figure 4 caption, beginning missing: … this time with NTT America as the upstream ISP.]

Our Take on the Outage: Configuration Error Most Likely

Overall, the data is not consistent with a DDoS attack. As we outlined above, the geographic specificity of the outage in Europe and the Eastern U.S. would be difficult for an attacker to pull off. Because an Anycast service generally steers each query to a nearby data center, an attacker would need to originate traffic only from those regions to produce this pattern.

The data is also not consistent with a server or device failure in the Eastern U.S., as was reported in the statement to the New York Times. With repeated outages in major European POPs, it’s hard to imagine how an Anycast service would have been so severely impacted from an isolated device failure.

More likely, given that it was a partial outage that affected UltraDNS data centers in specific regions and a selection of upstream ISPs, this was a network or application configuration error. An error could have caused an application (DNS resolution) problem that only affected specific UltraDNS data centers. Given the packet loss rates and patterns that we observed, a logical conclusion is that congestion on the network was a symptom, rather than a cause, of the outage, as DNS resolvers continued to retry queries to the UltraDNS service.

Collateral Damage

The UltraDNS outage impacted a number of major services. As was widely reported, Netflix suffered an outage for approximately 90 minutes. In addition, Ameritrade, eTrade, Expedia, Pornhub, Uber and Zions Bank had outages or lower availability caused by UltraDNS.

You can see the data with these interactive data sets:

- Netflix: https://fvbmuc.share.thousandeyes.com

- Expedia: https://otnzhceil.share.thousandeyes.com

- Zions Bank: https://lpvoekko.share.thousandeyes.com

Figure 5 shows DNS availability by country for Zions Bank, revealing large-scale outages in the Americas and Central and Eastern Europe that match the affected UltraDNS data center locations.

[Figure 5 caption, beginning missing: … in the Americas and Central and Eastern Europe.]

Coping with DNS Outages

How can you better respond to DNS outages? First off, make sure you monitor your key services and applications. If you use ThousandEyes, the HTTP layer will show DNS errors if name resolution fails. You’ll also want to monitor key domains and their associated authoritative servers, whether they are hosted internally or by a third party like UltraDNS. ThousandEyes DNS Server tests will look up your domain’s name servers and set up network tests to each one.

You can also alert on DNS availability, resolution time and record mappings (the IP addresses actually returned) to ensure that everything is up and running. Generally, DNS availability should always be over 90% (occasionally one agent can have a failure) and resolution time should be 10-30ms from most locations for hosted DNS services.
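As a rough illustration of those three alert conditions, here is a small sketch that checks one measurement against the rule-of-thumb thresholds above. It is not a ThousandEyes API: the `DnsSample` type, the `check` function and the sample values are hypothetical, and the defaults simply encode the 90% and 30ms guidance from this post.

```python
from dataclasses import dataclass


@dataclass
class DnsSample:
    """One aggregated measurement for a name server (hypothetical shape)."""
    server: str
    availability_pct: float
    resolution_ms: float
    resolved_ips: frozenset


def check(sample, expected_ips, min_availability=90.0, max_resolution_ms=30.0):
    """Return a list of alert messages; an empty list means healthy."""
    alerts = []
    # Availability should be over the threshold, so exactly 90% also alerts
    if sample.availability_pct <= min_availability:
        alerts.append(
            f"{sample.server}: availability {sample.availability_pct:.0f}% "
            f"is not above {min_availability:.0f}%"
        )
    if sample.resolution_ms > max_resolution_ms:
        alerts.append(
            f"{sample.server}: resolution time {sample.resolution_ms:.0f}ms "
            f"exceeds {max_resolution_ms:.0f}ms"
        )
    # Record mapping: the returned addresses should match what you expect
    if sample.resolved_ips != expected_ips:
        alerts.append(f"{sample.server}: unexpected record mapping")
    return alerts


# Hypothetical values loosely shaped like the outage described above
expected = frozenset({"192.0.2.10"})
degraded = DnsSample("ns1.example.net", 40.0, 42.0, expected)
healthy = DnsSample("ns2.example.net", 100.0, 25.0, expected)
degraded_alerts = check(degraded, expected)
healthy_alerts = check(healthy, expected)
```

Here the degraded sample trips the availability and resolution time checks, while the healthy one produces no alerts. The thresholds are parameters so they can be tuned per location.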

In sum, monitoring with ThousandEyes gave us far more insight than Neustar’s vague, contradictory root cause explanations. After these outages, it’s painfully obvious that monitoring key services and applications like DNS is essential. If you’re interested in using tools like DNS Server tests and Path Visualization to keep an eye on your network, sign up for a free trial of ThousandEyes.