The Internet is a global system of interconnected autonomous networks, notable for its decentralization. By design, no single organization “owns” the Internet or controls who can connect to it. Instead, thousands of different organizations operate their own networks and negotiate interconnection agreements. These agreements, often referred to as “peering,” are put into practice through the Border Gateway Protocol (BGP), a key component of Internet routing.

Because each network on the Internet is operated autonomously, operators have limited control and depend on other providers to forward and return traffic in a certain way. When BGP issues arise, inter-network traffic can be affected, with effects ranging from increased packet loss and latency to complete loss of connectivity. Consequently, providers and enterprises may struggle to fully control their end users’ experience.

BGP is a crucial protocol for network operators to understand for troubleshooting purposes. Using BGP route visualizations from real events, we'll illustrate four scenarios in which BGP may be a factor to consider while troubleshooting:

Peering Changes

One common scenario where BGP comes into play is when a network operator changes peering with an Internet Service Provider (ISP). Peering can change for various reasons, including commercial peering relationships, equipment failures, or maintenance. During and after a peering change, it is vital to confirm the reachability of your service from networks around the world. ThousandEyes presents reachability and route change views, as well as proactive alerts to help troubleshoot possible issues.

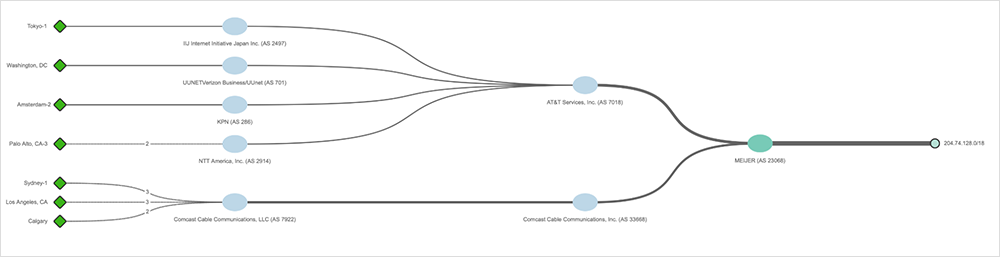

Figure 1 shows prefix 204.74.128.0/18 being advertised by AS 23068. As visible, the prefix is advertised through AT&T (AS 7018) and Comcast (AS 33668) as primary transit providers, through which it reaches other networks, ultimately reaching BGP monitors such as Tokyo-1, Washington, DC, and others.

Figure 2 shows a peering change resulting from a traffic engineering change by AS 23068. Consequently, the prefix is being withdrawn from AT&T (AS 7018), as indicated by the red striped line. Simultaneously, we can see it being propagated by Comcast (AS 7922), ultimately ensuring prefix reachability.

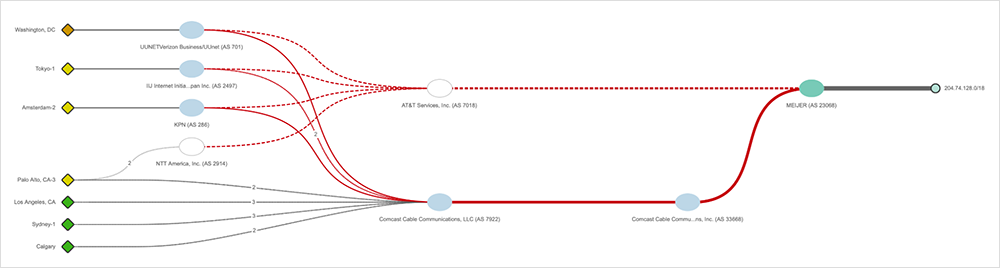

ThousandEyes enables network operators to understand exactly what happened and when. As shown in Figure 3, operators can view the details of the path changes by selecting one of the BGP monitors.

In this example, the Tokyo-1 BGP monitor first observed the initial path, in which AS 23068 originated the prefix. The prefix was advertised to AT&T (AS 7018), through which it propagated to AS 2497, where the BGP monitor is hosted. At 23:00 UTC, the Tokyo-1 BGP monitor observed a change in the path. The prefix, still originated by AS 23068, was now advertised to Comcast (AS 33668). From there, it was propagated to a different Comcast AS (AS 7922), then to TATA (AS 6453), and ultimately reached AS 2497, where the BGP monitor is hosted.

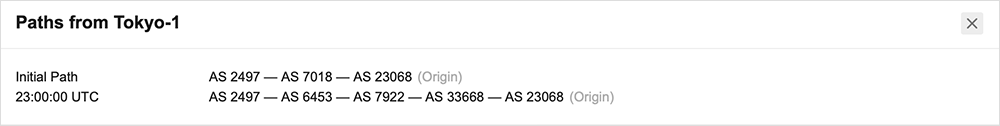

After peering changes, BGP Route Visualization indicates that prefix reachability is solely achieved through Comcast, as visible in Figure 4.

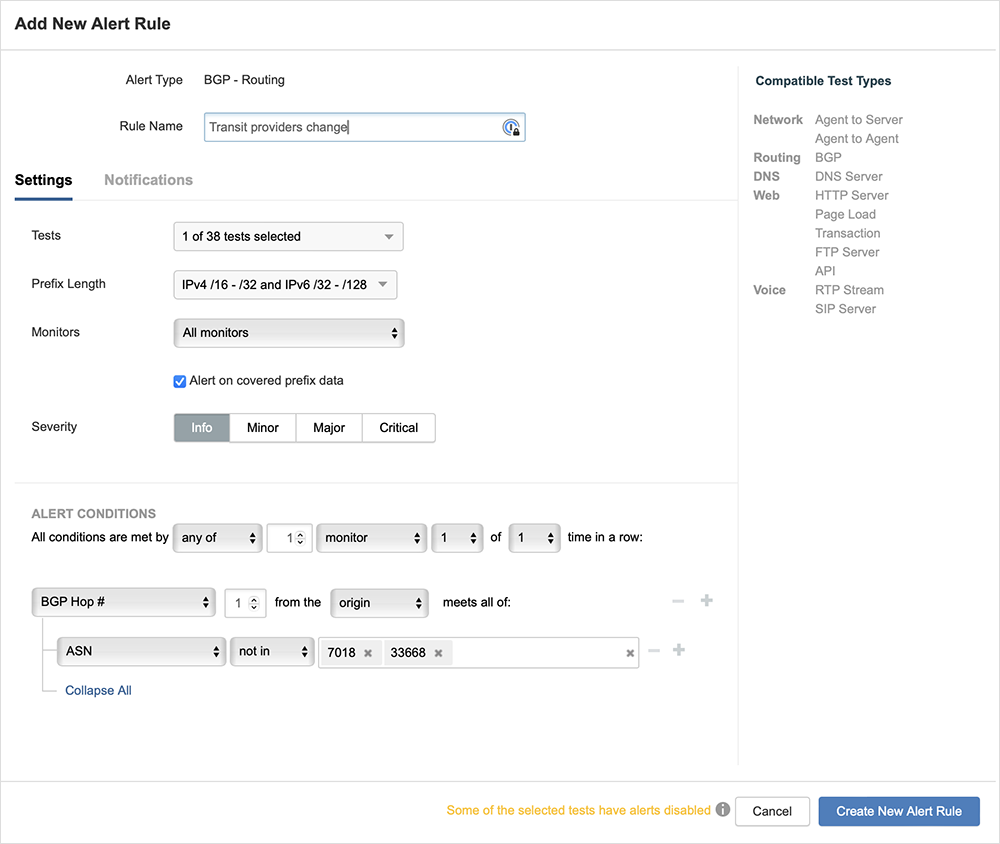

In addition to offering profound insights into events like the one mentioned, ThousandEyes provides comprehensive capabilities to alert users about peering changes, as illustrated in the example above. Creating an alert rule for the scenario described is as straightforward as following the steps outlined in Figure 5 below.

As depicted in Figure 5, an alert rule was configured to notify if any BGP monitor detects a first hop from the origin (usually a transit provider or private peer) whose Autonomous System Number (ASN) is different from the configured values. In our example, if the BGP monitors observe a change where neither AT&T (AS 7018) nor Comcast (AS 33668) is the Autonomous System on the first hop from the origin, the alert rule would be triggered immediately. Alerts can be sent via email, custom webhooks, or any integrations we support, such as Slack, PagerDuty, ServiceNow, etc.
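The first-hop check behind a rule like this can be sketched in a few lines of Python. This is an illustration only, not how ThousandEyes implements alerting. The function names are hypothetical, the AS path is assumed to be listed from the monitor's side toward the origin (as in a BGP AS_PATH), and AS 64500 in the usage note is a documentation-range placeholder.

```python
from typing import Dict, List, Optional, Sequence, Set


def first_hop_from_origin(as_path: Sequence[int]) -> Optional[int]:
    """Return the AS adjacent to the origin, or None if there is no such hop.

    Assumption: as_path is ordered monitor side first, origin AS last.
    """
    # Collapse AS-path prepending (the same ASN repeated back to back).
    collapsed: List[int] = []
    for asn in as_path:
        if not collapsed or collapsed[-1] != asn:
            collapsed.append(asn)
    return collapsed[-2] if len(collapsed) >= 2 else None


def unexpected_first_hops(
    paths_by_monitor: Dict[str, Sequence[int]], expected: Set[int]
) -> Dict[str, int]:
    """Map each monitor to its first hop when that hop is not an expected ASN."""
    alerts: Dict[str, int] = {}
    for monitor, path in paths_by_monitor.items():
        hop = first_hop_from_origin(path)
        if hop is not None and hop not in expected:
            alerts[monitor] = hop
    return alerts


# Values taken from the example above: AT&T and Comcast are the expected
# first hops, and these are the two paths observed by the Tokyo-1 monitor.
EXPECTED = {7018, 33668}
BEFORE = (2497, 7018, 23068)
AFTER = (2497, 6453, 7922, 33668, 23068)
```

With these inputs, neither Tokyo-1 path raises an alert, because both AT&T and Comcast are expected first hops. A path such as `(2497, 64500, 23068)` would be reported, since AS 64500 is not in the expected set.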

Route Flapping

Route flapping occurs when routes alternate or are advertised and then withdrawn in rapid sequence, often resulting from equipment or configuration errors. Flapping often causes packet loss and results in performance degradation for traffic traversing the affected networks. Route flaps are visible in ThousandEyes as repeating spikes in route changes on the timeline.
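One way to turn "repeating spikes in route changes" into a concrete check is to count path changes inside a sliding time window. The sketch below assumes a hypothetical input format: a time-sorted list of `(timestamp_in_seconds, as_path)` tuples for one prefix as seen by one monitor, with `None` standing for a withdrawal. The window length and threshold are arbitrary illustrative values, not ThousandEyes defaults.

```python
from collections import deque
from typing import Deque, Iterable, Optional, Tuple

Path = Optional[Tuple[int, ...]]  # None means the route was withdrawn


def is_flapping(
    updates: Iterable[Tuple[float, Path]],
    window_s: float = 600.0,
    threshold: int = 4,
) -> bool:
    """Return True if `threshold` or more path changes occur within `window_s` seconds.

    Assumption: `updates` is sorted by timestamp.
    """
    change_times: Deque[float] = deque()
    previous: Path = None
    seen_first = False
    for ts, path in updates:
        # The first update only establishes the baseline path.
        if seen_first and path != previous:
            change_times.append(ts)
            # Drop changes that have fallen out of the window.
            while ts - change_times[0] > window_s:
                change_times.popleft()
            if len(change_times) >= threshold:
                return True
        previous, seen_first = path, True
    return False


# Illustrative paths (monitor side first, origin last).
VIA_A = (16552, 37271, 8075, 8068)
VIA_B = (16552, 37100, 8075, 8068)
DIRECT = (16552, 8075, 8068)
```

For example, `is_flapping([(0, VIA_A), (60, VIA_B), (120, DIRECT), (180, VIA_A), (240, VIA_B)])` reports flapping, while the same sequence spread over hours does not.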

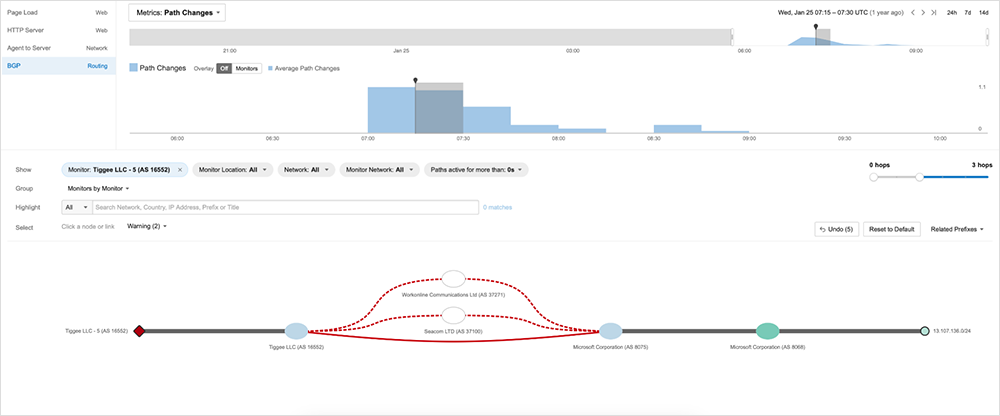

Figure 6 shows ThousandEyes BGP Route Visualization. As visible on the timeline, the prefix 13.107.136.0/24 experienced a significant number of path changes over an extended period of time. The visualization indicates that Microsoft (AS 8075) is advertising the prefix to AS 16552, while the same prefix was withdrawn from AS 37271 and AS 37100 within the selected time frame.

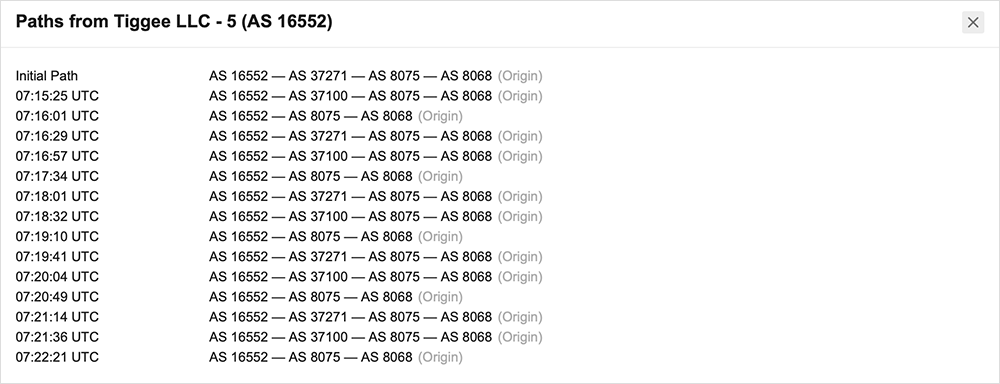

You can see more information about prefix activity in ThousandEyes by selecting 'View details of path changes' under one of the available BGP monitors (Tiggee LLC - 5, in the example above). Figure 7 reveals extensive BGP route flapping.

As shown in Figure 7, Microsoft experienced route flapping, during which they advertised the prefix from their AS 8068 to their AS 8075. Subsequently, they advertised the prefix externally to AS 37271. From there, it reached AS 16552, where the BGP monitor is hosted. Shortly after that, we observed a routing change, where upstream AS 37271 was replaced by AS 37100. Following this change, the shortest path was established through private peering between Microsoft’s ASN 8075 and AS 16552, where the BGP monitor is hosted. This pattern repeated multiple times, not only during the selected time frame on the timeline but throughout the entire event.

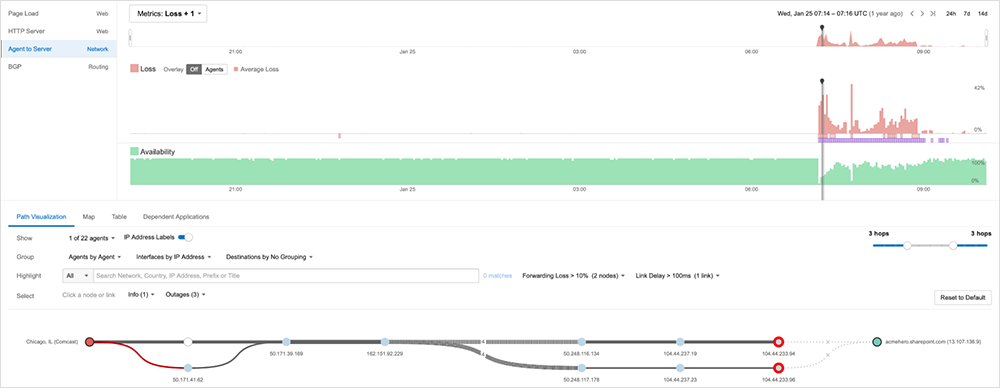

As mentioned earlier, control plane events like this one typically result in packet loss on the data plane, and this event was no exception. Figure 8 illustrates the Route Visualization for this specific event.

Figure 8 shows a significant amount of packet loss and its adverse impact on application health. The HTTP Availability metric is displayed in green. As packet loss spikes due to the BGP route flaps, HTTP Availability declines correspondingly during the event.

Route Hijacking

Route hijacking occurs when a network advertises a prefix that it does not control, either by mistake or maliciously, to deny service or inspect traffic. Since BGP advertisements are generally trusted among ISPs, errors or improper filtering by an ISP can be propagated quickly throughout routing tables around the Internet. For an Autonomous System operator, route hijacking is evident when the origin AS of your prefixes changes or when a more specific prefix is advertised by another party. In some cases, the effects may be localized to only a few networks. But in severe cases, hijacks can affect reachability from the entire Internet. You can set alerts in ThousandEyes to notify you of route changes or advertisement of new prefixes.
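The two signals described here, a changed origin AS and a more specific prefix from another party, can be expressed as a small classifier. This is a minimal sketch using Python's standard `ipaddress` module. The function name and the returned labels are hypothetical, and an announcement that merely covers your prefix (a less specific route) is treated as unrelated for simplicity.

```python
import ipaddress


def classify_announcement(
    announced_prefix: str,
    origin_asn: int,
    owned_prefix: str,
    expected_origin: int,
) -> str:
    """Classify an observed announcement relative to a prefix you operate.

    Returns one of: "unrelated", "expected", "origin-change", "more-specific".
    """
    announced = ipaddress.ip_network(announced_prefix)
    owned = ipaddress.ip_network(owned_prefix)

    # subnet_of() raises TypeError across IP versions, so compare versions first.
    if announced.version != owned.version or not announced.subnet_of(owned):
        return "unrelated"
    if origin_asn == expected_origin:
        return "expected"
    # Same prefix from a different origin, or a longer prefix inside ours.
    return "origin-change" if announced == owned else "more-specific"
```

As a usage example, `classify_announcement("192.0.2.0/24", 64511, "192.0.2.0/24", 64500)` yields `"origin-change"`, and announcing `"192.0.2.0/25"` from AS 64511 yields `"more-specific"`. The prefix and ASNs here are documentation-range placeholders.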

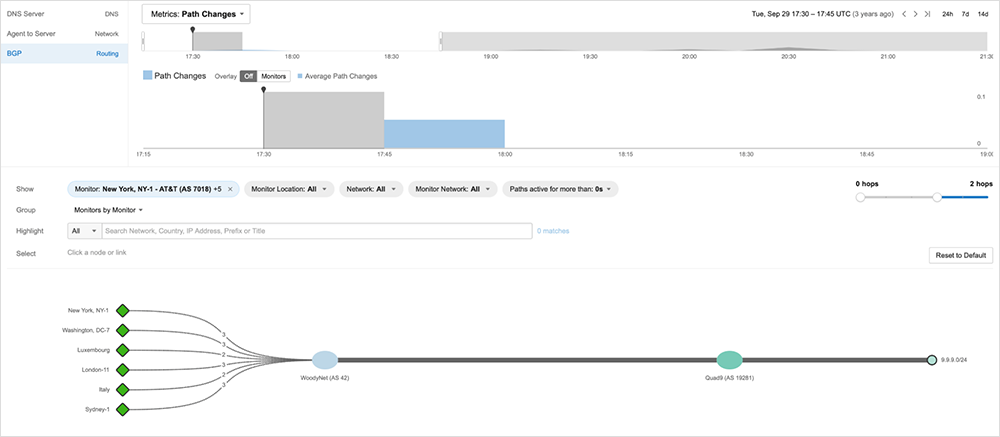

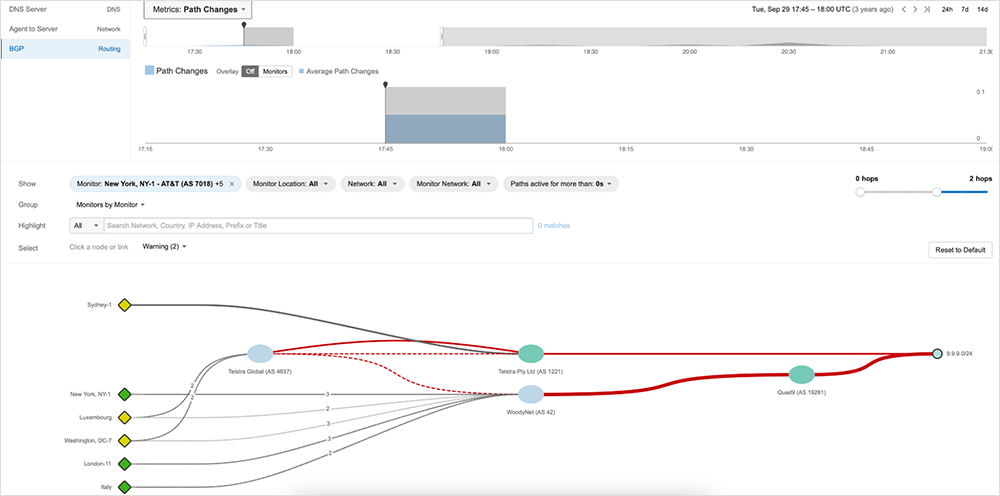

Figure 9 depicts the 9.9.9.0/24 prefix advertised by the widely used DNS provider, Quad9 (AS 19281). Everything is functioning as anticipated within the specified time frame on the timeline.

Shortly thereafter, as evident in Figure 10, Telstra (AS 1221) begins advertising the 9.9.9.0/24 prefix. In essence, they have hijacked Quad9's prefix. A solid red line denotes the prefix advertisement. Several networks have accepted the advertisement, leading to a change in the path, observable in the transition of color from green to yellow across various BGP collectors.

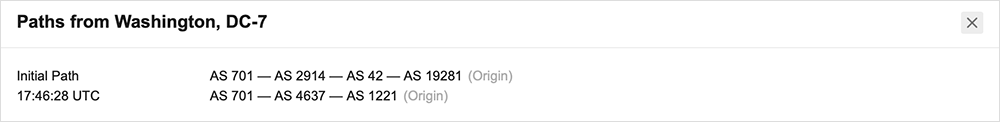

Examining the specifics of path alterations from the viewpoint of one of the impacted collectors reveals that the prefix was hijacked, as illustrated in Figure 11.

As seen in Figure 11, the prefix was initially advertised by Quad9 (AS 19281). At 17:46:28 UTC, the networking devices along the path to our monitor accepted a new path originating from Telstra (AS 1221), which clearly denotes a hijack.

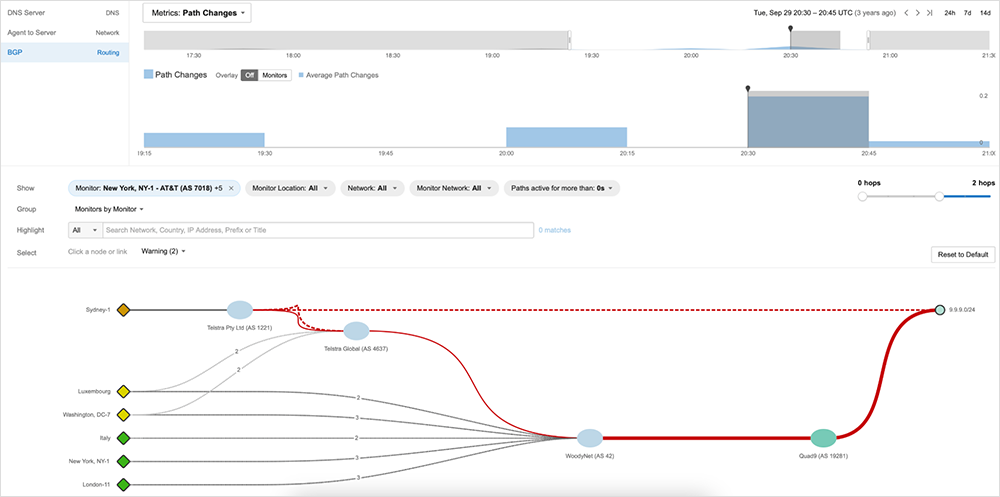

After an extended period, Telstra withdrew the prefix, as depicted in Figure 12 and indicated by the striped red line.

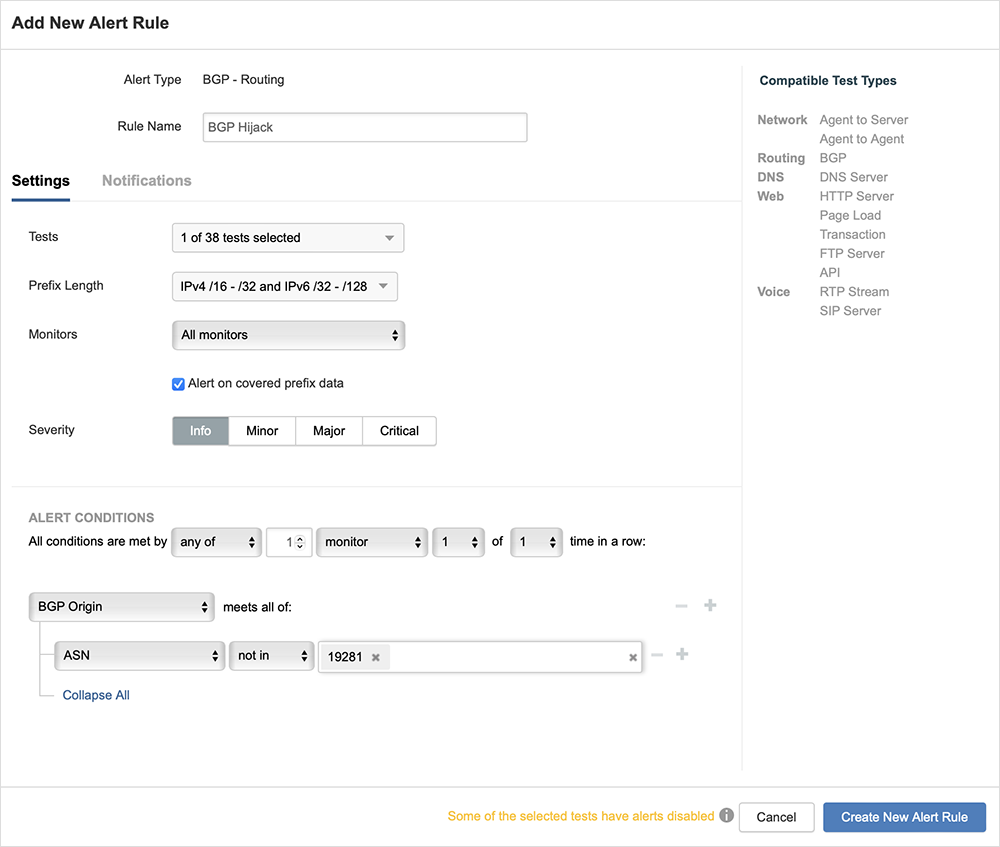

From an operational standpoint, monitoring and promptly alerting on issues like this are crucial. ThousandEyes provides comprehensive alerting capabilities for various scenarios, including hijacks. Using the example above, creating an alert rule to notify on prefix hijacks is as straightforward as following the steps outlined in Figure 13.

As seen in Figure 13, the alert rule would be triggered as soon as any BGP monitor detects the advertisement of a prefix with an origin Autonomous System different from the one specified in the alert rule. Implementing alert rules like this ensures a timely alert on the issue, ultimately providing operators more time to address it.

DDoS Mitigation

For companies using cloud-based DDoS mitigation providers, such as Prolexic and Verisign, BGP is a common way to shift traffic to these providers during an attack. Monitoring BGP routes during a DDoS attack is critical to confirm that traffic is routed properly to the mitigation provider’s scrubbing centers. In the case of DDoS mitigation, you’d expect to see your DDoS scrubbing provider in the path.
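Checking that the scrubbing provider appears in the path is straightforward to automate once you have AS paths from several vantage points. The following sketch assumes a hypothetical mapping of monitor name to observed AS path. The monitor names and the transit and origin ASNs are documentation-range placeholders, and the scrubber ASN is the Prolexic AS 32787 discussed later in this section.

```python
from typing import Dict, List, Sequence


def monitors_bypassing_scrubber(
    paths_by_monitor: Dict[str, Sequence[int]], scrubber_asn: int
) -> List[str]:
    """Return the monitors whose observed AS path does not include the scrubber.

    A monitor with an empty path (prefix not reachable) is also reported.
    """
    return sorted(
        monitor
        for monitor, path in paths_by_monitor.items()
        if scrubber_asn not in path
    )


SCRUBBER_ASN = 32787  # Prolexic, as named in the example in this section
ORIGIN_ASN = 64510    # placeholder for your own origin AS

observed = {
    "monitor-a": (64500, SCRUBBER_ASN, ORIGIN_ASN),  # routed via the scrubber
    "monitor-b": (64501, ORIGIN_ASN),                # still reaching the origin directly
}
```

Here `monitors_bypassing_scrubber(observed, SCRUBBER_ASN)` returns `["monitor-b"]`, pointing at a vantage point where traffic is not yet being routed through the scrubbing center.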

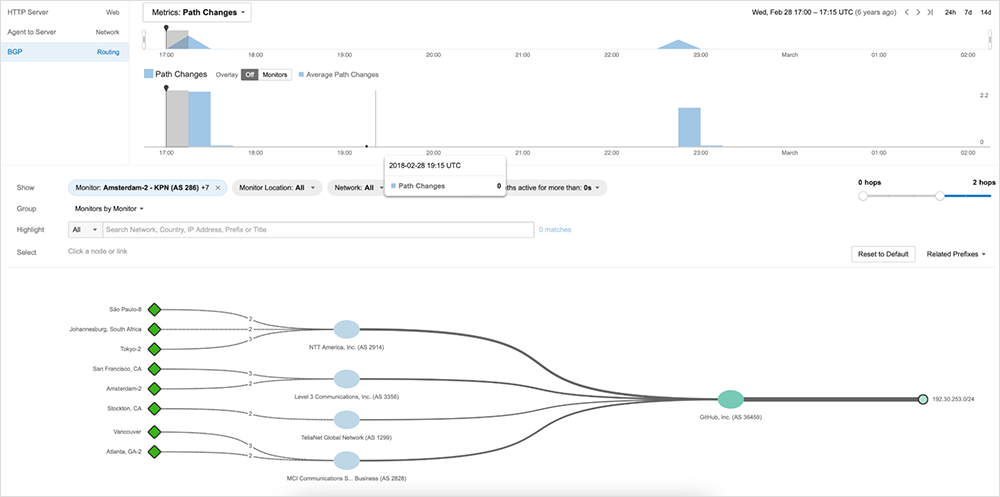

Figure 14 shows GitHub, the popular hosting service for software development, advertising one of its prefixes through several transit providers.

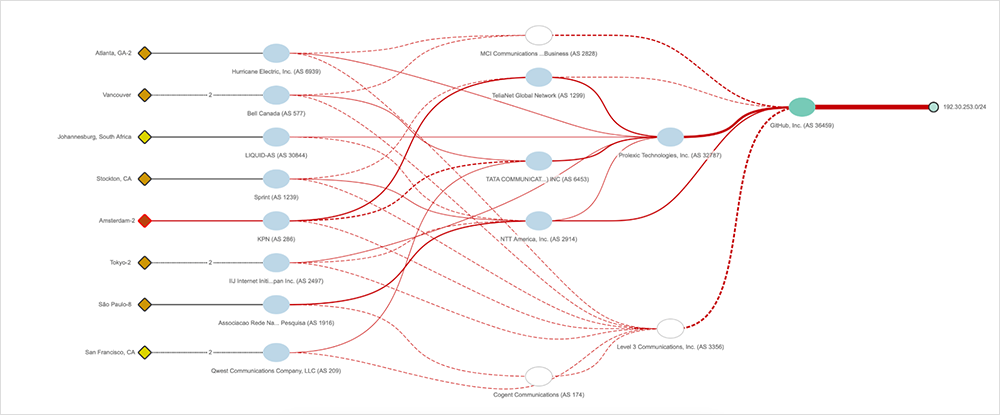

Shortly thereafter, GitHub resorted to traffic engineering in response to a 1.3 Tbps DDoS attack, one of the largest DDoS attacks recorded at the time. Figure 15 shows the effects of traffic engineering, during which GitHub withdrew its prefix from different transit providers and started advertising it exclusively through its DDoS scrubbing provider, Prolexic Technologies (AS 32787).

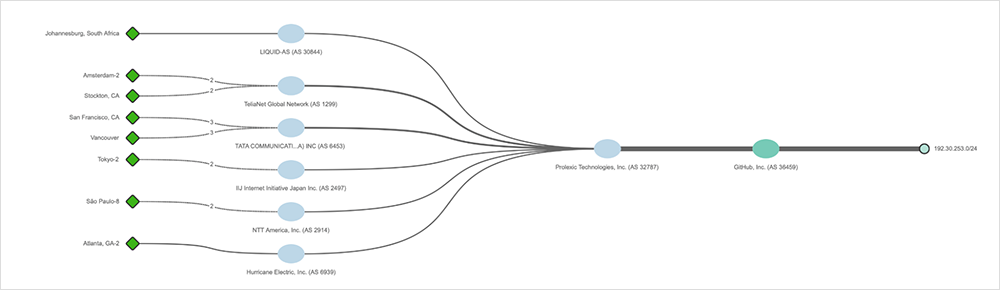

Once the traffic engineering was successfully executed, all the traffic was routed through the DDoS scrubbing provider, Prolexic Technologies (AS 32787), as visible in Figure 16.

Learning More

BGP monitoring and troubleshooting are crucial parts of managing most large networks. Visibility into BGP route changes and reachability gives operators a powerful way to correlate events and diagnose root causes. For more information about tracking and correlating BGP changes with ThousandEyes, sign up for a free trial of ThousandEyes.