On Friday, July 17th, at approximately 2:15PM PDT, Cloudflare’s DNS services, which are much broader in scope than most realize, suffered a significant disruption that impacted approximately half of their infrastructure. The incident was resolved less than 30 minutes later, but while it was ongoing, it had a massive impact on Internet users attempting to reach not only domains managed by Cloudflare but also any domain resolved through its public resolver. While a quickly released post-mortem identified a router configuration error as the root cause of the issue, several aspects of the incident point to a broader update that impacted its DNS service beyond the internal BGP route leak.

Cloudflare’s DNS services are extensive and underlie the reachability of many parts of the web. Not only do they manage DNS records for some of the largest and most well-known enterprises, as well as many smaller entities, but they also offer a public resolver—1.1.1.1—that has gained popularity since its launch in 2018. This service is one of the trusted DNS over HTTPS (DoH) resolvers that Mozilla has begun making default in its Firefox browser. Cloudflare’s infrastructure also supports two of the thirteen DNS roots, E and F—which sit at the apex of the DNS hierarchy, servicing all Internet users.

The incident reportedly impacted nineteen Cloudflare data center locations:

- San Jose

- Dallas

- Seattle

- Los Angeles

- Chicago

- Washington, DC

- Richmond

- Newark

- Atlanta

- London

- Amsterdam

- Frankfurt

- Paris

- Stockholm

- Moscow

- St. Petersburg

- São Paulo

- Curitiba

- Porto Alegre

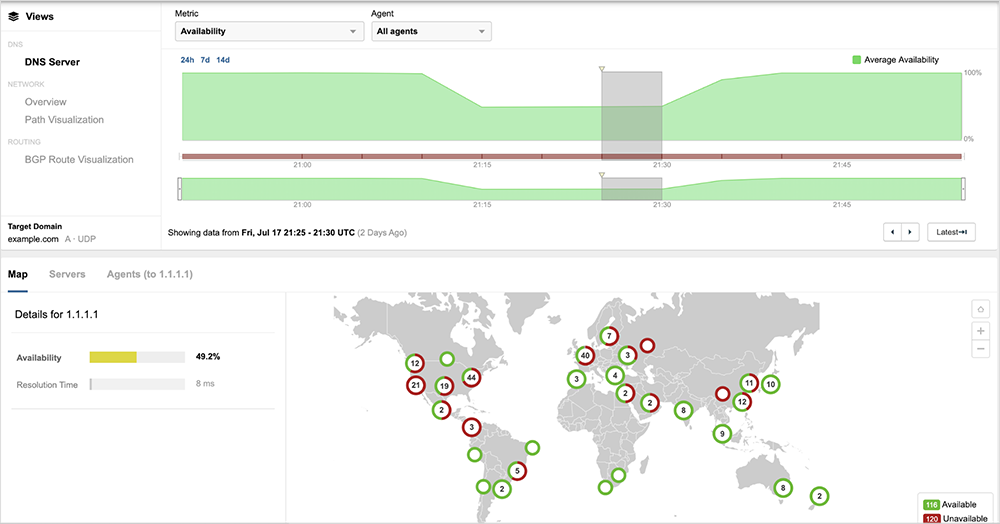

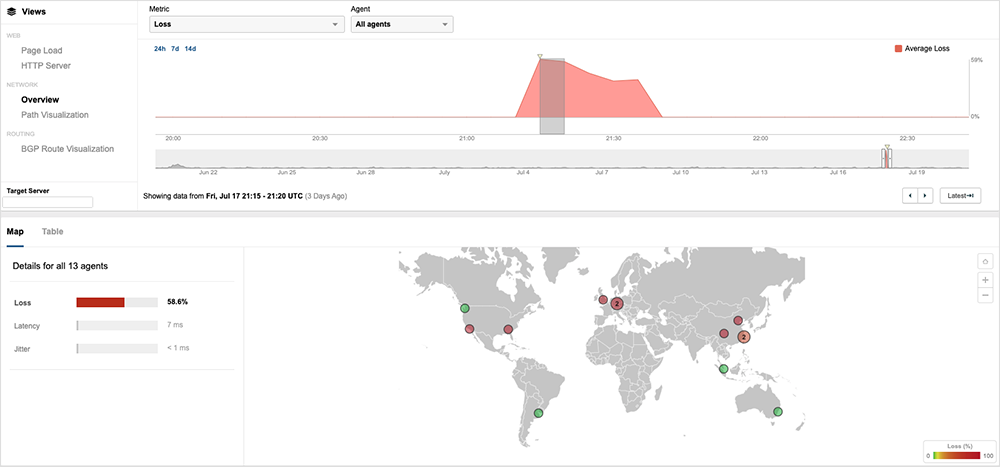

ThousandEyes observed the incident from hundreds of global vantage points monitoring multiple parts of Cloudflare’s DNS service. Starting at approximately 2:10PM PDT, roughly half of the vantage points monitoring the public resolver were unable to reach 1.1.1.1 to resolve the A record for example.com.

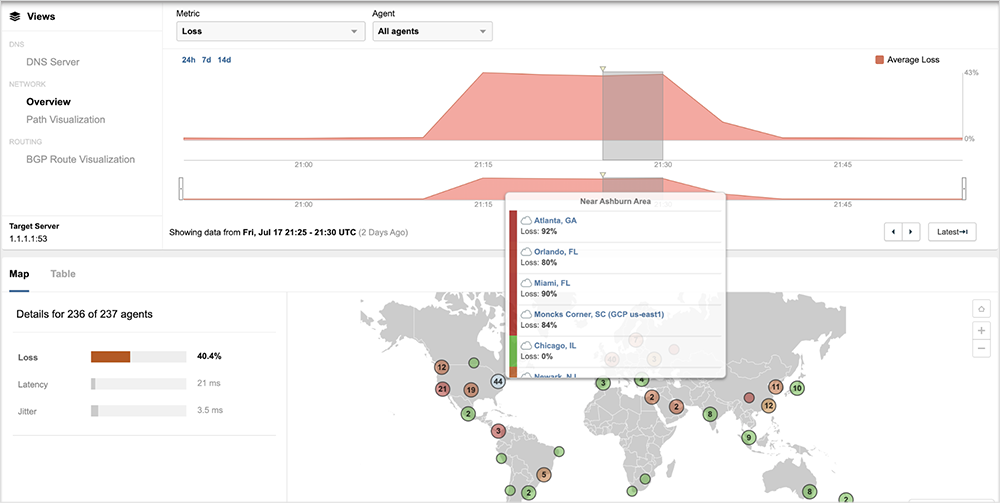

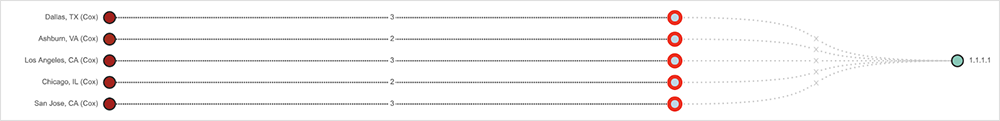

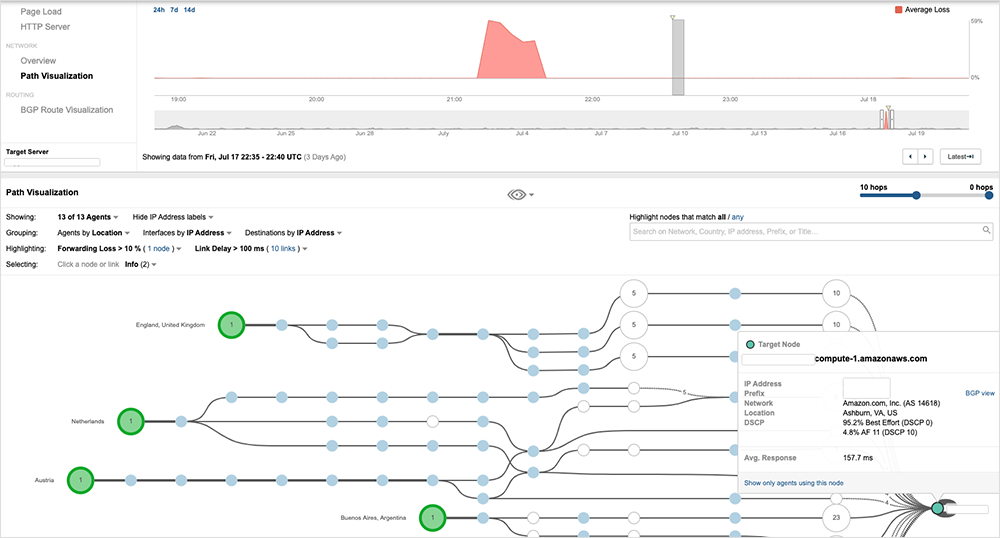

The alternative address for the service, 1.0.0.1, was similarly affected, as were its IPv6 addresses. The network paths from impacted locations show extremely high levels of packet loss at Cloudflare’s network edge.
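The kind of check described above can be approximated with a few lines of standard-library Python. This is only a minimal sketch of a single UDP probe, not how ThousandEyes performs its measurements; the function names `build_query`, `parse_header`, and `probe` are illustrative, and the two-second timeout is an assumed value.

```python
import random
import socket
import struct


def build_query(name: str, qtype: int = 1, qclass: int = 1) -> bytes:
    """Encode a single-question DNS query (defaults: type A, class IN)."""
    query_id = random.getrandbits(16)
    # Header: ID, flags (only the RD bit set), QDCOUNT=1, other counts 0.
    header = struct.pack("!HHHHHH", query_id, 0x0100, 1, 0, 0, 0)
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in name.rstrip(".").split(".")
    ) + b"\x00"
    return header + qname + struct.pack("!HH", qtype, qclass)


def parse_header(response: bytes) -> dict:
    """Extract the ID, response code, and answer count from a DNS response."""
    if len(response) < 12:
        raise ValueError("truncated DNS header")
    query_id, flags, _qd, ancount, _ns, _ar = struct.unpack(
        "!HHHHHH", response[:12]
    )
    return {"id": query_id, "rcode": flags & 0x000F, "answers": ancount}


def probe(resolver: str, name: str = "example.com", timeout: float = 2.0) -> str:
    """Send one UDP query and return 'ok', 'error', or 'timeout'."""
    query = build_query(name)
    family = socket.AF_INET6 if ":" in resolver else socket.AF_INET
    with socket.socket(family, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)  # assumed value; tune per vantage point
        try:
            sock.sendto(query, (resolver, 53))
            response, _ = sock.recvfrom(512)
        except socket.timeout:
            return "timeout"
        except OSError:
            return "error"
    try:
        info = parse_header(response)
    except ValueError:
        return "error"
    if info["id"] != struct.unpack("!H", query[:2])[0]:
        return "error"  # response does not match our query
    return "ok" if info["rcode"] == 0 and info["answers"] > 0 else "error"
```

Calling `probe("1.1.1.1")` from several networks and comparing the results gives a rough view of reachability. A single lost UDP packet also shows up as a timeout, so repeated probes are needed before concluding that a resolver is unreachable.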

Users connecting to Cloudflare through US broadband providers experienced different levels of availability. Vantage points located near affected PoPs and connected to providers such as Verizon and Cox Communications were not able to reach Cloudflare DNS services during the incident.

However, not all users located near affected Cloudflare data centers lost service. Vantage points in Comcast’s network in Ashburn (in the Washington, DC area) and Dallas, two of the locations Cloudflare noted in its post-mortem, were able to connect to Cloudflare’s DNS servers because they were routed to unaffected sites. Because the 1.1.1.1 resolver is anycast, variations in routing can sometimes lead a user to connect to a site farther away than the closest one available. In this case, routing to a “less optimal” site enabled users in those locations to maintain service reachability.
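To see which anycast site a given vantage point actually lands on, one common technique is a CHAOS-class TXT query for `id.server` (described in RFC 4892). The sketch below assumes the resolver answers that query with a site identifier, which Cloudflare's resolver is documented to do with an airport-style code. The helper names are illustrative, and the parser handles only the simple response shape expected here.

```python
import socket
import struct
from typing import Optional

CHAOS, TXT = 3, 16  # DNS class CH and record type TXT


def build_query(name: str, qtype: int, qclass: int, query_id: int = 0x1234) -> bytes:
    """Encode a single-question DNS query with recursion desired."""
    header = struct.pack("!HHHHHH", query_id, 0x0100, 1, 0, 0, 0)
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii") for label in name.split(".")
    ) + b"\x00"
    return header + qname + struct.pack("!HH", qtype, qclass)


def skip_name(msg: bytes, offset: int) -> int:
    """Return the offset just past a (possibly compressed) domain name."""
    while True:
        length = msg[offset]
        if length & 0xC0 == 0xC0:  # compression pointer: 2 bytes, ends the name
            return offset + 2
        if length == 0:  # root label terminates the name
            return offset + 1
        offset += 1 + length


def first_txt(msg: bytes) -> Optional[str]:
    """Return the first TXT string found in the answer section, if any."""
    _id, _flags, qdcount, ancount = struct.unpack("!HHHH", msg[:8])
    offset = 12
    for _ in range(qdcount):
        offset = skip_name(msg, offset) + 4  # skip QTYPE and QCLASS
    for _ in range(ancount):
        offset = skip_name(msg, offset)
        rtype, _rclass, _ttl, rdlength = struct.unpack(
            "!HHIH", msg[offset:offset + 10]
        )
        offset += 10
        if rtype == TXT and rdlength > 0:
            strlen = msg[offset]
            return msg[offset + 1:offset + 1 + strlen].decode("ascii", "replace")
        offset += rdlength
    return None


def serving_site(resolver: str = "1.1.1.1", timeout: float = 2.0) -> Optional[str]:
    """Ask the resolver which site answered; None on timeout or error."""
    query = build_query("id.server", TXT, CHAOS)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)  # assumed value
        try:
            sock.sendto(query, (resolver, 53))
            response, _ = sock.recvfrom(512)
        except OSError:
            return None
    return first_txt(response)
```

Two vantage points in the same city that report different site identifiers are being routed to different anycast instances, which is the situation that kept some users connected during the incident.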

DNS Roots See Similar Impacts

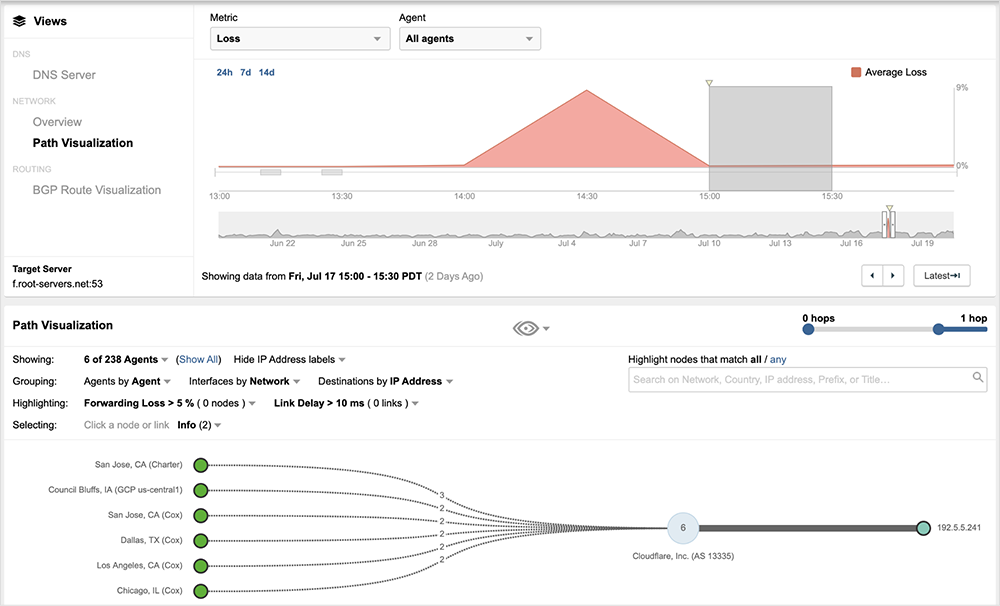

The E and F DNS roots, both of which Cloudflare supports to some degree, experienced disruptions similar to those affecting its core services. The impact began sometime after 2PM PDT, and by approximately 2:30PM PDT, traffic loss was detected in reaching both roots.

Edge Network Impacted Beyond DNS Services

There is some indication that the incident also disrupted other Cloudflare edge services, though this did not appear to be as widespread as the DNS disruption. ThousandEyes observed a Cloudflare-hosted service experiencing near-identical disruption during the incident, with extremely high levels of packet loss at Cloudflare’s network edge preventing reachability of the service.

It’s unclear whether the disruption to these edge services was collateral impact from the outage or whether the internally leaked prefixes extended beyond those covering Cloudflare’s DNS services.

Outage Ripple Effects

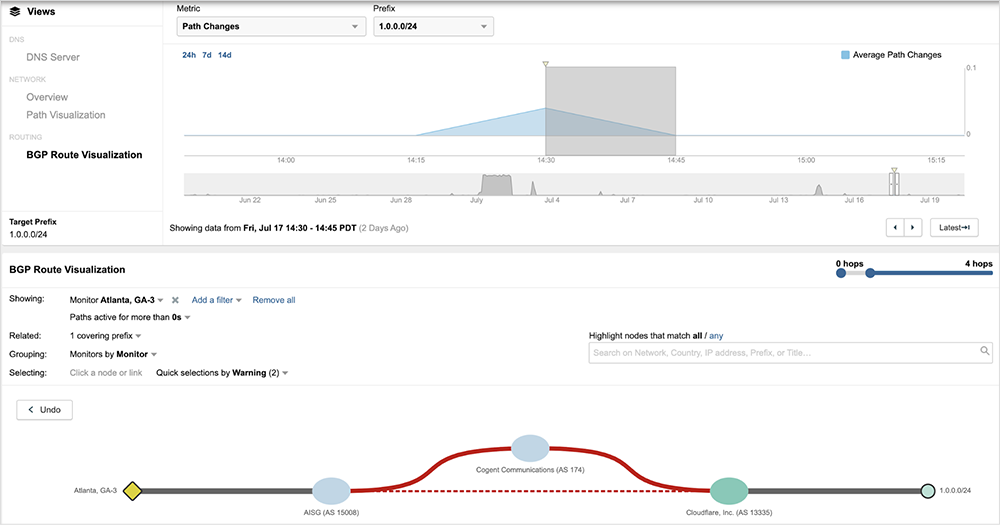

As the outage began to resolve, ThousandEyes observed several “adjustments” being made by Cloudflare’s customers and neighboring service providers. In one instance, AISG, a provider directly connected to Cloudflare, revoked its direct routes to Cloudflare for prefixes covering Cloudflare’s DNS services, instead steering traffic indirectly through Cogent Communications.

Route changes of this kind are commonly made as a remediation measure to change the path to an impacted service. In this case, AISG may have been hoping that the indirect route through Cogent would direct traffic to a Cloudflare site that was not impacted. Nine hours later, AISG reverted to its pre-outage state and resumed routing directly to Cloudflare.
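A shift like this shows up in BGP data as a change in the AS path toward the affected prefixes. The sketch below labels a path as direct or indirect and reports transitions between snapshots. AS13335 (Cloudflare) and AS174 (Cogent) are real ASNs, but `PEER_ASN` is a hypothetical private-use placeholder rather than the provider's actual ASN, and the snapshot labels in the usage note are illustrative rather than observed data.

```python
CLOUDFLARE_ASN = 13335  # Cloudflare
COGENT_ASN = 174        # Cogent
PEER_ASN = 64512        # hypothetical placeholder (private-use ASN) for the neighbor


def classify(as_path, neighbor=PEER_ASN, origin=CLOUDFLARE_ASN):
    """Label a path as 'direct', 'via AS<n>', or 'unrelated'."""
    # Collapse AS-path prepending (consecutive repeats of the same ASN).
    path = [asn for i, asn in enumerate(as_path) if i == 0 or asn != as_path[i - 1]]
    if not path or path[-1] != origin or neighbor not in path:
        return "unrelated"
    # ASNs strictly between the neighbor and the origin are transit hops.
    hops = path[path.index(neighbor) + 1:-1]
    if not hops:
        return "direct"
    return "via " + ", ".join(f"AS{asn}" for asn in hops)


def changes(snapshots):
    """Yield (label, old_state, new_state) whenever the path state changes.

    `snapshots` is an ordered list of (label, as_path) pairs.
    """
    previous = None
    for label, as_path in snapshots:
        state = classify(as_path)
        if previous is not None and state != previous:
            yield (label, previous, state)
        previous = state
```

Feeding it three illustrative snapshots, `[("before", [64512, 13335]), ("during", [64512, 174, 13335]), ("after", [64512, 13335])]`, yields one transition to `via AS174` and a later transition back to `direct`, mirroring the pattern described above.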

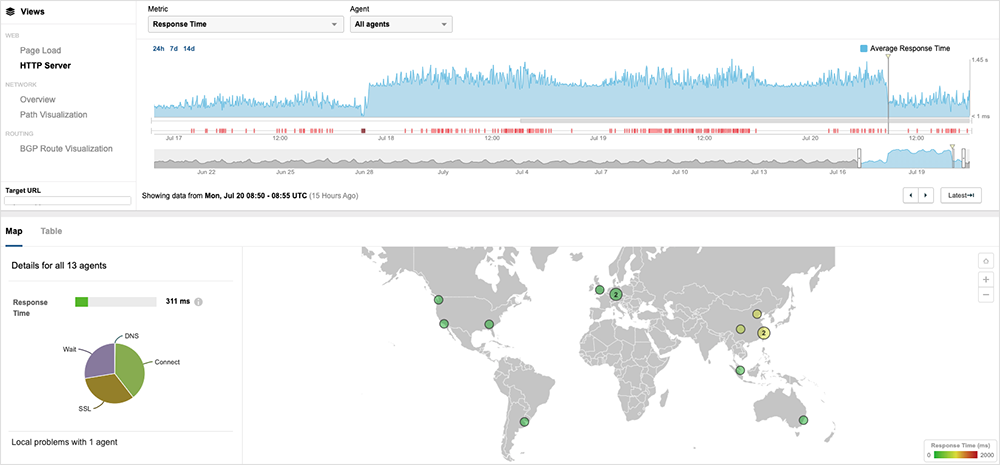

Around the same time, a customer of Cloudflare made a change to its serving architecture, routing users directly to its origin hosted within AWS. Throughout the weekend, users from some locations connected to the AWS origin, while others connected to Cloudflare’s CDN edge.

This change led to higher HTTP server response times for the site; however, the impact on its enterprise customers was likely minimal given that the change was in place over the weekend.
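One rough way to tell which side served a given request is to inspect the response headers. The sketch below assumes that responses passing through Cloudflare's edge carry a `CF-RAY` header or `Server: cloudflare`, which is Cloudflare's usual behavior. Anything else is lumped together as "origin-or-other", since the origin's own headers are not known here. The function names are illustrative.

```python
import urllib.request
from collections import Counter


def served_by(headers: dict) -> str:
    """Classify a response as served via Cloudflare's edge or not."""
    normalized = {key.lower(): value for key, value in headers.items()}
    server = normalized.get("server", "").strip().lower()
    # Assumption: Cloudflare-proxied responses include CF-RAY and/or
    # identify the server as "cloudflare".
    if "cf-ray" in normalized or server == "cloudflare":
        return "cloudflare-edge"
    return "origin-or-other"


def tally(observations) -> Counter:
    """Count classifications across (vantage_point, headers) observations."""
    return Counter(served_by(headers) for _vantage, headers in observations)


def fetch_headers(url: str, timeout: float = 5.0) -> dict:
    """Fetch response headers with a HEAD request (performs network I/O)."""
    request = urllib.request.Request(url, method="HEAD")
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return dict(response.headers.items())
```

Running `served_by(fetch_headers(url))` from several locations and passing the results to `tally` would show a mix of both labels while a split configuration like this one is in place.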

The following Monday, July 20th, this customer reverted all traffic to Cloudflare. Cloudflare’s root cause analysis (RCA) statement alluded to a fix scheduled for its backbone network on Monday, July 20th, which may explain the delay in switching all users back exclusively to Cloudflare’s edge.

Takeaways

The Internet is a global system of interconnected autonomous networks that is notable for its decentralization; however, the extreme centralization of critical, foundational services, such as DNS, can lead to major and far-reaching impact. Enterprises and users rely on a complex, often unseen, web of dependencies, any of which can impact the reachability of their services. Understanding these dependencies and where additional resilience measures are needed is critical to maintaining business continuity.
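As one small illustration of a resilience measure, a client can fall back across resolvers run by independent operators instead of depending on a single one. The sketch below is only an outline under stated assumptions: `query_fn` is a hypothetical caller-supplied function that takes a resolver address and a name, returns a list of answers, and raises `OSError` (which includes timeouts) on failure. The resolver list in the usage note is an example, not a recommendation from the analysis above.

```python
def resolve_with_fallback(name, resolvers, query_fn):
    """Try each resolver in order until one returns a non-empty answer.

    Returns (resolver, answer, failures), where `failures` lists the
    resolvers that were tried unsuccessfully and why. If every resolver
    fails, resolver and answer are both None.
    """
    failures = []
    for resolver in resolvers:
        try:
            answer = query_fn(resolver, name)
        except OSError as exc:  # timeouts and other network errors
            failures.append((resolver, type(exc).__name__))
            continue
        if answer:
            return resolver, answer, failures
        failures.append((resolver, "empty answer"))
    return None, None, failures
```

For example, `resolve_with_fallback("example.com", ["1.1.1.1", "8.8.8.8", "9.9.9.9"], query_fn)` would move on to the second resolver if the first times out. The `failures` list doubles as a record of which dependency was unavailable at the time.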

Visibility into critical service components, be it your DNS infrastructure or your CDN provider, along with visibility into Internet routing, is critical to understanding architectural risks as well as the impact of outages on service delivery. Armed with this information, you can not only understand the “why” behind the oft-repeated phrase “the Internet is down” but also put yourself in a position to expedite communication and remediation in the future.