In a previous blog post, we covered the reasons why we embarked on releasing a Public Cloud Performance Benchmark Report, the breadth and depth of data gathered, and how the report can help IT leaders and architects to make data-driven decisions. In this post, we’re going to explore a couple of the more interesting phenomena we discovered through the report data collection.

Very High Performance Variability for AWS in Asia

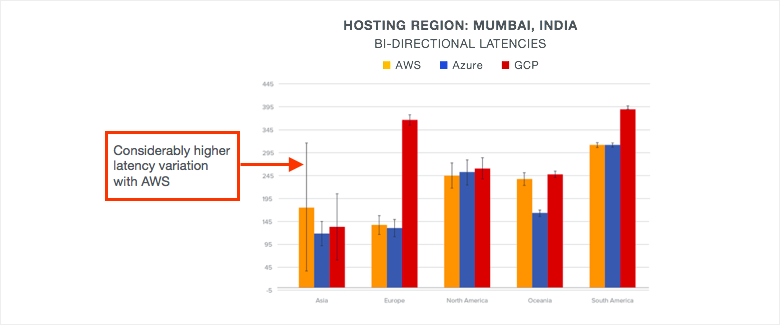

We knew we were seeing something interesting when we noticed the long black line at the far left of the network latency graph in Figure 1. The graph shows latency from the geographical user regions along the bottom to the Mumbai regions of AWS, Azure and GCP. The black line represents the standard deviation in network latency. Note that for most regions and providers, the black lines are relatively short, meaning that the measured network latency from those locations stayed within a relatively tight range over 30 days.
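To make the standard deviation concrete, here’s a minimal Python sketch of how a tight and a wide latency range compare. The sample values and the `summarize_latency` helper are made up for illustration; they are not taken from the report data or from any ThousandEyes API.

```python
from statistics import mean, pstdev


def summarize_latency(samples_ms):
    """Return (mean, standard deviation) for a list of latency samples in ms."""
    return mean(samples_ms), pstdev(samples_ms)


# Hypothetical samples, invented for this example (not report data).
steady = [120.0, 122.0, 118.0, 121.0, 119.0]
erratic = [120.0, 180.0, 95.0, 240.0, 110.0]

for label, samples in (("steady", steady), ("erratic", erratic)):
    avg, spread = summarize_latency(samples)
    print(f"{label}: mean={avg:.1f} ms, stddev={spread:.1f} ms")
```

A short black line in the graph corresponds to something like the `steady` series; a long one corresponds to something like `erratic`, where the average alone says little about what any individual user will experience.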

But for user locations in Asia connecting to the AWS region, the standard deviation is dramatically higher. This means that for those locations, performance predictability is very poor. Why was this happening?

We looked at the network paths for these latency measurements and discovered an interesting phenomenon. The paths to Azure and GCP were entering the providers’ respective backbones close to the user locations. With AWS, however, the paths were largely transiting the public Internet from the user locations, only entering the AWS backbone close to the region itself.

The Backbone Less Traveled

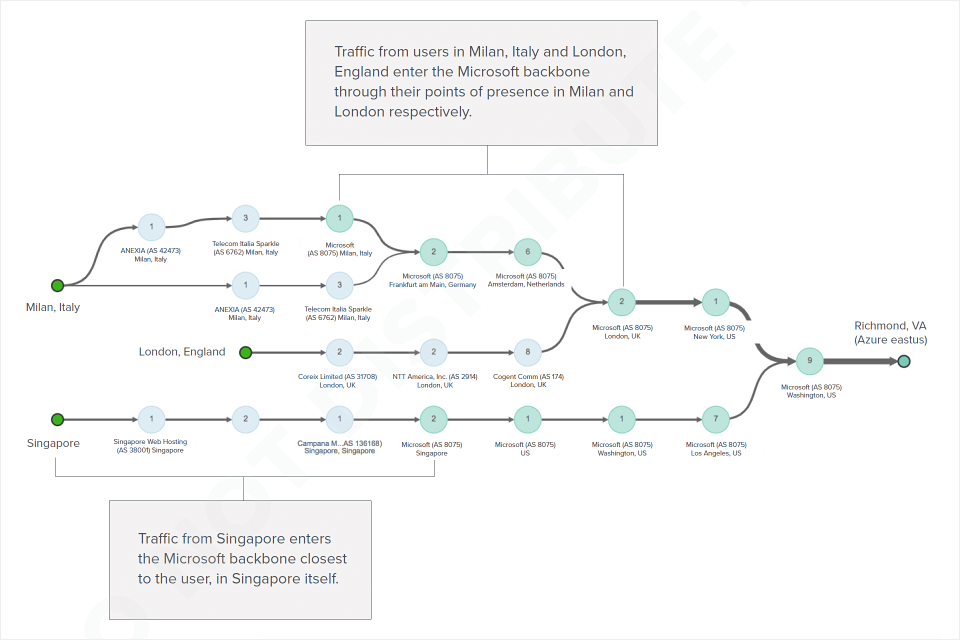

This behavior was different enough that we decided to check whether it was a broad pattern or just a fluke in Asia, and it turns out that this is the norm. In Figure 2 you can see the ThousandEyes path visualization from Milan, London and Singapore (represented by the dark green dots on the left) to the Microsoft Ashburn region. Note that the paths go through relatively few Internet hops (shown by the light blue circles) and enter the Microsoft backbone close to the user locations. The path from Milan enters the Microsoft backbone in Milan, the path from London enters in London and the path from Singapore enters in Singapore. This means that the Microsoft backbone is transporting the traffic for most of the geographical distance to the target region.

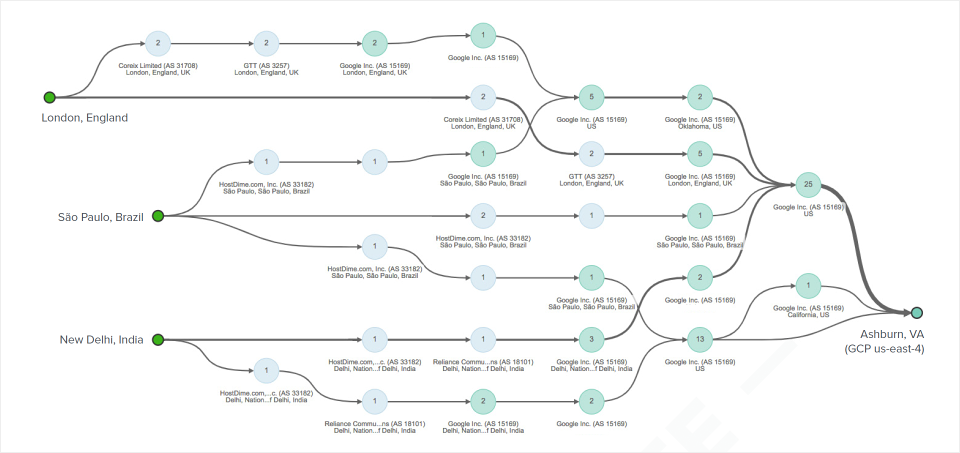

In Figure 3, we can see paths from London, Sao Paulo and New Delhi to the GCP Ashburn region. Again, note that the paths traverse relatively few light blue Internet hops and enter the Google backbone in London, Sao Paulo and New Delhi respectively, staying on it most of the way.

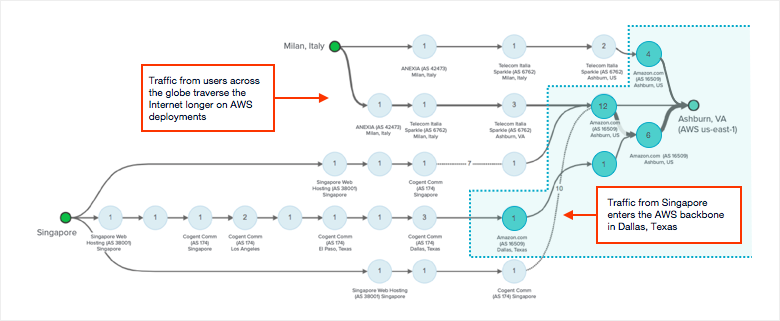

These two examples stand in stark contrast to what we see in Figure 4, which shows paths from user locations in Milan and Singapore on the left to the AWS Ashburn region on the right. Note that for both Milan and Singapore, traffic transits the public Internet most of the way, only entering the AWS backbone in Ashburn.
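One rough way to express the difference between these paths is to ask how far along a path the traffic first lands on the provider’s own network. The sketch below assumes each hop has already been labeled with a city and a network owner; the `Hop` type, the labels and the example paths are hypothetical and only loosely modeled on the figures, not exported from ThousandEyes.

```python
from dataclasses import dataclass


@dataclass
class Hop:
    city: str
    network: str  # hypothetical owner label: "internet" or a provider name


def backbone_entry_index(path, provider):
    """Return the index of the first hop on the provider's network, or None."""
    for index, hop in enumerate(path):
        if hop.network == provider:
            return index
    return None


def internet_hop_share(path, provider):
    """Fraction of hops traversed before the path enters the provider's network."""
    if not path:
        return 0.0
    entry = backbone_entry_index(path, provider)
    # A path that never reaches the provider's network is all public Internet.
    return 1.0 if entry is None else entry / len(path)


# Made-up paths for illustration only.
milan_to_microsoft = [
    Hop("Milan", "internet"),
    Hop("Milan", "microsoft"),
    Hop("Paris", "microsoft"),
    Hop("Ashburn", "microsoft"),
]
milan_to_aws = [
    Hop("Milan", "internet"),
    Hop("Frankfurt", "internet"),
    Hop("New York", "internet"),
    Hop("Ashburn", "aws"),
]

for name, path, provider in (
    ("Milan to Microsoft", milan_to_microsoft, "microsoft"),
    ("Milan to AWS", milan_to_aws, "aws"),
):
    entry = path[backbone_entry_index(path, provider)]
    share = internet_hop_share(path, provider)
    print(f"{name}: enters backbone in {entry.city}, {share:.0%} of hops on the Internet")
```

Hop count is only a proxy for geographical distance, so treat the share as a quick indicator rather than a measurement.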

As we all know, the Internet is a best-effort network. Furthermore, in places where the Internet is less mature, such as parts of Asia, we’ll tend to see higher variability in performance. This is why connectivity to AWS incurred such high standard deviations in latency over our 30-day measurement period. We were frankly surprised by this, as were many of the analysts and IT leaders that we briefed. It just goes to show that there’s nothing like having real, measured data to understand the cloud. The Public Cloud Performance Benchmark Report is the first network performance study of the Big 3 providers at its breadth and depth. To find out more about why we produced the report and the size of the metric data gathered, check out the previous blog post.

AWS circumvents this inconsistency through a new service, AWS Global Accelerator, which was announced at re:Invent two weeks after the report was published. Hop over to my last blog post to find out how.

We also observed some other interesting network performance phenomena in various parts of the world, which you can read about by downloading the full report.

Ultimately, cloud architectures are complex and every organization has to make its own choices about cloud services, pricing and performance. The Public Cloud Performance Benchmark Report is a resource for IT leaders and architects to reference in formulating their cloud strategies and connectivity architectures. As mentioned though, there’s nothing like having real data at your fingertips. So, if you need this type of visibility on an ongoing basis (a great idea!), reach out to arrange a discussion of your needs and request a demo of ThousandEyes today.