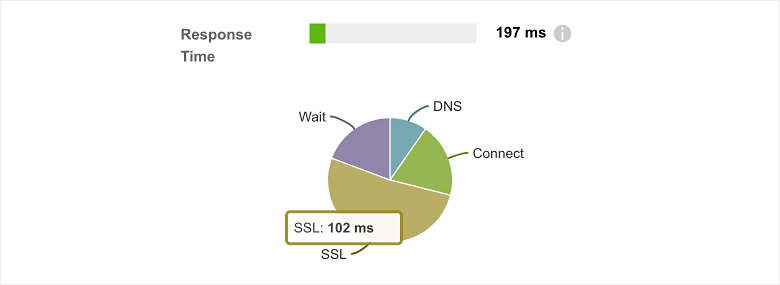

Website performance depends on a number of external factors, such as network latency, server load, and the use of a CDN. A good metric of a website’s performance is Response Time, which measures how quickly a web server responds to a client’s request for a web resource. It therefore reflects multiple external dependencies, including DNS resolution, network latency, CDN proxies, and server load. Response Time is also called Time to First Byte (TTFB) and is defined as the sum of DNS time, TCP connection time, SSL time, and wait time.
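Because Response Time is defined as a simple sum of phases, it can be computed directly from per-phase timings. The sketch below assumes timings in milliseconds. The `PhaseTimings` class, its field names, and the example values are hypothetical illustrations, not measurements and not a ThousandEyes API.

```python
from dataclasses import dataclass


@dataclass
class PhaseTimings:
    """Per-phase timings for one HTTP(S) request, in milliseconds (hypothetical structure)."""
    dns_ms: float
    tcp_connect_ms: float
    ssl_ms: float
    wait_ms: float

    def response_time_ms(self) -> float:
        # Response Time (TTFB) = DNS time + TCP connection time + SSL time + wait time
        return self.dns_ms + self.tcp_connect_ms + self.ssl_ms + self.wait_ms

    def slowest_phase(self) -> str:
        # Name of the phase that contributes the most to Response Time
        phases = {
            "dns": self.dns_ms,
            "tcp_connect": self.tcp_connect_ms,
            "ssl": self.ssl_ms,
            "wait": self.wait_ms,
        }
        return max(phases, key=phases.get)


# Illustrative values only: a long-distance request where the SSL phase dominates
timings = PhaseTimings(dns_ms=40, tcp_connect_ms=150, ssl_ms=300, wait_ms=160)
print(timings.response_time_ms())  # 650
print(timings.slowest_phase())     # ssl
```

Breaking Response Time down this way makes it easy to see which phase to target first; in this made-up example, SSL accounts for nearly half of the total.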

The SSL phase of an HTTPS GET request can often be the slowest component of Response Time, because the SSL handshake requires two network round trips before encrypted data transfer can begin. There’s a great discussion on this topic in our blog post on the anatomy of an HTTP(S) request. One of the primary goals of TLS 1.3 is to address this inefficiency in the TLS handshake by reducing the number of round trips required to establish an encrypted session and by simplifying cipher negotiation.

Why TLS Handshake Time Matters

Security and performance have historically been seen as tradeoffs. Enforcing stricter web security requirements has traditionally meant higher load on clients, servers, and networks. As HTTPS has become the de facto standard for web communication over the last few years, this extra cost of security has been a necessary but problematic burden for businesses looking to expand their digital footprint.

To quantify the impact of slow SSL times, imagine a user in London, UK trying to access a service hosted in San Jose, CA. Every network round trip adds approximately 150ms of latency to a web request. A TLS handshake requires two round trips, adding up to 300ms for every new HTTPS session. Combined with DNS resolution and TCP connection time, it could take well over 500ms before the first byte of content can be transferred. According to a study by researchers at Microsoft, an additional 250ms of delay resulted in a 1.5% decrease in bing.com revenue. This has prompted a healthy debate in the Internet security community about the need to revisit the TLS handshake process (among other security enhancements) to provide better security in a shorter negotiation cycle. TLS 1.3 accomplishes just that.
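The London to San Jose arithmetic can be written out as a rough model. This is a sketch under stated assumptions: DNS resolution takes a fixed, hypothetical 50ms; the TCP handshake and the HTTP request/response each cost one round trip; and server processing time is zero. The function name `time_to_first_byte_ms` is illustrative only.

```python
# Rough model of the London -> San Jose example.
# Assumptions (not from the source): DNS takes dns_ms, the TCP handshake and the
# HTTP request/response each cost one round trip, and server processing time is zero.
RTT_MS = 150  # approximate London <-> San Jose round-trip time


def time_to_first_byte_ms(rtt_ms: float, dns_ms: float, tls_round_trips: int) -> float:
    tcp_ms = 1 * rtt_ms                  # TCP handshake: SYN out, SYN-ACK back
    tls_ms = tls_round_trips * rtt_ms    # TLS handshake round trips
    request_ms = 1 * rtt_ms              # HTTP request out, first byte back
    return dns_ms + tcp_ms + tls_ms + request_ms


DNS_MS = 50  # hypothetical DNS lookup time
print(2 * RTT_MS)                                # two-round-trip TLS handshake alone: 300
print(time_to_first_byte_ms(RTT_MS, DNS_MS, 2))  # two-round-trip handshake: 650
print(time_to_first_byte_ms(RTT_MS, DNS_MS, 1))  # one-round-trip handshake: 500
```

Under these assumptions, each round trip removed from the handshake saves a full 150ms, which is why the round-trip count matters so much more than raw cryptographic speed on long-distance paths.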

TLS 1.2 and Before

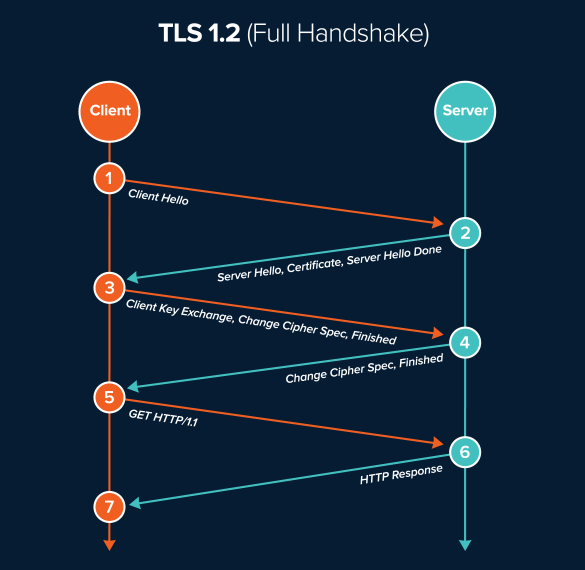

The TLS handshake ensures that the appropriate cryptographic information is shared between client and server before secure data transfer can take place. In TLS 1.2 and earlier, the starting assumption is that the client knows nothing about the server. The first round trip is spent learning the server’s capabilities so that the client and server can agree on how to share the master secret used to encrypt the data transfer. As shown in the diagram above, this first round trip of the SSL handshake consists of the Client Hello, which advertises the cipher suites the client supports (including its key exchange options), and the server’s reply: the Server Hello with the chosen cipher suite, followed by the server certificate containing the server’s public key.
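The cipher suite agreement in this first round trip can be sketched as a simple intersection. This assumes the server applies its own preference order, which is a common server configuration but not the only policy; the function name and suite lists are illustrative, although the suite names themselves are standard IANA names.

```python
from typing import Optional, Sequence


def choose_cipher_suite(client_offer: Sequence[str],
                        server_preference: Sequence[str]) -> Optional[str]:
    """Pick the cipher suite the server returns in its Server Hello.

    Assumes the server honors its own preference order. Returns None when there is
    no overlap; a real TLS 1.2 server would then abort with a handshake_failure alert.
    """
    offered = set(client_offer)
    for suite in server_preference:
        if suite in offered:
            return suite
    return None


# Suites advertised in the Client Hello, in the client's order
client_hello_suites = [
    "TLS_ECDHE_RSA_WITH_AES_128_GCM_SHA256",
    "TLS_ECDHE_RSA_WITH_CHACHA20_POLY1305_SHA256",
    "TLS_RSA_WITH_AES_128_GCM_SHA256",
]
# Suites the server is configured to accept, in the server's order
server_suites = [
    "TLS_ECDHE_RSA_WITH_CHACHA20_POLY1305_SHA256",
    "TLS_ECDHE_RSA_WITH_AES_128_GCM_SHA256",
]
print(choose_cipher_suite(client_hello_suites, server_suites))
# TLS_ECDHE_RSA_WITH_CHACHA20_POLY1305_SHA256
```

Because the client cannot know the server’s list in advance, TLS 1.2 has to spend this whole round trip on discovery before any key material is exchanged.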

The second round trip lets the client and server securely exchange the secret needed to encrypt and decrypt the application data that follows. This is typically accomplished with public key cryptography, in which the server holds a public-private key pair. During the TLS 1.2 handshake, the server sends its public key in its certificate. In an RSA key exchange, the client generates a pre-master secret, encrypts it with the server’s public key, and sends it over the network, so that only the server can recover it; both sides then derive the same master secret from it. This additional round trip allows the client and server to use the same key for encrypting and decrypting application data (symmetric key encryption). This is highly desirable for performance: symmetric encryption is over 250 times faster than asymmetric encryption.
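The RSA key exchange described above can be illustrated with textbook RSA on tiny numbers. This is a deliberately insecure toy: real TLS 1.2 uses 2048-bit or larger keys, a 48-byte pre-master secret with PKCS#1 v1.5 padding, and the TLS PRF rather than the single SHA-256 hash used here as a stand-in. The helper `derive_session_key` is hypothetical.

```python
import hashlib
import secrets

# Toy RSA key pair from tiny textbook primes. INSECURE: illustration only.
P, Q = 61, 53
N = P * Q                           # public modulus (3233), shared via the server certificate
E = 17                              # public exponent, also in the certificate
D = pow(E, -1, (P - 1) * (Q - 1))   # private exponent, known only to the server (Python 3.8+)


def derive_session_key(pre_master: int, client_random: bytes, server_random: bytes) -> bytes:
    """Stand-in for the TLS 1.2 PRF: hash the shared secret with both hello randoms."""
    return hashlib.sha256(pre_master.to_bytes(2, "big") + client_random + server_random).digest()


# First round trip: random values carried in the Client Hello and Server Hello
client_random = secrets.token_bytes(32)
server_random = secrets.token_bytes(32)

# Second round trip: the client picks a secret and encrypts it with the server's public key
pre_master = secrets.randbelow(N - 2) + 2   # textbook RSA requires a value smaller than N
encrypted = pow(pre_master, E, N)           # only this ciphertext crosses the network

# The server recovers the secret with its private key
recovered = pow(encrypted, D, N)

# Both sides derive the same symmetric key for the bulk data transfer
client_key = derive_session_key(pre_master, client_random, server_random)
server_key = derive_session_key(recovered, client_random, server_random)
print(client_key == server_key)  # True
```

The expensive asymmetric operation happens once per handshake; everything after that uses the fast symmetric key both sides derived.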

Improvements in TLS 1.3

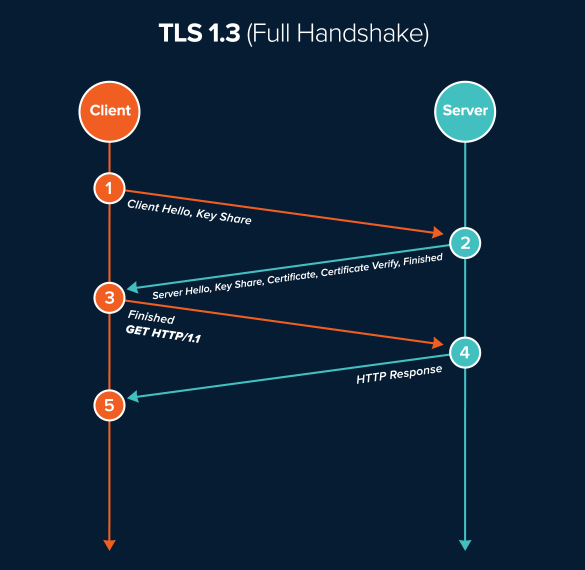

In TLS 1.3 (expected to ship with Chrome version 70), the handshake for a new session is cut down to just one round trip, halving SSL negotiation time. The condensed handshake is possible mainly because the client guesses which key exchange option the server is likely to use. The client can then send its key share (its half of an ephemeral Diffie-Hellman exchange) in the very first message, so the server can compute the shared secret as soon as it receives the Client Hello. If the client guesses the server’s capability wrong, which should not happen often, the server replies with a HelloRetryRequest indicating an acceptable key share, resulting in an additional round trip.
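The guess-and-retry behavior can be modeled as a small decision function. This is a simplified sketch: it assumes the server accepts any matching key share (a real server may still request a group it prefers), and the function name is hypothetical. The group names `x25519`, `secp256r1`, and `secp384r1` are real TLS named groups.

```python
from typing import Sequence


def tls13_full_handshake_round_trips(
    client_key_share_groups: Sequence[str],
    client_supported_groups: Sequence[str],
    server_supported_groups: Sequence[str],
) -> int:
    """Round trips for a new TLS 1.3 session in this simplified model.

    The client guesses which groups the server accepts and sends a key share for
    each guess in its Client Hello. A correct guess completes the handshake in one
    round trip; otherwise, if the server supports another group the client listed,
    it sends a HelloRetryRequest and the handshake takes two.
    """
    server = set(server_supported_groups)
    if any(group in server for group in client_key_share_groups):
        return 1
    if any(group in server for group in client_supported_groups):
        return 2  # HelloRetryRequest costs one extra round trip
    raise ValueError("no common group: the handshake fails")


client_groups = ["x25519", "secp256r1", "secp384r1"]  # advertised in supported_groups
print(tls13_full_handshake_round_trips(["x25519"], client_groups, ["x25519", "secp256r1"]))  # 1
print(tls13_full_handshake_round_trips(["x25519"], client_groups, ["secp384r1"]))  # 2
```

Clients typically send a share only for their most likely group(s), since every extra key share makes the Client Hello larger; the retry path is the price of a wrong guess.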

TLS Session Resumption

TLS session resumption makes the handshake more efficient. Rather than negotiating every session from scratch, the client attempts to reuse a previously negotiated SSL session. In TLS 1.2, session resumption is accomplished with session IDs or session tickets that identify a previously negotiated session. This abbreviated handshake completes the negotiation in one round trip.
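Session-ID resumption amounts to a server-side cache lookup. The class below is a hypothetical sketch, not any server’s actual implementation: a full handshake stores a master secret under a fresh 32-byte session ID, and a Client Hello that presents a known ID gets the abbreviated handshake. The random 48-byte value stands in for the master secret a real handshake would derive.

```python
import secrets
from typing import Dict, Optional, Tuple


class SessionIdCache:
    """Hypothetical server-side cache for TLS 1.2 session-ID resumption."""

    def __init__(self) -> None:
        self._sessions: Dict[bytes, bytes] = {}

    def handshake(self, offered_session_id: Optional[bytes]) -> Tuple[bytes, int]:
        """Return (session_id, round_trips) for one connection attempt."""
        if offered_session_id is not None and offered_session_id in self._sessions:
            # Abbreviated handshake: reuse the cached master secret
            return offered_session_id, 1
        # Full handshake: a new session ID and a placeholder 48-byte master secret
        session_id = secrets.token_bytes(32)
        self._sessions[session_id] = secrets.token_bytes(48)
        return session_id, 2


cache = SessionIdCache()
sid, first_rtts = cache.handshake(None)   # first visit: full handshake
_, resumed_rtts = cache.handshake(sid)    # returning client: abbreviated handshake
print(first_rtts, resumed_rtts)  # 2 1
```

Session tickets move this state to the client instead (the server encrypts the session state into the ticket), which avoids a shared cache across server instances but keeps the same one-round-trip cost.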

According to Cloudflare, 40% of TLS sessions across its network are resumptions of existing sessions, so a significant portion of web traffic stands to benefit from this accelerated negotiation.

While faster than establishing a new TLS session, resuming an existing one still incurs a one-round-trip latency “cost”. TLS 1.3 improves on this with 0-RTT session resumption, which lets the client send encrypted application data in its very first flight, without waiting for the handshake to complete. To do this, the client not only assumes the key sharing option but also reuses a Pre-Shared Key (PSK) from an earlier session; this single PSK-based exchange replaces the session ID and session ticket mechanisms of earlier TLS versions. The approach was primarily inspired by the QUIC protocol developed by Google.
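The handshake modes discussed so far can be compared side by side. The sketch below counts only the round trips spent on the TLS handshake before the client can send application data, ignoring DNS, TCP, and HelloRetryRequest. The weighted average applies Cloudflare’s 40% resumption figure under a loud assumption: that every resumption uses the faster resumption mode, which will not hold for all real traffic.

```python
# Handshake round trips before the client can send application data.
# Simplified model: ignores DNS, TCP, and HelloRetryRequest.
HANDSHAKE_ROUND_TRIPS = {
    "tls12_full": 2,
    "tls12_resumed": 1,   # session ID or session ticket
    "tls13_full": 1,
    "tls13_0rtt": 0,      # PSK resumption with early data
}


def handshake_delay_ms(mode: str, rtt_ms: float) -> float:
    """Handshake latency added before application data can be sent."""
    return HANDSHAKE_ROUND_TRIPS[mode] * rtt_ms


def average_handshake_round_trips(resumed_fraction: float, full_mode: str, resumed_mode: str) -> float:
    """Expected handshake round trips when a fraction of connections are resumptions."""
    return ((1 - resumed_fraction) * HANDSHAKE_ROUND_TRIPS[full_mode]
            + resumed_fraction * HANDSHAKE_ROUND_TRIPS[resumed_mode])


for mode in HANDSHAKE_ROUND_TRIPS:
    print(f"{mode}: {handshake_delay_ms(mode, 150)} ms at a 150 ms RTT")

# Assumption: 40% of sessions are resumptions, and all of them use the resumption mode
print(f"{average_handshake_round_trips(0.4, 'tls12_full', 'tls12_resumed'):.1f}")  # 1.6
print(f"{average_handshake_round_trips(0.4, 'tls13_full', 'tls13_0rtt'):.1f}")     # 0.6
```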

0-RTT Impact On Security and SD-WAN

However, 0-RTT session resumption comes with its own challenges. Since 0-RTT data is sent before the client and server have interacted on the new connection, anyone who captures your encrypted 0-RTT packet can replay it to the server, pretending to be you. This can have a major security impact, especially for e-commerce and digital banking. The final approved standard for TLS 1.3 (RFC 8446) recommends best practices to counter replay attacks, including allowing 0-RTT resumption only for idempotent requests such as HTTP GETs.
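A server-side policy combining two common replay mitigations might look like the sketch below. Everything here is an assumption for illustration: the `EarlyDataGate` class and its return strings are hypothetical, the safe-method set is one reasonable choice, and status 425 Too Early comes from RFC 8470 (the HTTP profile for early data), not from RFC 8446 itself.

```python
from typing import Set

SAFE_METHODS = frozenset({"GET", "HEAD", "OPTIONS"})  # idempotent, read-only HTTP methods


class EarlyDataGate:
    """Hypothetical gate for HTTP requests that arrive as TLS 1.3 0-RTT (early) data."""

    def __init__(self) -> None:
        self._seen_tickets: Set[bytes] = set()

    def decide(self, ticket: bytes, method: str) -> str:
        # Single-use tickets: a repeated ticket is treated as a replay, so its early
        # data is rejected and the client must resend after the full handshake.
        if ticket in self._seen_tickets:
            return "reject_early_data"
        self._seen_tickets.add(ticket)
        # Only idempotent requests are processed from early data; anything else is
        # answered with HTTP 425 Too Early and retried after the handshake.
        if method.upper() not in SAFE_METHODS:
            return "425_too_early"
        return "process"


gate = EarlyDataGate()
print(gate.decide(b"ticket-1", "GET"))   # process
print(gate.decide(b"ticket-1", "GET"))   # reject_early_data (replayed ticket)
print(gate.decide(b"ticket-2", "POST"))  # 425_too_early
```

In a deployment with many servers behind a load balancer, the set of seen tickets would have to be shared across all of them for the single-use check to be effective, which is one reason many operators restrict 0-RTT to safe requests instead.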

TLS 1.3 also has a potential impact on SD-WAN implementations that rely on Server Name Indication (SNI) policies and handshake inspection to route traffic to the desired WAN circuit. RFC 8446 still sends the SNI in cleartext in the Client Hello, including on session resumption, so SNI-based policies keep working; however, TLS 1.3 encrypts the rest of the handshake after the Server Hello, including the server certificate, so SD-WAN routers can no longer “read” certificate details to classify and route traffic.

A Step Forward

Overall, TLS 1.3 should eliminate the last few points of friction that keep web admins from embracing TLS. Along with Google Chrome’s move to mark all non-TLS sites as “Not Secure”, we hope this steers the Internet toward a more secure future.

Curious about how SSL negotiation factors into your web performance? Start your free 15-day trial of ThousandEyes and see within minutes.