On June 12th, a major BGP route leak occurred with wide geographic impacts across dozens of important internet services. Because Internet routing is determined in a distributed fashion (unlike DNS), the Border Gateway Protocol (BGP) exists to communicate routing information between the networks of the Internet. Networks, called Autonomous Systems (AS), advertise routes among one another, in the process learning the route that traverses the fewest networks (the AS Path). Because connections between networks change due to both physical links and commercial relationships, networks are constantly learning new BGP advertisements and passing them along to their neighboring networks.
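As a rough illustration of the "fewest networks" rule described above, here is a minimal sketch in Python. The function name and the AS numbers are hypothetical (the AS numbers come from the range reserved for documentation), and real BGP best-path selection weighs other attributes, such as local preference, before it compares AS Path length.

```python
def shortest_as_path(advertisements):
    """Return the advertised AS Path that traverses the fewest networks.

    Each advertisement is a list of AS numbers, nearest network first and
    the origin network last. Ties go to the first path seen.
    """
    return min(advertisements, key=len)


# Hypothetical advertisements for a single prefix (documentation AS numbers).
paths = [
    [64500, 64501, 64502],  # three networks to reach the origin AS 64502
    [64510, 64502],         # two networks to reach the same origin
]

best = shortest_as_path(paths)
print(best)
```

Under this simplified rule, the two-network path wins.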

June 12th Route Leak from Telekom Malaysia

At 1:43 am PDT (8:43 UTC), Telekom Malaysia (AS 4788) announced a large portion of the global routing table, which had the effect of routing traffic destined for other networks to Telekom Malaysia instead. This is what is known as a route leak, or an inadvertent and incorrect advertisement of routes by an Autonomous System.

Making matters worse, the Level 3 / Global Crossing network (AS 3549) accepted these routes and advertised them to Level 3’s peers. New traffic, following these routes into Level 3 and Telekom Malaysia, inundated the Level 3 network and major peering points. And because Level 3 is one of the largest Tier 1 networks, the impact extended around the world. The impact on the Level 3 network was severe, with high levels of packet loss and terminal routes in POPs including Amsterdam, Chicago, Denver, Frankfurt, Houston, Las Vegas, London, Los Angeles, Miami, Minneapolis, San Jose, Seattle, and Washington DC. By 3:45 am PDT (10:45 UTC), two hours later, Level 3 stopped accepting the routes from Telekom Malaysia and service began returning to normal.
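One way to picture what a leaked route looks like from the outside is an AS Path in which AS 3549 hands traffic to AS 4788 on the way to some other network. The sketch below is only an illustration under that assumption: the function name and sample paths are hypothetical, the surrounding AS numbers are from the documentation range, and it is not a description of how any monitoring product detects leaks.

```python
LEAKER = 4788      # Telekom Malaysia, per the text
PROPAGATOR = 3549  # Level 3 / Global Crossing, per the text


def passes_through_leak(as_path):
    """True if PROPAGATOR hands off directly to LEAKER mid-path.

    as_path lists AS numbers from the nearest network to the origin.
    The final entry (the origin) is excluded from the second position of
    each pair, so routes that legitimately originate at LEAKER are not flagged.
    """
    pairs = zip(as_path, as_path[1:-1])
    return any(a == PROPAGATOR and b == LEAKER for a, b in pairs)


# Hypothetical AS Paths; 64496 and 64511 are documentation AS numbers.
leaked = [64496, 3549, 4788, 64511]  # transits AS 4788 to reach another origin
normal = [64496, 3549, 64511]        # no detour
own_route = [64496, 3549, 4788]      # AS 4788 is the origin, which is expected

for path in (leaked, normal, own_route):
    print(path, passes_through_leak(path))
```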

This leak affected a large number of prefixes (ranges of IP addresses) belonging to internet services including Google, Microsoft, LinkedIn, AOL, Reddit and Dow Jones. And because routing affects all traffic to a given IP address block, the outages and performance degradation were typically widespread, knocking out everything from SaaS applications to social media websites and online banking applications.
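To make the point about address blocks concrete, the following sketch uses Python's standard `ipaddress` module to check whether a destination falls inside any leaked prefix. The prefixes and addresses are placeholders from the ranges reserved for documentation, not the prefixes involved in this event, and the function name is hypothetical.

```python
import ipaddress


def is_affected(address, leaked_prefixes):
    """True if the address falls inside any of the leaked prefixes."""
    ip = ipaddress.ip_address(address)
    return any(ip in ipaddress.ip_network(prefix) for prefix in leaked_prefixes)


# Placeholder prefixes (documentation ranges), standing in for leaked routes.
leaked_prefixes = ["198.51.100.0/24", "203.0.113.0/24"]

# Every service hosted inside a leaked prefix is affected, whatever it runs.
print(is_affected("203.0.113.7", leaked_prefixes))
print(is_affected("192.0.2.1", leaked_prefixes))
```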

Google: Taking a Detour

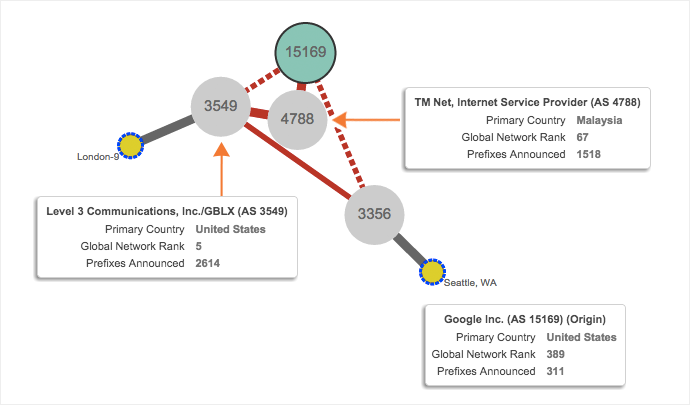

The route leak affected a range of Google services, including Snapchat, Google Apps and Google’s ad network. They share many of the same prefixes that were impacted, including dozens of /14s, /16s, /18s and /24s. Customers of Level 3 saw routes that now included Telekom Malaysia (AS 4788). Figure 1 shows the AS Path, or sequence of networks, that some users in London and Seattle saw when accessing Google’s network. However, since Google peers with many networks around the world, this AS Path was longer than the direct path to Google that those networks already had, so it did not seriously impact performance. Follow along with the full data set for Google’s ad network here.

Figure 1: AS Path to Google through Telekom Malaysia (AS 4788) and Level 3 GLBX (AS 3549) at 1:43 am PDT (8:43 UTC) on June 12th.

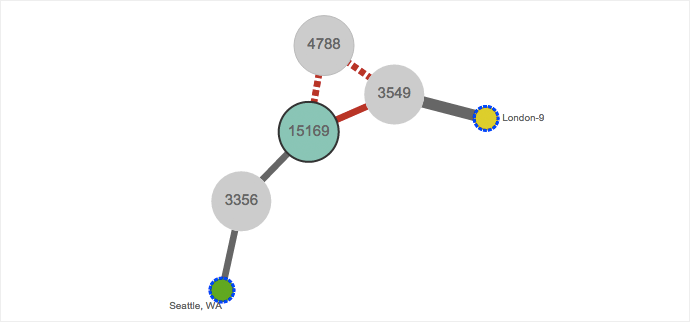

Figure 2 shows routing returning to normal, but only after more than two hours, once Level 3 stopped advertising routes via AS 4788.

Capital One: Collateral Damage

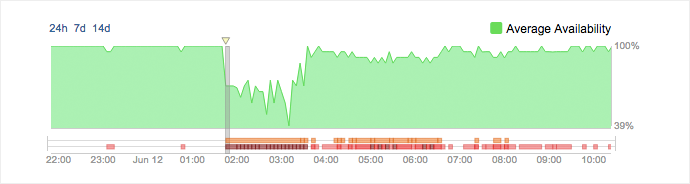

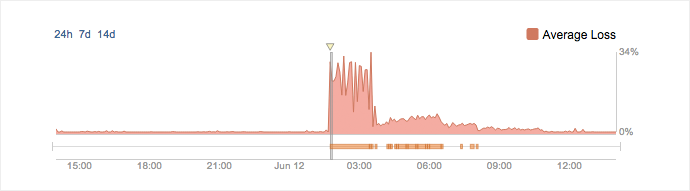

Even for services where routing did not change, there were large disruptions. Capital One’s website, part of ING Direct’s network (AS 23551), has Level 3 as a primary ISP. Because of this, congestion in the Level 3 network prevented many Capital One users around the United States from accessing the service. Figures 3 and 4 show availability and packet loss to Capital One’s homepage. Follow the Capital One data set and visualizations here.

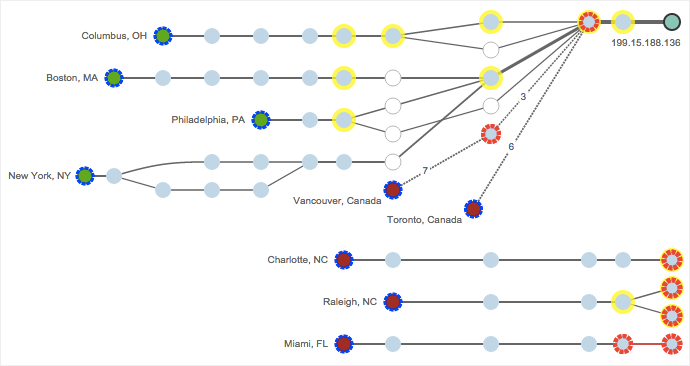

Even though Capital One’s routes did not change, congestion in major Level 3 POPs around the world made it slow or impossible to access their network. Figure 5 shows congestion through the Philadelphia POP (top red circle) as well as traffic that never made it through the Level 3 Charlotte and Washington DC POPs (terminal routes at the bottom).

Figure 5: Congestion in Philadelphia and terminal routes in Charlotte and Washington DC.

Staying on Top of Route Leaks and Outages

For more on route leaks, check out the post on Finding and Diagnosing BGP Route Leaks, which discusses additional examples. Also see the post on Proactive BGP Alerting to learn how you can easily detect reachability issues, route changes and prefix changes. If you’re interested in monitoring routes to your network, detecting issues in upstream ISPs and setting up precision-guided alerts, sign up for a free trial of ThousandEyes today.