The summer of 2018 at ThousandEyes has been a busy one, with trade shows, bi-weekly product releases and our very own networking event, ThousandEyes Connect. ThousandEyes Connect is a half-day live event showcasing tech talks from IT leaders on tackling tough cloud challenges. This year's Santa Clara event focused on the public cloud and how to manage and operate in a multi-cloud environment. Atlassian, the mastermind behind Jira and Confluence, delivered an engaging talk on how to manage the performance of critical applications in AWS. In today’s blog post, we summarize Atlassian’s presentation.

Benjamin McAlary has been with Atlassian for over four years and is responsible for building and maintaining the massive networking infrastructure that supports more than 15 distinct products, from Jira to Confluence to Trello. Ben kicked off the session with an overview of the Atlassian network, its evolution and the role of ThousandEyes in managing it.

Atlassian's Network

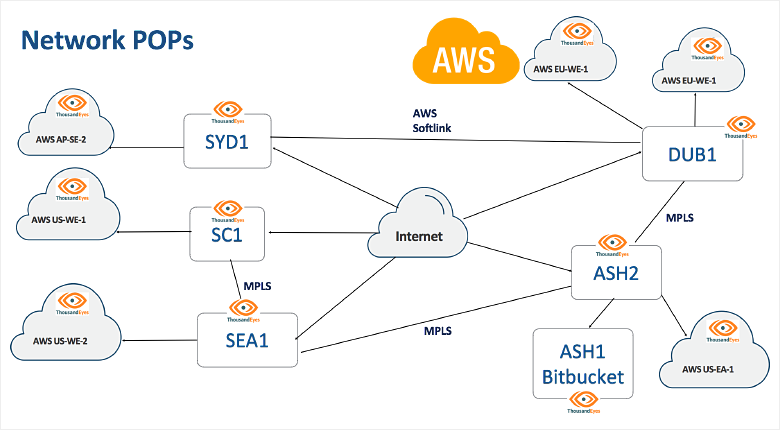

A few years ago, Atlassian’s network was quite simple. Two major data centers hosting their customer-facing SaaS apps were connected through a single MPLS backbone link with basic static routing. But a growing customer base meant that the networking team had to reconsider this architecture and build one that was more robust, redundant and global.

Before getting into the details of how Atlassian distributes its workloads in the public cloud and builds a reliable network that is heavily dependent on AWS, Ben addressed the challenging requirements of building a network. He says, “Application and micro-services teams expect a fully meshed connectivity to APIs and services, with ubiquitous availability, absolutely minimal latency and maximum possible bandwidth. At the same time, the business wants all of this at the most optimal cost.” As Ben articulated these nearly impossible characteristics of what constitutes a “good network”, you could imagine yourself in the shoes of a network engineer and cringe. Add to that the outages, P1 issues and troubleshooting war rooms: it must be hard being a network engineer in today’s cloud world!

So how does the Atlassian network look today? Atlassian relies on AWS, the most popular public cloud (IaaS) provider, to host their applications, and consumes over 200 AWS VPCs across the world. Most of Atlassian’s services in the public cloud are front-ended by an edge network of servers hosted in privately managed Points-of-Presence (PoPs). These PoPs host content as close to the customer as possible and terminate TLS and TCP connections nearby, which shortens the handshakes and optimizes service delivery.

Network Monitoring and Visibility in a Distributed Cloud Environment

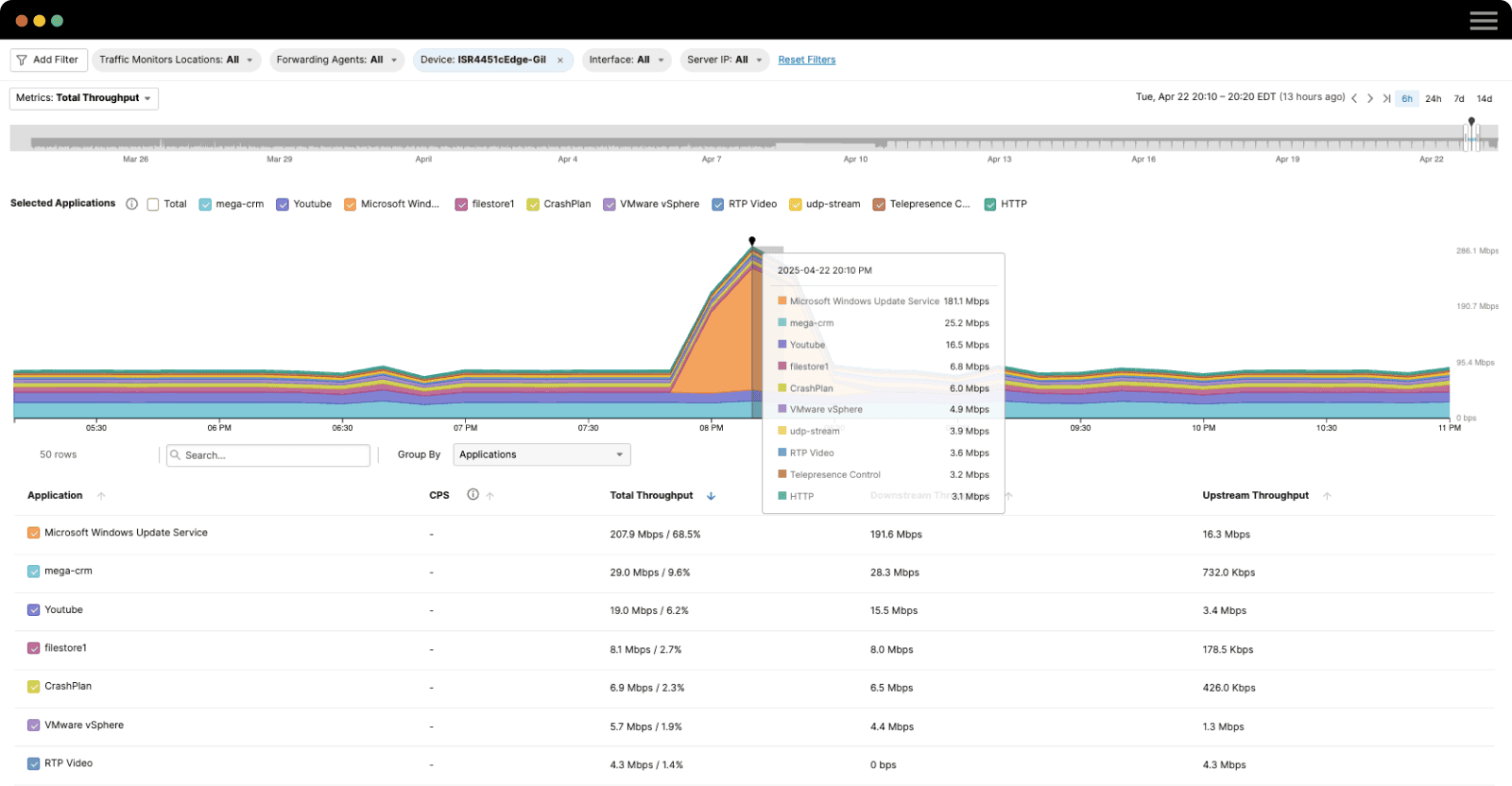

As one would expect, an environment as complex and distributed as Atlassian’s requires a lot of monitoring solutions. Ben mentioned that before ThousandEyes, they had Splunk processing gigabytes of logs, a heavily modified Nagios instance doing SNMP, as well as sFlow, NetFlow and Pingdom. But in spite of having the who’s who of network monitoring, there was a gap in network visibility. As Ben put it, “Tools like Pingdom would tell us that a service is either up or down but nothing more than that. We had a pretty good monitoring coverage but we were missing actual end-to-end visibility, the kind ThousandEyes provides.”

ThousandEyes Deployment

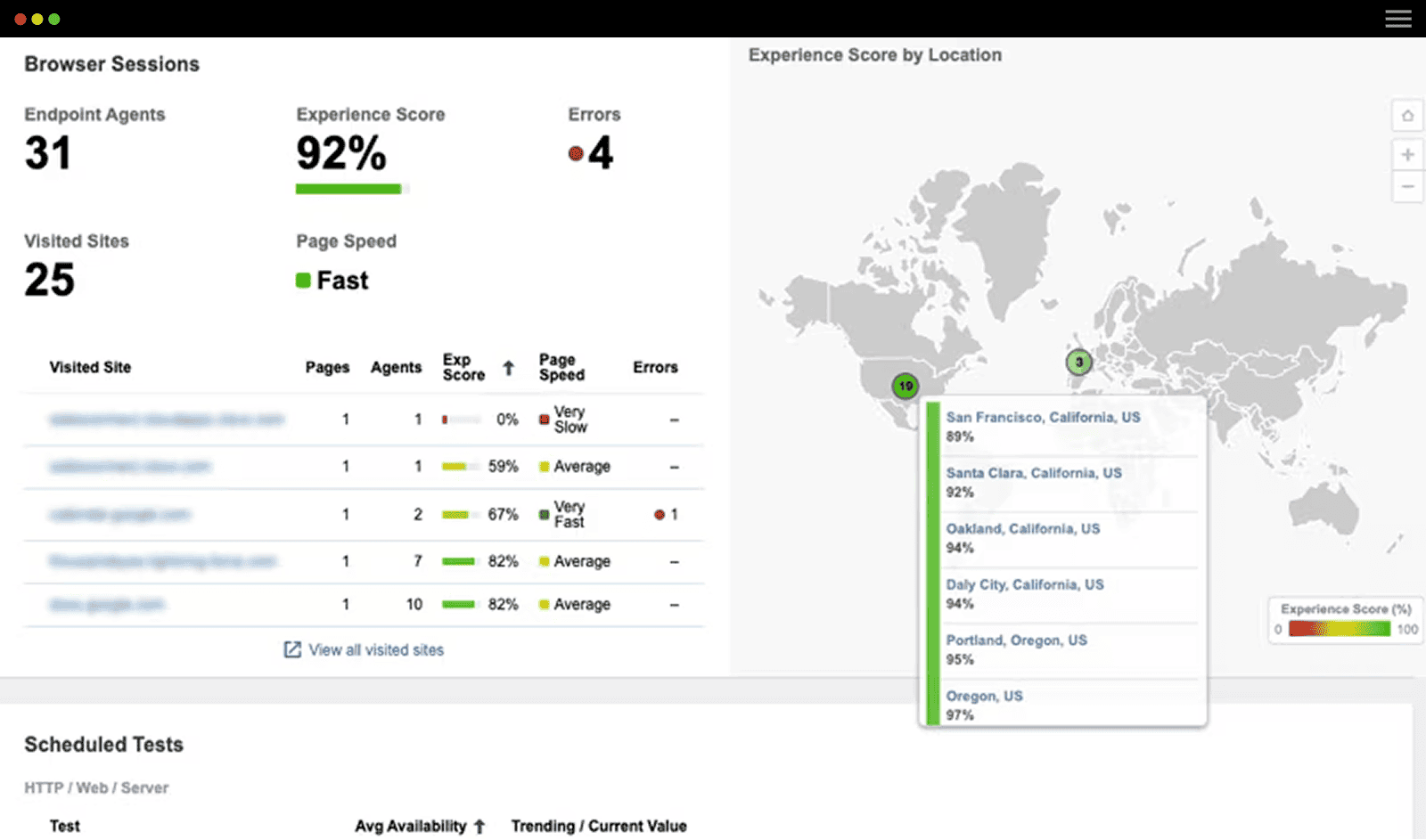

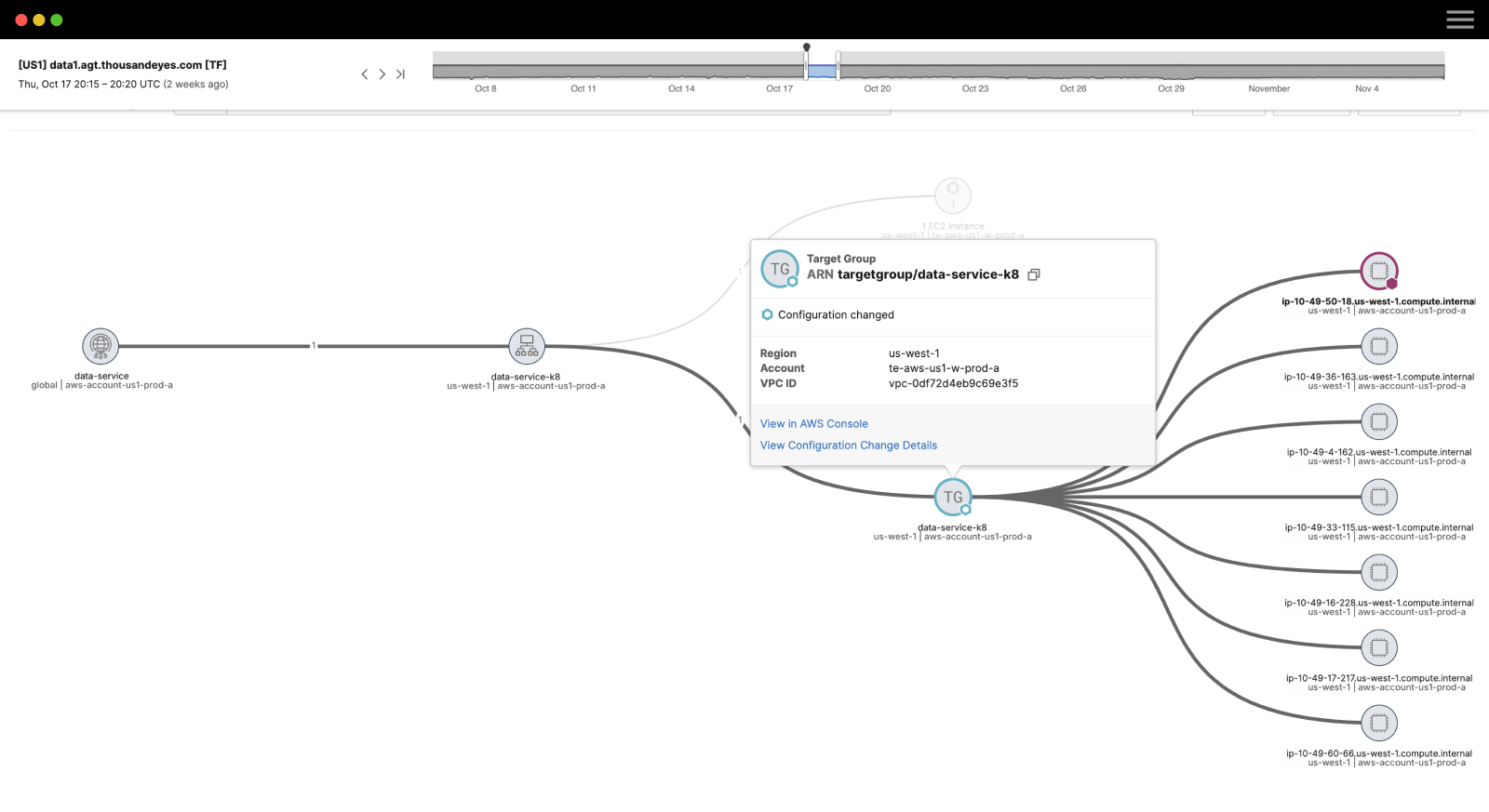

Atlassian deploys ThousandEyes Enterprise Agents across all their office locations, within their data centers and PoPs, and in most of their AWS VPCs. Enterprise Agents come in a variety of form factors, such as virtual appliances, Linux packages and Docker containers, which gives Atlassian deployment flexibility. The global coverage of agents within Atlassian’s network now gives them comprehensive visibility into their MPLS backbone, Internet links, load balancers and connectivity into AWS VPCs.

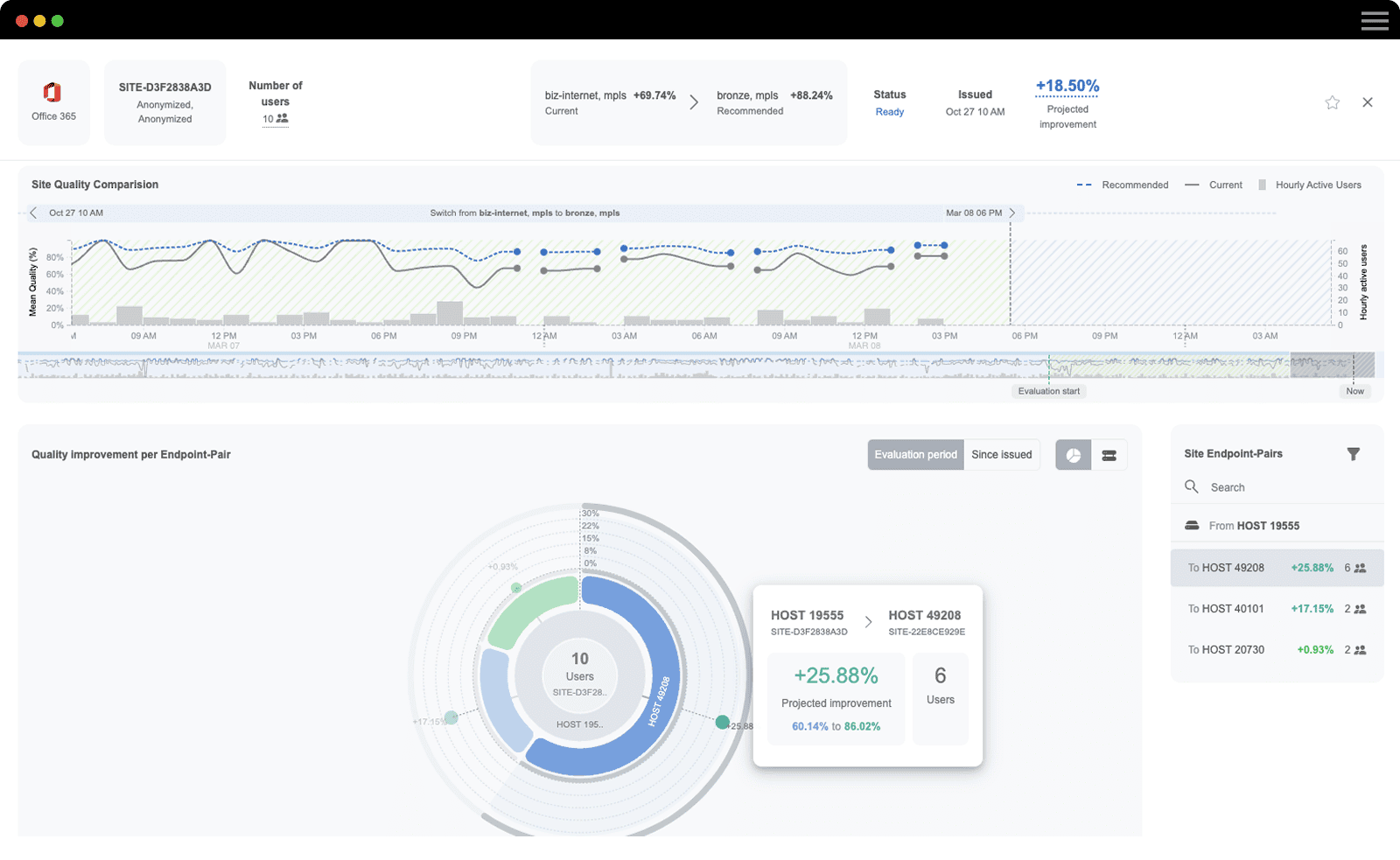

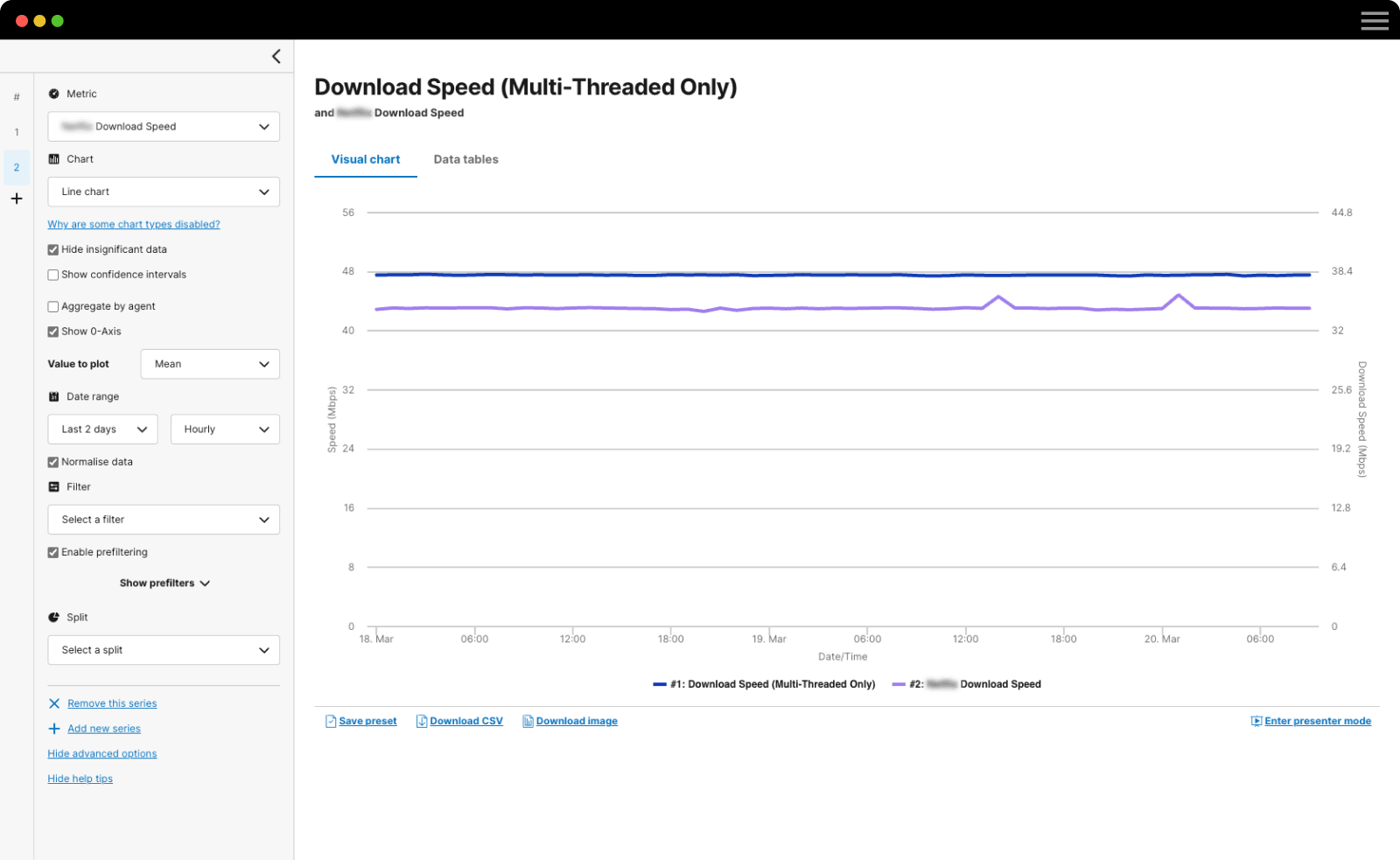

Atlassian uses ThousandEyes not just to monitor their infrastructure and services but also to plan their network architecture. Ben dove into the details: “We dropped a ThousandEyes agent into every Availability Zone (AZ) in every single AWS region, and we are now able to gather metrics like network latency between the AZs. This information is really important to our service teams, who write our applications to optimize latency between services.”
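To make the inter-AZ idea concrete, here is a minimal sketch of how a service team might turn agent-to-agent latency samples into a lookup table. It is not Atlassian's tooling: the `(source_az, destination_az, latency_ms)` tuple layout and both function names are assumptions made purely for illustration.

```python
from collections import defaultdict
from statistics import median


def az_latency_matrix(samples):
    """Collapse raw samples into a median latency per (source AZ, destination AZ).

    `samples` is an iterable of (source_az, destination_az, latency_ms) tuples.
    This layout is hypothetical; adapt it to however your agents report results.
    """
    by_pair = defaultdict(list)
    for src, dst, latency_ms in samples:
        by_pair[(src, dst)].append(latency_ms)
    # The median is less sensitive to one-off spikes than the mean.
    return {pair: median(values) for pair, values in by_pair.items()}


def closest_az(matrix, src):
    """Return the destination AZ with the lowest median latency from `src`.

    Returns None when the matrix holds no measurements originating at `src`.
    """
    candidates = {
        dst: latency_ms
        for (s, dst), latency_ms in matrix.items()
        if s == src and dst != src
    }
    if not candidates:
        return None
    return min(candidates, key=candidates.get)
```

A team could call `closest_az(matrix, "some-az")` when deciding where to place a latency-sensitive dependency, treating the answer as one input among several rather than a final placement decision.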

Ben’s team also gets more out of ThousandEyes by using it to validate network design and measure the impact of network optimizations. For instance, after shifting to AWS’ new global routing policy when using Direct Connect, Atlassian noticed significant latency improvements. Ben says, “Using the ThousandEyes portal, we could immediately see how latency for our customers was improving. We can now share that insight with our business executives to validate that the network is indeed delivering a great return on investment!”

Atlassian leverages the ThousandEyes API to pull network metrics that can then be fed into a common data correlation and processing platform, in their case Datadog. Ben explains, “While the ThousandEyes dashboard can aggregate the metrics quite well, as a company we standardized on Datadog, so there is no reason why you guys necessarily need to do it this way, but this is just how we do it.”
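The talk did not show Atlassian's integration code, so the following is only a rough sketch of the transformation step in such a pipeline. It assumes metric rows carrying `agentName`, `date`, `avgLatency`, `loss` and `jitter` fields (modeled on ThousandEyes network test results; check the API documentation for the exact schema) and emits a payload in the shape Datadog's v1 series submission endpoint accepts. The `thousandeyes.net.*` metric names are hypothetical, and fetching and posting are deliberately left out.

```python
from datetime import datetime, timezone

# Assumed source field -> hypothetical Datadog metric name.
FIELD_TO_METRIC = {
    "avgLatency": "thousandeyes.net.latency_ms",
    "loss": "thousandeyes.net.loss_pct",
    "jitter": "thousandeyes.net.jitter_ms",
}


def to_datadog_series(rows, test_name):
    """Convert ThousandEyes-style metric rows into a Datadog series payload.

    Each row is a dict; `date` is assumed to be "YYYY-MM-DD HH:MM:SS" in UTC.
    Fields that are missing or None are skipped rather than sent as zero.
    """
    series = []
    for row in rows:
        measured_at = datetime.strptime(row["date"], "%Y-%m-%d %H:%M:%S")
        epoch = int(measured_at.replace(tzinfo=timezone.utc).timestamp())
        tags = [f"agent:{row['agentName']}", f"test:{test_name}"]
        for field, metric in FIELD_TO_METRIC.items():
            value = row.get(field)
            if value is None:
                continue
            series.append(
                {
                    "metric": metric,
                    "type": "gauge",
                    "points": [[epoch, float(value)]],
                    "tags": tags,
                }
            )
    return {"series": series}
```

The resulting dictionary can be serialized to JSON and submitted with whichever HTTP client and credentials your environment uses. Tagging by agent and test keeps the per-location detail that makes the data useful once it sits alongside other metrics.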

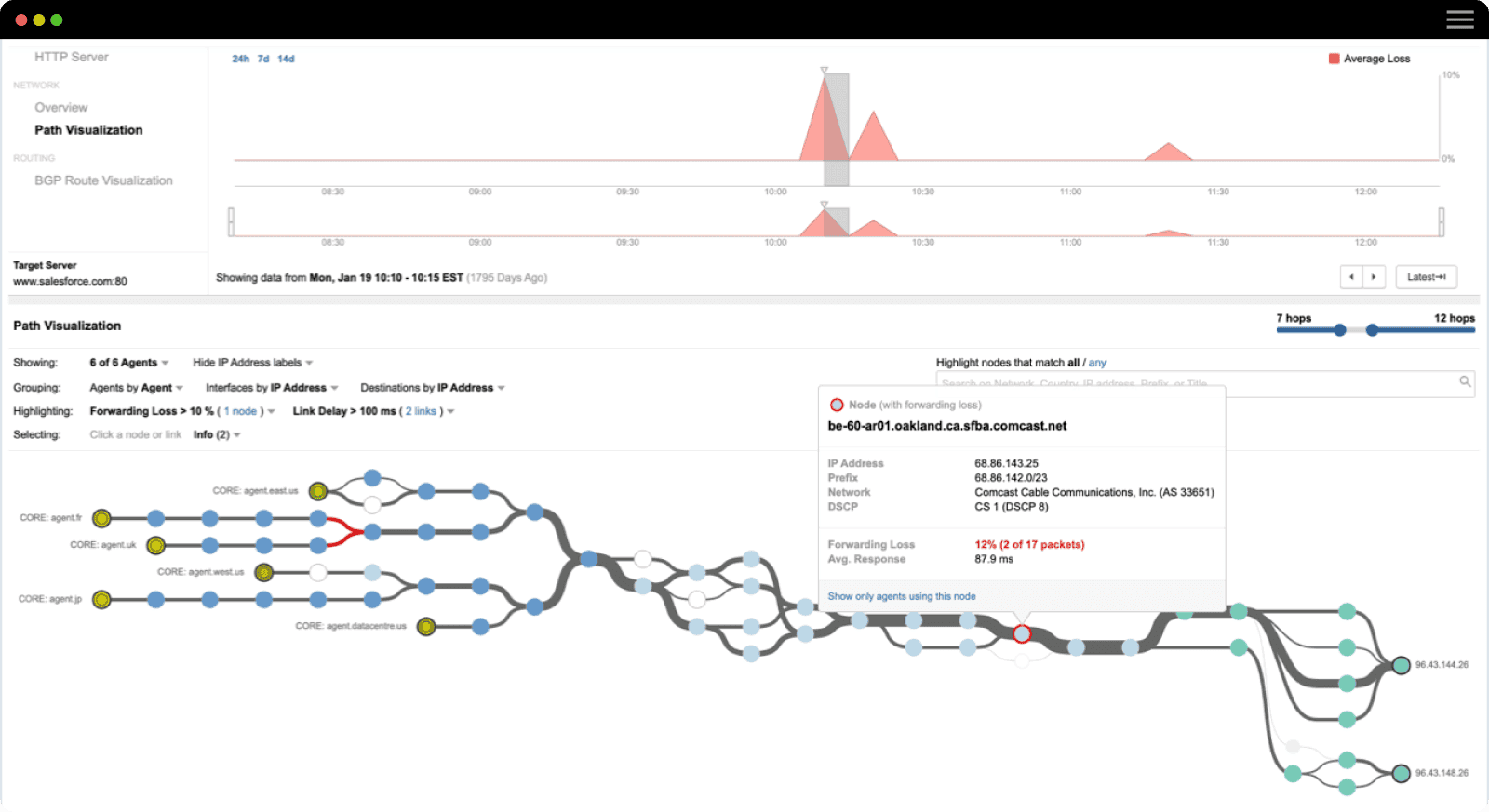

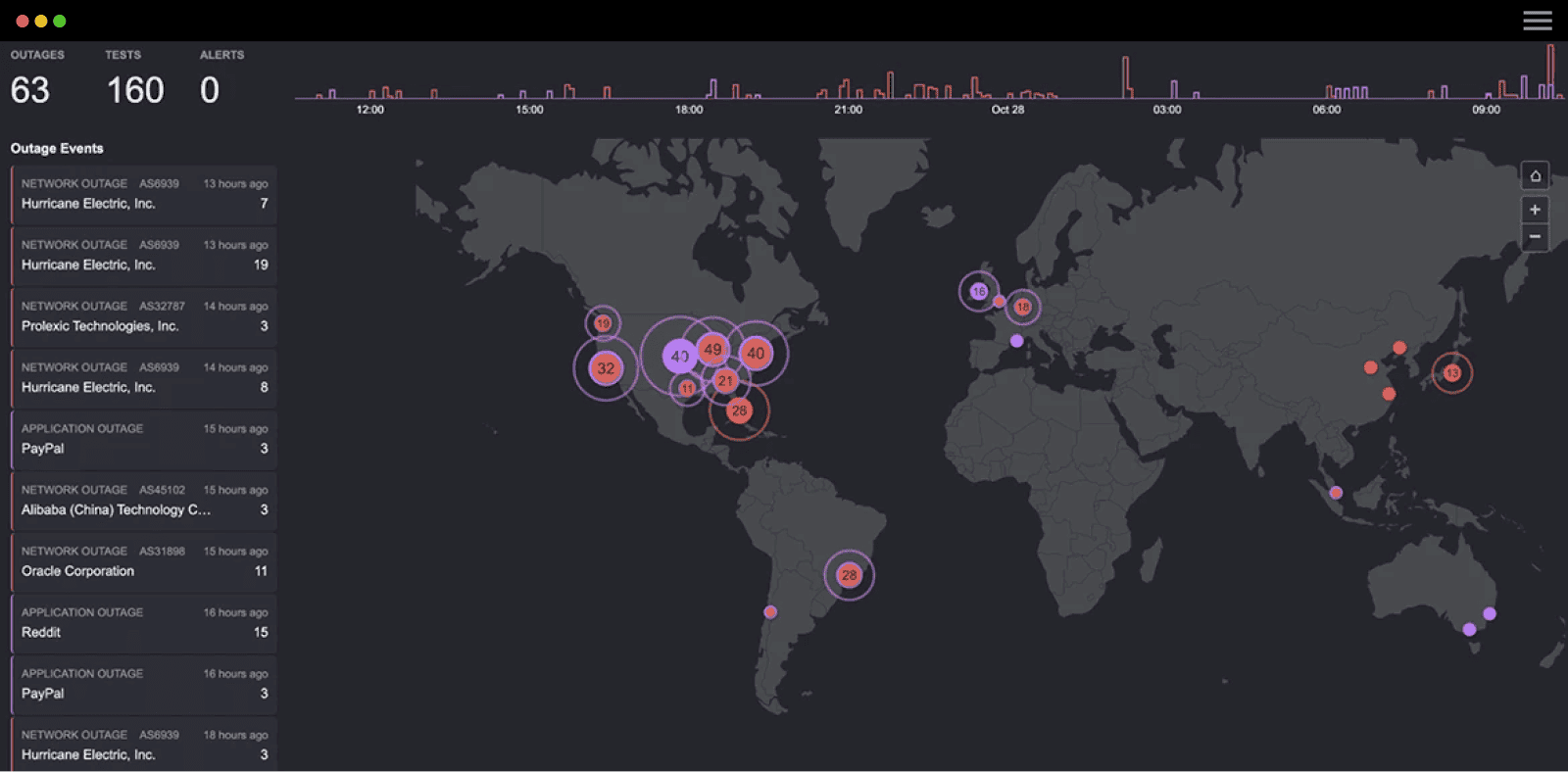

Gaining Continuous Value from Network Intelligence

Atlassian has continued to gain valuable insights from its ThousandEyes deployment. Ben shared examples ranging from identifying a software bug within AWS that impacted Bidirectional Forwarding Detection (BFD) failover times to detecting packet loss within the AWS backbone that was affecting Atlassian customers. The ThousandEyes Path Visualization and snapshot share features were essential to solving these problems. With Path Visualization, you can quickly pinpoint where in the end-to-end network packet loss or increased latency is occurring. The snapshot share feature allows Ben’s team to transparently escalate problems to AWS’ network team and prevent finger-pointing. Ben concluded by highlighting, “This is the nature of cloud technologies. They still are networks. They still need software updates and they are not impervious to failure.” Read more about Atlassian’s experiences in the slide share below.

If you’d like to learn more about how ThousandEyes customers are navigating through their cloud journey, read up on monitoring connectivity to AWS at Intuit and benchmarking service providers for application delivery at PayPal. If you are interested in periodic updates from us and more customer stories, subscribe to our blog and enjoy your weekly dose of ThousandEyes!