This is The Internet Report, where we analyze outages and trends across the Internet through the lens of Cisco ThousandEyes Internet and Cloud Intelligence. I’ll be here every other week, sharing the latest outage numbers and highlighting a few interesting outages. As always, you can read the full analysis below or listen to the podcast for firsthand commentary.

Internet Outages & Trends

This week, we dive into the "why" behind recent service interruptions for two major platforms: Anthropic’s AI assistant, Claude, and the social network X. While headlines often simplify these events as "outages," a closer look at the data reveals a complex web of authentication failures, inference layer issues, and application-level glitches. Join us as we break down the technical nuances of these incidents and review the latest global outage trends.

Read on to learn more.

Claude Disruptions

Claude experienced a series of disruptions in April, but as Mike Hicks points out, this isn't a simple story of a service going down. Anthropic acknowledged three separate events on its status page, on April 8th, 10th, and 13th. Crucially, each incident hit a completely different part of the technology stack.

The April 8th event was specific to the backend of Claude’s Sonnet 4.6 model. The April 10th incident involved the inference layer (the part of the system that generates AI responses) but only affected certain models. Finally, the April 13th event, which garnered the most public attention, was an authentication failure. Users couldn't log in, even though the underlying AI models were functioning as expected. These three incidents, all within six days, represent three entirely different failure domains.

Analyzing the April 13th Authentication Failure

The April 13th incident serves as a prime example of a highly visible but relatively contained event. According to ThousandEyes data, reports of the incident climbed sharply around 11:45 AM EDT and returned to normal levels within an hour. While Anthropic classified it as a "major outage," this label referred to the scope of the impact (no one could log in) rather than the duration.

From a monitoring perspective, ThousandEyes observed elevated HTTP 500 error rates against Claude.ai. This indicated a backend server failure rather than a slow connection. Interestingly, the official status page acknowledgment lagged behind the actual user impact. This is common with internal monitoring built on logs and events; proactive synthetic testing often sees the failure at the same time as the user, providing a "head start" on identification.
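To make the distinction between a backend failure and a slow connection concrete, here is a minimal sketch of how a synthetic HTTP check might be classified. It is not ThousandEyes' implementation; `ProbeResult`, `classify`, `server_error_rate`, and the 3-second slowness threshold are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ProbeResult:
    """One synthetic HTTP check (hypothetical structure)."""
    status: Optional[int]  # HTTP status code, or None if no response arrived
    elapsed_s: float       # seconds until the response, or until giving up


def classify(result: ProbeResult, slow_threshold_s: float = 3.0) -> str:
    """Map a probe result to a coarse failure category."""
    if result.status is None:
        return "no_response"    # timeout or connection failure
    if 500 <= result.status <= 599:
        return "server_error"   # the backend answered, but with a failure
    if 400 <= result.status <= 499:
        return "client_error"
    if result.elapsed_s > slow_threshold_s:
        return "slow"           # a successful response that took too long
    return "ok"


def server_error_rate(results: List[ProbeResult]) -> float:
    """Fraction of probes that came back with a 5xx status."""
    if not results:
        return 0.0
    errors = sum(1 for r in results if classify(r) == "server_error")
    return errors / len(results)
```

A rising `server_error_rate` alongside normal response times would point at the backend rather than the network, which is the pattern described above.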

Architectural Insights from the April 10th Inference Issue

The April 10th incident was perhaps the most technically revealing. During this event, Claude’s most powerful model, Opus, remained healthy while other models hit inference errors. This suggests that Anthropic runs separate serving paths for different model tiers.

By using distinct compute pools or backend infrastructure for Opus, Anthropic protects its most demanding (and likely most profitable) tier from failures in other parts of the environment. The trade-off is that a failure in a lower-tier path can take out those specific models while Opus keeps running. For those monitoring these services, this is a reminder that testing the "front door" isn't enough; coverage must reflect how specific models are consumed.
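As a rough illustration of per-model coverage, the sketch below groups probe outcomes by model and reports which ones exceed a failure threshold. The function name `failing_models`, the 20% threshold, and the model labels in the usage example are assumptions made for illustration, not identifiers from Anthropic or ThousandEyes.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def failing_models(
    outcomes: Iterable[Tuple[str, bool]],
    max_failure_rate: float = 0.2,
) -> List[str]:
    """Return the model labels whose failure rate exceeds the threshold.

    Each outcome is a (model_label, succeeded) pair from one probe.
    """
    totals: Dict[str, int] = defaultdict(int)
    failures: Dict[str, int] = defaultdict(int)
    for model, succeeded in outcomes:
        totals[model] += 1
        if not succeeded:
            failures[model] += 1
    return sorted(
        model for model in totals
        if failures[model] / totals[model] > max_failure_rate
    )


# Hypothetical labels: one healthy tier and two degraded ones.
sample = [
    ("opus", True), ("opus", True),
    ("tier-b", False), ("tier-b", True),
    ("tier-c", False),
]
print(failing_models(sample))  # ['tier-b', 'tier-c']
```

A single aggregate check across all models would report a partial failure here, while the per-model view shows that one serving path is healthy and the others are not.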

The Link Between Deployment Activity and Service Stability

There is a strong hypothesis that these clusters of issues are connected to deployment activity, specifically the rollout of the Sonnet 4.6 model. While Anthropic hasn't confirmed this in a post-mortem, the timing is telling. The April 8th issue was specific to Sonnet 4.6 during its active release window.

In complex distributed systems, even rigorous pre-production testing cannot anticipate every interaction once a model is running under load with real users. These services face a "velocity question": how do you balance fast updates with the reality of shifting load patterns and infrastructure changes? Mike notes that, in his own observations, degradations often cluster when European and US traffic overlap, suggesting that load remains a critical variable layered on top of deployment risks.

The Unique Reliability Challenges of AI Services

Reliability for AI services feels different from traditional cloud outages because it is different. Traditional web services rely on redundancy and elastic, interchangeable compute. If one region fails, you route to another.

AI inference doesn't work that way. The hardware is specialized, expensive, and capacity constrained. You cannot spin up new inference capacity as quickly as you can a standard web server. This creates a "resilience gap." Furthermore, AI services have more distinct functional layers—authentication, inference backends, and model-specific paths—each requiring a different diagnostic lens. We are moving beyond client-server architecture into a dynamic environment where the model itself is a continuously evolving dependency.

X Disruptions

In contrast to the Anthropic story, X (formerly Twitter) has seen a series of smaller disruptions rather than one major event. In a two-week window, incidents occurred on April 2nd, 7th, and 14th. These were degradations rather than total blackouts.

The April 2nd event affected global feeds, the April 7th event saw broader degradation across the US, Europe, and Asia, and the April 14th event was localized to France and Germany. When disruptions cluster this frequently across different geographies and severities, it suggests an underlying instability in the operational environment or the way different layers of the stack interact.

Debunking the Cloudflare Attribution Myth

During the April 2nd incident at X, many reports incorrectly blamed Cloudflare. However, ThousandEyes data showed that Cloudflare’s infrastructure was operational. If a major CDN like Cloudflare fails, we expect to see multiple services go down simultaneously. In this case, the impact was isolated to X.

The data showed that DNS was resolving and routing paths were intact, meaning the failure was at X’s application layer. The "Cloudflare attribution" is often a result of familiarity bias—users name the technology they associate with the platform. This misdiagnosis is dangerous; if you think it’s a CDN issue, you call a third party, but if it’s an application error, you need to look at your own recent deployments or config changes.
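The layer-by-layer reasoning above can be sketched as a small decision function. This is an illustrative simplification, not a description of any product's logic; `LayerChecks`, its fields, and the returned labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class LayerChecks:
    """Outcome of checks at each layer (hypothetical structure)."""
    dns_resolves: bool            # the hostname resolved
    path_reachable: bool          # the network path to the service was intact
    http_ok: bool                 # the service returned a successful response
    other_cdn_customers_ok: bool  # other services on the same CDN were healthy


def fault_domain(checks: LayerChecks) -> str:
    """Walk the layers from the bottom up and name the first one that fails."""
    if not checks.dns_resolves:
        return "dns"
    if not checks.path_reachable:
        return "network"
    if checks.http_ok:
        return "none"
    # DNS and routing are fine, but the service itself returns errors.
    if not checks.other_cdn_customers_ok:
        return "shared_provider"  # several services failing at once
    return "application"          # impact isolated to this one service


# The pattern described for X on April 2nd: DNS and routing intact,
# the CDN's other customers unaffected, the service itself failing.
print(fault_domain(LayerChecks(True, True, False, True)))  # application
```

The ordering matters: only once DNS and routing are ruled out does the question of a shared provider versus the application itself become meaningful.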

What X’s Failure Signatures Reveal About Its Backend

The variation across X’s three incidents is telling. Because the failures look different each time—content serving issues one day, localized connectivity another—it suggests that the problems aren't coming from one single broken component.

Instead, these failures emerge from the complex interactions between different layers of the stack. A platform of this scale is a "living system" where the application might behave in a way that stresses the network, or vice versa. Understanding this interaction model is a critical step in moving from simply knowing "something is wrong" to identifying the specific fault domain.

By the Numbers

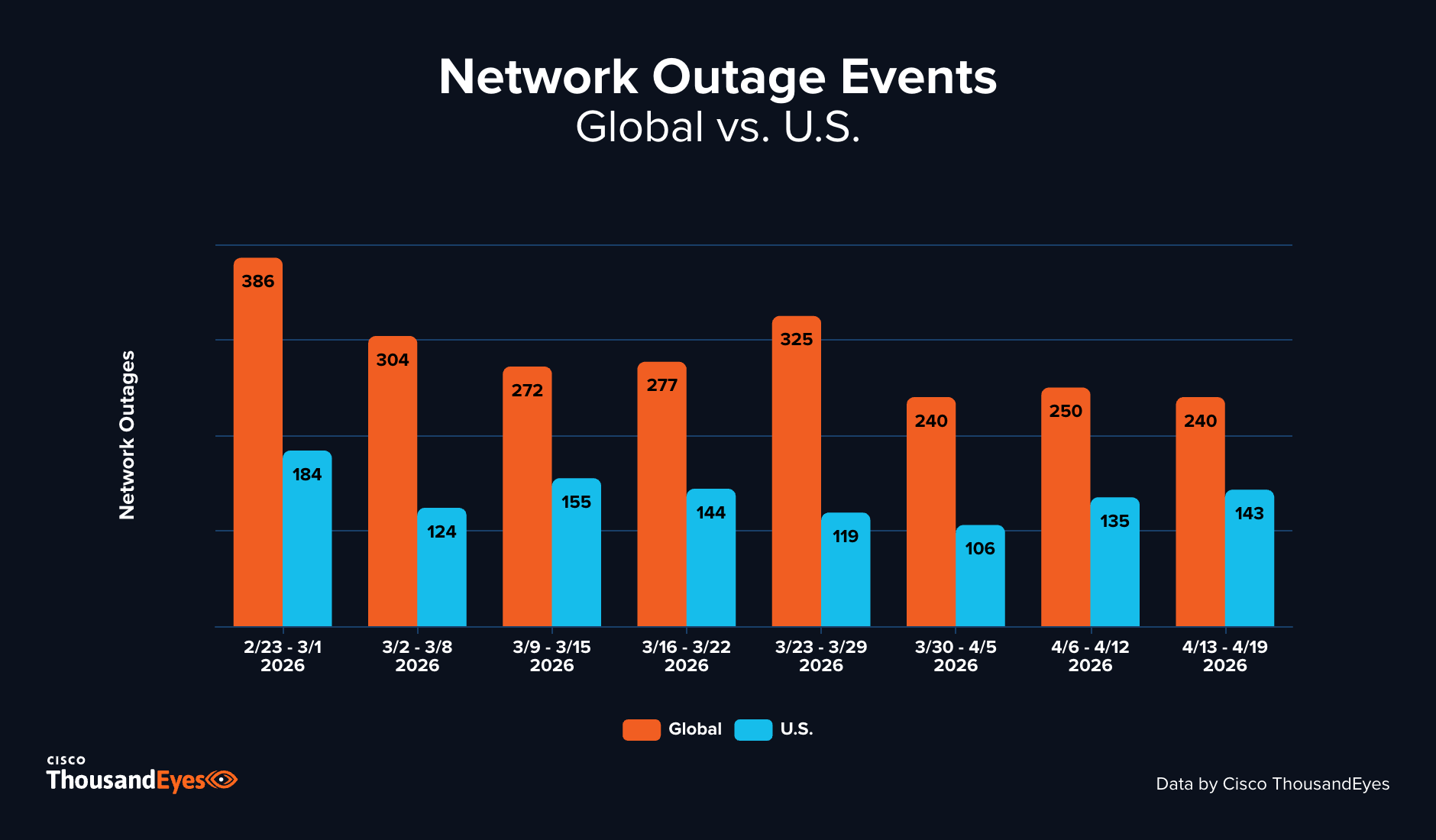

Let’s close by taking our usual look at some of the global trends that ThousandEyes observed across ISPs, cloud service provider networks, collaboration app networks, and edge networks over the two weeks from April 6th to April 19th. Globally, the environment was stable, with outages fluctuating in a narrow band between 240 and 250 per week.

However, the US told a different story. US outages climbed 27% in the first week and another 6% in the second. During this period, the US accounted for 56% of all global outages, well above the typical 45%. While the global network environment appeared settled, the sustained climb in the US is a trend worth watching as we move further into the month.

Global Outages

- From April 6–12, ThousandEyes observed 250 global outages, representing a 4% increase from 240 the prior week (March 30–April 5). The following week of April 13–19 saw outages decline 4%, falling back to 240.

- The two-week period was characterized by relative stability, with outages fluctuating minimally around the 240–250 range. This continued the pattern established in late March, suggesting network operations may have settled into a consistent operational rhythm heading into late April.

U.S. Outages

- The United States saw outages surge to 135 during the week of April 6–12, representing a 27% increase from the previous week's 106. This marked a sharp reversal from the declining trend observed in late March.

- During the week of April 13–19, U.S. outages increased a further 6%, rising to 143. This two-week climb brought U.S. activity back to levels more consistent with mid-March, erasing the declines seen during the March 30–April 5 period.

- Over the two-week period from April 6–19, the United States accounted for approximately 56% of all observed network outages, representing a notably higher proportion than the roughly 45% seen in the preceding weeks.
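For readers who want to check the arithmetic, the week-over-week changes and the U.S. share can be reproduced from the counts quoted above. The helper names `pct_change` and `share` are illustrative, and the share is computed on the assumption that it is the two-week U.S. total divided by the two-week global total.

```python
def pct_change(previous: float, current: float) -> float:
    """Week-over-week change, in percent."""
    return (current - previous) / previous * 100.0


def share(part: float, whole: float) -> float:
    """Portion of the whole, in percent."""
    return part / whole * 100.0


# Weekly outage counts quoted in this section, in order:
# March 30-April 5, April 6-12, April 13-19.
global_weekly = [240, 250, 240]
us_weekly = [106, 135, 143]

for label, series in (("global", global_weekly), ("US", us_weekly)):
    for prev, cur in zip(series, series[1:]):
        print(f"{label}: {prev} -> {cur} ({pct_change(prev, cur):+.1f}%)")

# U.S. share across the two weeks of April 6-19 (assumed definition).
us_share = share(sum(us_weekly[1:]), sum(global_weekly[1:]))
print(f"US share: {us_share:.1f}%")
```

The changes come out at roughly +4.2%, -4.0%, +27.4%, and +5.9%, matching the rounded figures above. Under the assumed definition the share is a little under 57%, close to the approximately 56% quoted.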