When AI Monitors AI

Five years ago, most organizations did not have titles such as “AI Platform Engineer.” Most organizations didn’t have FinOps practices tracking LLM API expenditure by the token. Who recalls worrying about whether a vector database was returning stale embeddings, or whether a model deprecation notice buried in a changelog would silently degrade a customer-facing product?

It’s amazing how things have changed. Today, AI is a board-level concern, and these are operational realities. And because every AI application is, at its foundation, a distributed system, it is quickly becoming an end-to-end networking reality as well. A question enters a browser. An embedding request crosses the Internet to OpenAI. A vector similarity search reaches Pinecone. A completion call travels to Anthropic. The answer returns through the same chain in reverse. Every hop, every DNS resolution, every TLS handshake is a possible failure domain. And every failure domain is potentially invisible to the people who own the AI application, unless someone is watching the network.

In this post, we highlight what happens when the monitoring system itself runs on AI: one that uses Model Context Protocol (MCP) to detect failures, execute diagnostic tests, and produce structured diagnoses. This is automated assurance for AI applications, not as a slogan but as a working system.

Economics-Driven Design: An AI Application as a Monitoring Target

Before diving into architecture, it is worth addressing a question that every engineering leader and FinOps stakeholder will ask: what does it cost to monitor an AI application? The answer depends on how you design it. Every API test that exercises the AI pipeline triggers the full Retrieval-Augmented Generation (RAG) chain (embedding generation, vector search, LLM completion), and each stage consumes tokens. Cost therefore has to be an intentional part of the monitoring architecture.

In our case, the design principle is tiered monitoring based on inference cost. DNS and HTTP tests run at intervals compatible with operational KPI tracking. Direct API tests that call provider endpoints without triggering the application pipeline incur minimal cost, while full pipeline tests carry the real cost. Aligning test intervals to this cost gradient delivers comprehensive coverage without runaway spend. In practice, we modeled three postures:

- Maximum coverage for revenue-critical applications
- Balanced for most production deployments
- Economy for less critical internal tools

For the FinOps leader, this justifies the monitoring budget. For the engineering leader, this framework prevents well-intentioned observability from becoming an uncontrolled cost center. Both represent key factors for solution adoption.
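
The cost gradient behind these postures can be sketched as simple arithmetic. The example below is a minimal illustration, not the model used in this post: the tier names, intervals, and per-run dollar figures are hypothetical placeholders, and the only value taken from the text is the four cloud vantage points.

```python
from dataclasses import dataclass

SECONDS_PER_DAY = 86_400


@dataclass(frozen=True)
class MonitorTier:
    """One monitoring tier. All values used below are illustrative assumptions."""
    name: str
    interval_seconds: int    # how often the test runs from each vantage point
    cost_per_run_usd: float  # hypothetical inference cost of a single run


def monthly_cost(tier: MonitorTier, vantage_points: int = 4, days: int = 30) -> float:
    """Estimated monthly inference spend for one tier across all vantage points."""
    runs = (SECONDS_PER_DAY // tier.interval_seconds) * days * vantage_points
    return runs * tier.cost_per_run_usd


def posture_cost(tiers, vantage_points: int = 4, days: int = 30) -> float:
    """Total monthly spend for a posture, i.e. a set of tiers."""
    return sum(monthly_cost(t, vantage_points, days) for t in tiers)


# Hypothetical "balanced" posture: cheap tests run often, costly tests run rarely.
BALANCED = [
    MonitorTier("dns_http", interval_seconds=120, cost_per_run_usd=0.0),
    MonitorTier("direct_api", interval_seconds=600, cost_per_run_usd=0.0005),
    MonitorTier("full_pipeline", interval_seconds=3600, cost_per_run_usd=0.01),
]

if __name__ == "__main__":
    for tier in BALANCED:
        print(f"{tier.name}: ${monthly_cost(tier):.2f}/month")
    print(f"total: ${posture_cost(BALANCED):.2f}/month")
```

Swapping in real per-run costs and intervals for each posture makes the trade-off between coverage and spend explicit before any test is scheduled.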

In alignment with this economic design strategy, we built a RAG-based AI application: a knowledge assistant powered by multiple LLM providers and a vector database. The system does not answer from memory alone; it first retrieves relevant knowledge from a curated source and uses that information to ground every response. This application serves as the monitoring target to demonstrate how ThousandEyes assures agentic application services by monitoring every dependency, because agentic applications are distributed systems in which each dependency presents a failure domain.

The following application stack allows a user to ask a question; the system embeds it, searches for relevant context, builds a response prompt, and returns an answer with cited sources.

| Application Stack | Role in the RAG AI Application |
| --- | --- |
| FastAPI on AWS EC2 | Represents the application running in the cloud, waiting. |
| OpenAI (Embeddings) | Embeddings are used to translate user questions into a format the knowledge base can search. |
| Pinecone Serverless | Serves as the knowledge base used to find the most relevant answers from curated content. |
| Anthropic Claude | The AI that reads what was found and writes you a clear, sourced answer. |
| GPT-4o via LiteLLM | The backup AI that takes over automatically if the primary is unavailable. |

The RAG application is not the focal point; its dependency chain is. That chain spans four external services and three cloud providers, with DNS resolution and TLS negotiation for each, along with network paths from multiple ThousandEyes cloud vantage points (Northern Virginia, Chicago, San Jose, and London) to every endpoint. A DNS timeout, an HTTP 429 rate limit, a deprecated model, stale vectors: material degradation in any link can degrade the user experience. Sometimes silently!

Comprehensive Coverage via ThousandEyes

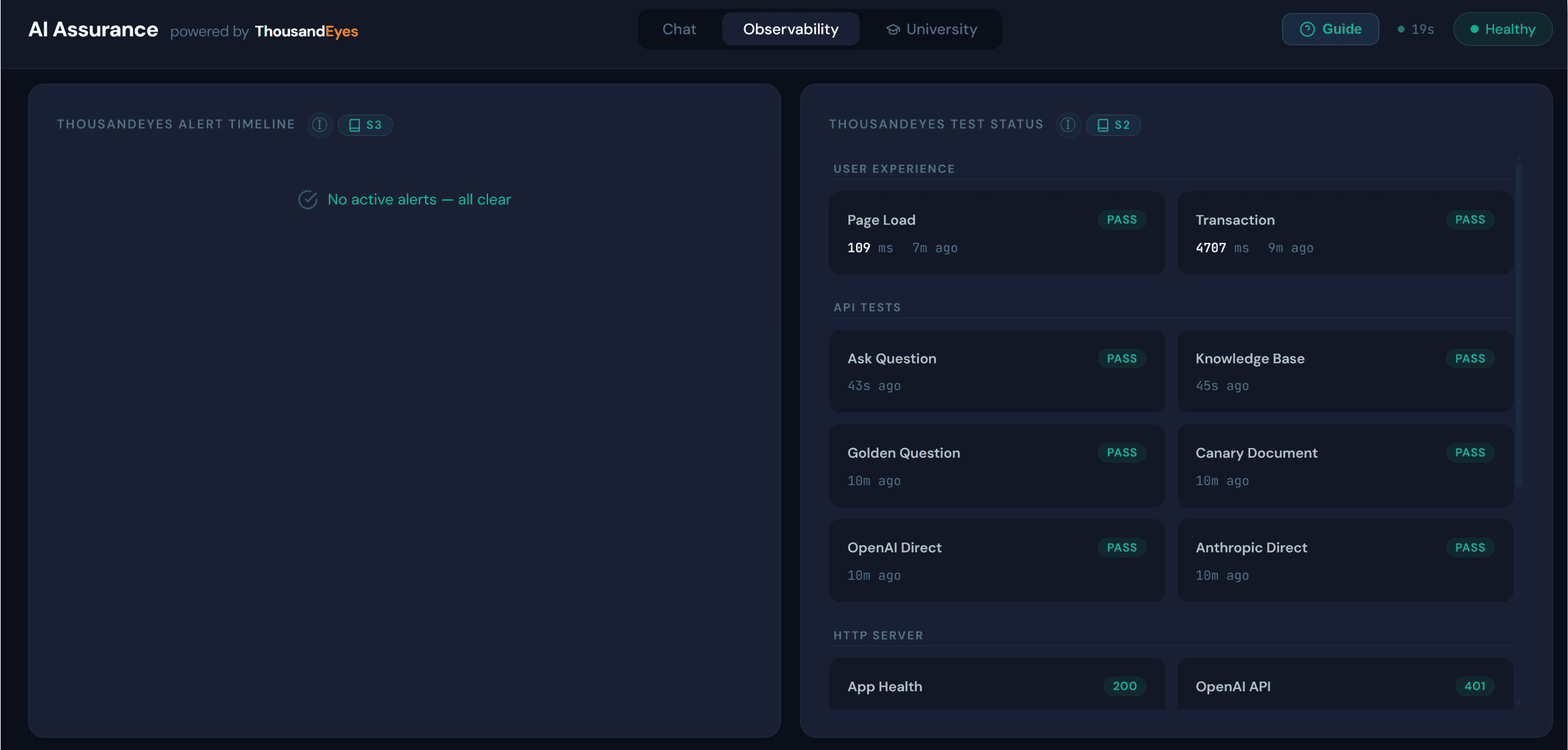

ThousandEyes monitors the entire pipeline with 16 tests spanning 5 test types.

| ThousandEyes Test Type | Description |
| --- | --- |
| DNS | Resolves every API domain, catching resolution failures before they surface as application errors. |
| HTTP | Validates reachability to each provider endpoint. |
| Page Load | Measures the full browsing experience. |
| API | Exercises the actual AI pipeline. Includes synthetic traffic that posts questions, validates the knowledge base, and calls provider APIs directly to isolate which dependency is responsible when something breaks. |
| Transaction | Runs multi-step workflows that emulate actual agentic interaction. We also incorporated a dedicated transaction test that monitors the ThousandEyes MCP endpoint itself, because when assurance relies on MCP, MCP becomes a dependency to monitor as well. |

Chaos Lab: AI Assurance Red Team

The university learning section in the dashboard unlocks an educational framework, referred to as Chaos Labs, where the architectural capabilities of the RAG application are put to the test by simulating experience degradation scenarios. The agentic loop follows a consistent pattern.

- First, the agent captures a baseline by calling the application’s /ask endpoint to record which model is responding, the current response time, the sources cited, and the retrieval quality.
- Then the failure is injected.
- The agent fires a ThousandEyes instant test via MCP, targeting the application from available ThousandEyes Cloud Agents. It collects evidence from the recurring test schedule and the on-demand diagnostic to confirm the state.
- Finally, it produces a structured, nine-section diagnosis covering what happened, how ThousandEyes detected it, what the baseline looked like, what changed, the evidence, and the operational lesson.
- Auto-revert restores normal operation.
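
The loop above can be sketched as a small orchestration function. This is a rough outline under stated assumptions rather than the Chaos Lab implementation: the four callables (`observe`, `inject_failure`, `run_instant_test`, `revert`) are hypothetical stand-ins for the /ask call, the failure injection, the MCP-triggered instant test, and the auto-revert, and the returned dictionary is a simplified placeholder for the nine-section diagnosis.

```python
from typing import Any, Callable, Dict


def run_chaos_scenario(
    observe: Callable[[], Dict[str, Any]],           # hypothetical: calls /ask, returns model, latency, sources
    inject_failure: Callable[[], None],              # hypothetical: breaks one dependency
    run_instant_test: Callable[[], Dict[str, Any]],  # hypothetical: on-demand diagnostic evidence
    revert: Callable[[], None],                      # hypothetical: restores normal operation
) -> Dict[str, Any]:
    """Baseline, inject, diagnose, and always revert."""
    baseline = observe()
    inject_failure()
    try:
        evidence = run_instant_test()
        degraded = observe()
        # Record every baseline field whose value differs after the injection.
        changed = {
            key: (baseline[key], degraded.get(key))
            for key in baseline
            if baseline[key] != degraded.get(key)
        }
        return {
            "baseline": baseline,
            "observed": degraded,
            "changed": changed,
            "evidence": evidence,
        }
    finally:
        # Runs even if the diagnostic raises, so the failure never outlives the lab.
        revert()
```

Placing the revert in a `finally` block is a design choice of this sketch: it guarantees the injected failure is rolled back even when evidence collection itself fails.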

Scenario 1: Hallucination Diagnostic

This mode helps organizations change how they handle hallucination risk, which is invisible to traditional network monitoring tools.

The MCP dashboard leverages two ThousandEyes API tests that work in concert:

- The first is a canary: it submits a question whose answer depends on a specific marker planted in the knowledge base. If the canary fails, the retrieval pipeline is not surfacing known content.
- The second is a golden question: it asks a factual question with verifiable answers and asserts specific values in the response. If the golden question fails, the model is not grounding on the knowledge base at all.

The diagnostic matrix:

- Both pass: System healthy. Retrieval and grounding are working.
- Canary fails, golden question passes: Retrieval is degraded, but the model is compensating with its training data. Answers may look correct but are not sourced from your curated knowledge. This is silent drift, dangerous precisely because the outputs look right.
- Both fail: The model is producing what the industry terms "hallucinations": fabricated answers with no basis in the knowledge base or verifiable data.
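
The diagnostic matrix above maps directly onto a small decision function. The sketch below assumes the two test outcomes are already available as booleans; the enum and function names are illustrative. The text does not describe the case where the canary passes but the golden question fails, so it is returned as unclassified rather than given an invented meaning.

```python
from enum import Enum


class Diagnosis(Enum):
    HEALTHY = "retrieval and grounding are working"
    SILENT_DRIFT = "retrieval degraded; model compensating from training data"
    HALLUCINATION = "answers have no basis in the knowledge base"
    UNCLASSIFIED = "combination not covered by the diagnostic matrix"


def diagnose(canary_passed: bool, golden_passed: bool) -> Diagnosis:
    """Map the two API test outcomes onto the diagnostic matrix."""
    if canary_passed and golden_passed:
        return Diagnosis.HEALTHY
    if not canary_passed and golden_passed:
        return Diagnosis.SILENT_DRIFT
    if not canary_passed and not golden_passed:
        return Diagnosis.HALLUCINATION
    # Canary passes but golden question fails: not described in the matrix.
    return Diagnosis.UNCLASSIFIED


if __name__ == "__main__":
    print(diagnose(canary_passed=False, golden_passed=True).name)
```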

For the CISO, this is an auditable control running at a configurable interval (for example, every five minutes), with deterministic assertions and logged results. When a regulator asks, “how do you know your AI is not fabricating answers?”, the response is not “we trust the model”; it is “we test it, automatically, around the clock, and here are the results powered by ThousandEyes.”

Scenario 2: The Silent Failover

When the primary LLM becomes unavailable, the system silently routes to the backup, and ThousandEyes catches this in its synthetic measurement. The responding model is different (and possibly more costly, to the dismay of FinOps). In a regulated context, risk management decisions may have been made by an unauthorized model, and without ThousandEyes API testing that asserts model identity, it’s possible no one would know until it is too late.

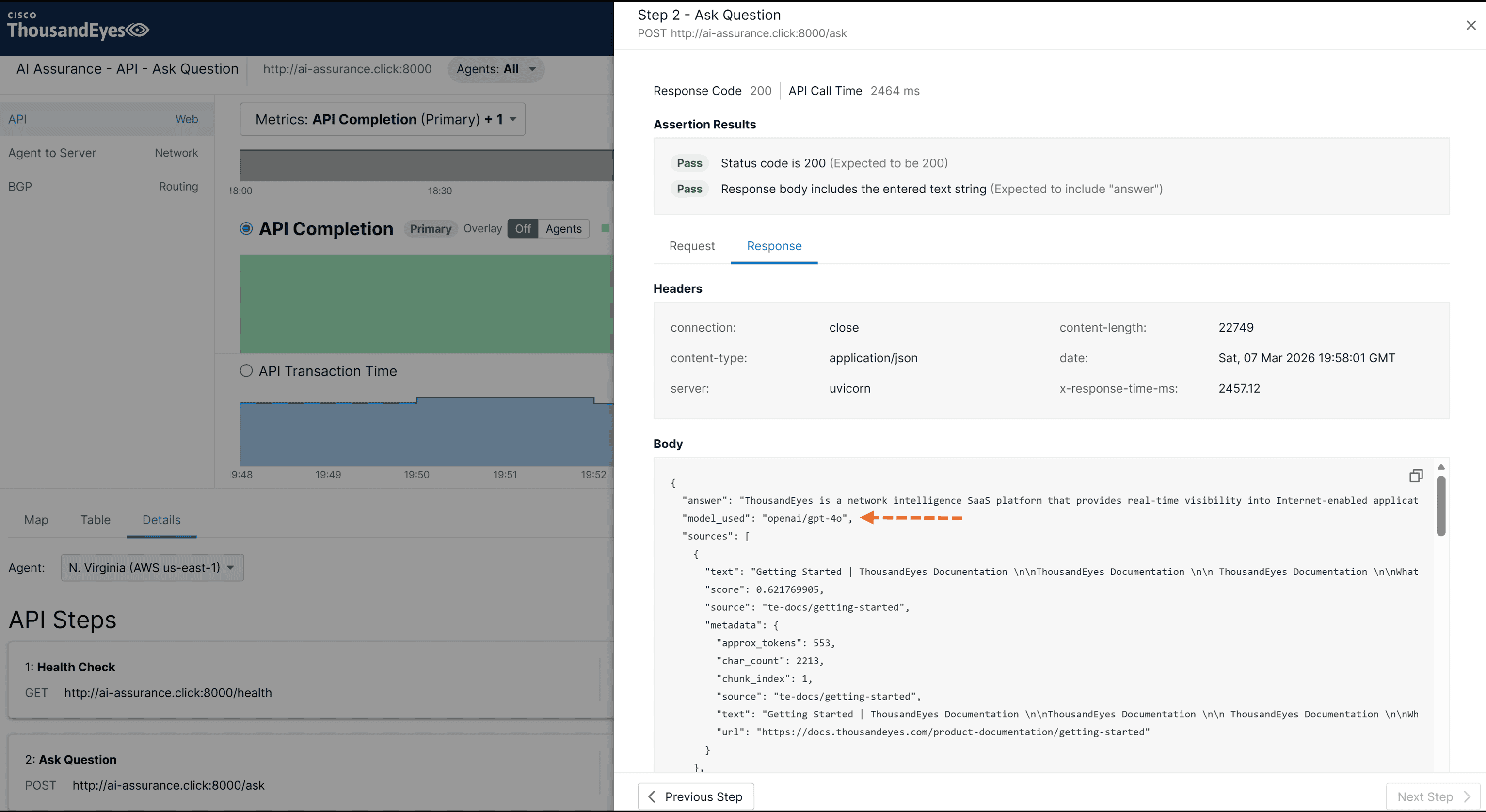

- API test captures the Ask Question endpoint during model failover and shows the response switched from Claude to GPT-4o.
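
A model identity assertion of the kind described here can be expressed in a few lines. The sketch below is illustrative only: it assumes the /ask response is JSON with a `model` field, which is a hypothetical shape, and the approved model names are placeholders.

```python
from typing import Any, Dict, Set, Tuple


def check_model_identity(
    response: Dict[str, Any], approved_models: Set[str]
) -> Tuple[bool, str]:
    """Return (passed, reason) for a parsed /ask response.

    Assumes the response reports the answering model under a "model" key;
    that field name is a hypothetical choice for this sketch.
    """
    model = response.get("model")
    if model is None:
        return False, "response did not report which model answered"
    if model not in approved_models:
        return False, f"unapproved model responded: {model}"
    return True, f"approved model responded: {model}"


if __name__ == "__main__":
    approved = {"claude"}  # placeholder name for the authorized primary model
    print(check_model_identity({"model": "gpt-4o", "answer": "..."}, approved))
```

Failing the test on an unapproved model, rather than only on an error status, is what turns a silent failover into a visible event.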

Scenario 3: The Billing Failure That Looks Like a Rate Limit

An LLM provider returned HTTP 400 (not 429) with “Your credit balance is too low” and a header x-should-retry: false.
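
Distinguishing this billing failure from a genuine rate limit depends on reading the status code, header, and body together. The following sketch shows one way to do that; the function name and return shape are hypothetical, and the matched string and header are taken from the response quoted above.

```python
from typing import Dict, Mapping


def classify_provider_error(
    status: int, headers: Mapping[str, str], body: str
) -> Dict[str, object]:
    """Classify a failed provider response as rate_limit, billing, or other."""
    # HTTP header names are case-insensitive, so normalize before lookup.
    normalized = {name.lower(): value for name, value in headers.items()}
    retry_hint = str(normalized.get("x-should-retry", "")).strip().lower()

    if status == 429:
        # Transient: retry unless the provider explicitly says not to.
        return {"kind": "rate_limit", "retry": retry_hint != "false"}
    if "credit balance is too low" in body.lower():
        # Billing event: retrying cannot succeed until the account is funded.
        return {"kind": "billing", "retry": False}
    return {"kind": "other", "retry": False}


if __name__ == "__main__":
    print(
        classify_provider_error(
            400,
            {"x-should-retry": "false"},
            '{"error": {"message": "Your credit balance is too low"}}',
        )
    )
```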

Relevance From Platform Engineer to the Board Room

- The AI Platform Engineer gets an early-warning system for embedding drift, index corruption, and provider-side model changes, catching problems before users notice.
- The FinOps Manager gets the distinction between a transient rate limit and a billing event, which is invisible at the network layer and visible only in the API response body that ThousandEyes captures.
- The CISO gets an auditable hallucination control that runs continuously, produces deterministic results, and maps to regulatory frameworks including NIST AI RMF and the EU AI Act.
- The Line-of-Business Owner gets the outcome: “We detected a provider billing failure in under five minutes, before it affected a single customer query. We validated knowledge base integrity every five minutes with an automated canary that no human needs to check.”
- Chief Risk Officer: A global bank’s fraud detection AI depends on multiple LLM providers. Model failover changes the risk profile of detection results. Knowledge base drift means grounding on stale regulatory guidance. Under DORA, the Chaos Lab provides automated proof that the detection chain works.
- Chief Medical Information Officer: A hospital system’s clinical decision support AI recommends drug interactions based on a RAG knowledge base. The hallucination canary becomes a patient safety control. If retrieval fails, the system is not consulting the current formulary. Both are detectable, auditable, and map to Joint Commission evidence requirements.

In Summary

In this blog we discussed a scenario where AI monitors AI, but the deeper story is the enhanced business relevance of ThousandEyes data when MCP becomes the interface layer. For more than 15 years, ThousandEyes has been a market leader for end-to-end network assurance and digital experience. MCP expands this to additional operational roles across the organizational landscape by simplifying how AI agents discover ThousandEyes tools at runtime, invoke them on behalf of any persona, and translate the results into the language of compliance, finance, facilities, clinical safety, AI platform operations, and many others. The network engineer still gets hop-by-hop latency, but the program manager gets a pass/fail compliance badge. The facilities manager gets an Experience Score, while the FinOps manager gets cost-per-query correlated to provider health. The CISO gets an auditable hallucination diagnostic, and the line-of-business owner gets the confidence metrics needed to support investment decisions. ThousandEyes addresses many personas and use cases, making it a strategic business asset for the Agentic Era.

Get started today and transform your business operations with the ThousandEyes MCP Server.