Your AI model can be perfectly healthy and still deliver a broken experience. In modern enterprise AI systems, reliability failures increasingly have nothing to do with the model itself—and everything to do with the context it depends on.

Enterprise AI systems have evolved rapidly. What started as simple prompt-driven interactions with large language models has grown into something far more complex and far more operationally sensitive.

Understanding and monitoring this shift is becoming essential for delivering trustworthy AI experiences at scale.

From prompts to context graphs: how AI systems evolved

Early AI deployments were relatively straightforward. A user provided a prompt, the model generated a response, and observability focused almost entirely on inference behavior—latency, errors, and output quality.

As AI moved into real business use, its architecture changed with it.

Prompt-based language models relied on static input and largely self-contained reasoning.

As expectations grew, the Model Context Protocol (MCP), an emerging standard, introduced a consistent way for AI systems to interact with external platforms, services, and data sources. This made it possible for models to reach beyond their original input. Agentic systems then built on that foundation, enabling AI to take multiple steps, call APIs, and combine information from several systems before responding.

Each of these shifts expanded what counted as part of the AI system.

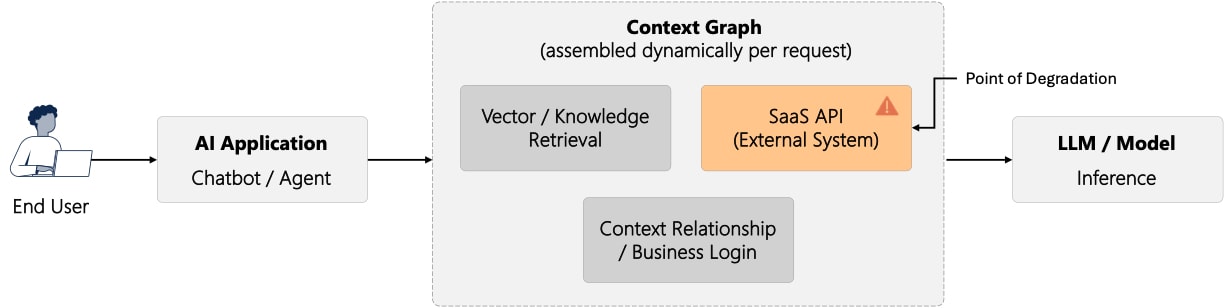

Today, many AI implementations rely on what can best be described as context graphs. These are dynamic collections of data sources, services, and relationships that are assembled in real time before a model generates a response. They often include SaaS platforms, internal systems, APIs, and knowledge sources that live across different clouds and regions.

At this stage, intelligence is no longer produced by the model in isolation. It is shaped by the context the model is given.

What is a context graph?

A context graph represents the set of dependencies and relationships an AI system relies on to reason and respond. Each node contributes information that shapes the model’s output. For example, an enterprise AI assistant answering a customer question may retrieve account data from an internal system, product documentation from a SaaS knowledge base, and real-time status from an external API. Together, these dependencies form the context graph that shapes the model’s response.
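As a rough illustration, the assistant example above could be modeled as a small dependency graph. This is a minimal sketch, not a prescribed schema: the class names, node names, and `kind` labels are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ContextNode:
    name: str
    kind: str  # hypothetical labels: "internal", "saas", "external_api", "model"
    depends_on: list = field(default_factory=list)


@dataclass
class ContextGraph:
    nodes: dict = field(default_factory=dict)

    def add(self, node: ContextNode) -> None:
        self.nodes[node.name] = node

    def dependencies_of(self, name: str) -> set:
        """Return every node that `name` depends on, directly or transitively."""
        seen = set()
        stack = list(self.nodes[name].depends_on)
        while stack:
            current = stack.pop()
            if current in seen or current not in self.nodes:
                continue
            seen.add(current)
            stack.extend(self.nodes[current].depends_on)
        return seen


# The three context sources from the example, plus the response they feed.
graph = ContextGraph()
graph.add(ContextNode("account_data", "internal"))
graph.add(ContextNode("product_docs", "saas"))
graph.add(ContextNode("service_status", "external_api"))
graph.add(ContextNode(
    "assistant_response", "model",
    depends_on=["account_data", "product_docs", "service_status"],
))

print(sorted(graph.dependencies_of("assistant_response")))
```

Listing the dependencies of a response this way gives a concrete inventory of what would need to be monitored for that response to stay reliable.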

Context graphs share three defining characteristics:

- They are distributed across networks, clouds, and third-party services
- They are dynamic, changing per user, request, or moment in time
- They fail quietly, often without causing application errors

When a node in the graph becomes slow, unavailable, or inconsistent, the AI system does not necessarily fail outright. Instead, responses degrade subtly—becoming slower, less complete, or less relevant.

This is where many modern AI reliability issues originate.

Why monitoring context graph nodes matters

Most efforts to observe AI systems focus on the model itself. Metrics such as inference latency, token usage, hallucinations, and prompt quality remain important, but they no longer tell the full story. A healthy model, on its own, does not guarantee a reliable AI system.

In a context-driven AI system, the model can be healthy. The application can return a successful HTTP response. And yet the experience for users can still degrade.

From a business perspective, these silent failures are the most damaging. Users lose confidence, and engineering or operations teams struggle to explain what changed because nothing appears broken on the surface.

Reliable AI systems are built in layers. Techniques such as retry logic, circuit breakers, caching, and fallback responses help absorb failures at runtime. Observability of the context graph is what makes those failures understandable by showing when context degrades, which dependency is responsible, and how the user experience is affected.
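One way to picture the two layers working together is a fallback wrapper that also reports what happened. The sketch below is illustrative only: the function name, the `slow_after_s` threshold, and the event fields are assumptions, and it measures elapsed time rather than enforcing a timeout.

```python
import time


def fetch_with_fallback(name, fetch, fallback, slow_after_s=1.0, clock=time.monotonic):
    """Call one context source, fall back on error, and describe the outcome."""
    start = clock()
    try:
        value, status = fetch(), "ok"
    except Exception:
        # Runtime resilience: the request still succeeds with reduced context.
        value, status = fallback, "fallback"
    elapsed_s = clock() - start
    if status == "ok" and elapsed_s > slow_after_s:
        status = "slow"
    # Observability: record which node degraded and how, instead of hiding it.
    return value, {"node": name, "status": status, "elapsed_s": elapsed_s}


def failing_source():
    # Hypothetical context source that fails for this demonstration.
    raise TimeoutError("knowledge API did not answer")


value, event = fetch_with_fallback("knowledge_api", failing_source, fallback=[])
print(event["node"], event["status"])  # prints: knowledge_api fallback
```

The fallback keeps the application responding, while the returned event is what lets a team later explain why a given answer was less complete.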

Monitoring the nodes of the context graph—their reachability, latency, and consistency—bridges this gap. It makes AI reliability observable in the same way users experience it: end-to-end.

Observing context graphs in action

Consider an AI workflow that pulls information from several external sources before generating a response. Each of these sources acts as a node in the context graph. Together, they form the information layer the model depends on at decision time. The diagram below shows how user requests move through this context graph before reaching the model and shaping the user experience.

This layout highlights a critical point. The model sits downstream of the context graph. Changes in the behavior of any context node can affect the AI experience, even when the model itself continues to operate normally. Reliability, in this sense, is determined less by inference health and more by how consistently context is delivered at decision time.

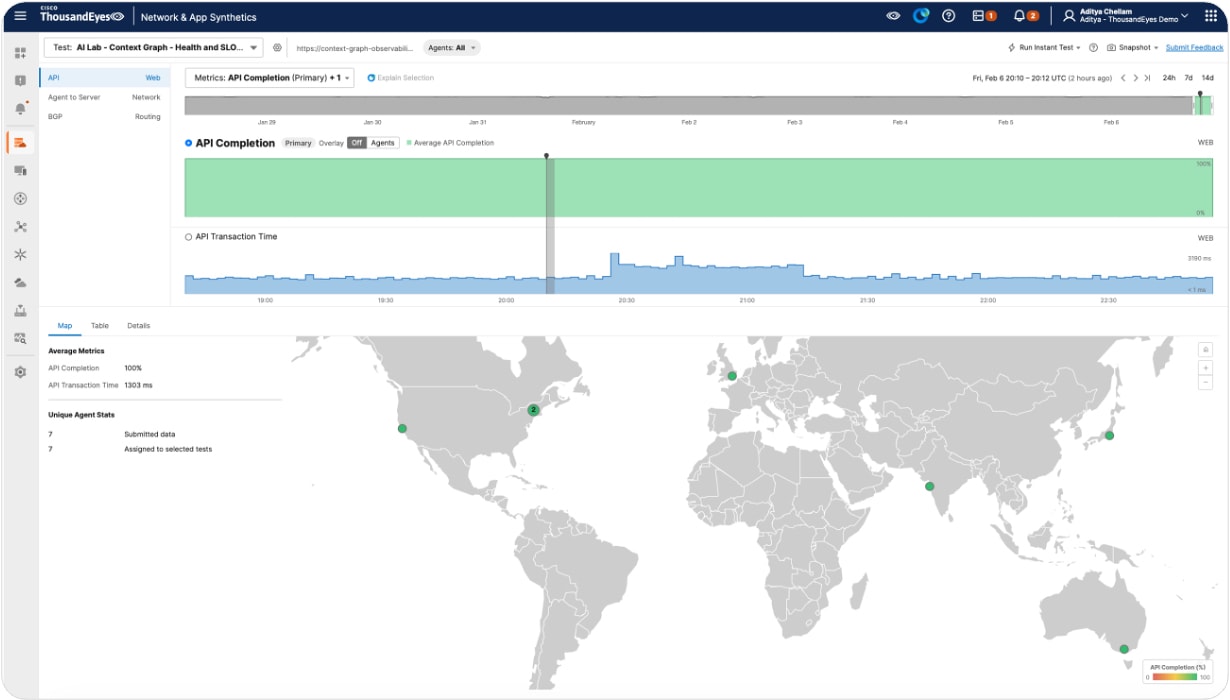

To make context graphs observable, each dependency is monitored as an external service interaction, reflecting how the AI system actually consumes it. In ThousandEyes, this is typically done using multi-step API or transaction tests that measure API completion, reachability, latency, and response behavior over time. Each API, SaaS platform, or internal service becomes a measurable node, allowing performance changes to be correlated directly with user experience rather than inferred from model behavior.
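To show the kind of signal such a test collects, here is a plain-Python stand-in for a single probe. It is not the ThousandEyes API or test configuration. The `probe` function, the `ProbeResult` fields, and the injectable `get_status` and `clock` parameters are assumptions made so the sketch can run without network access.

```python
import time
import urllib.error
import urllib.request
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProbeResult:
    node: str
    reachable: bool
    status: Optional[int]
    latency_ms: float


def http_get_status(url, timeout_s=5.0):
    """Return the HTTP status code for a GET request to `url`."""
    try:
        with urllib.request.urlopen(url, timeout=timeout_s) as response:
            return response.status
    except urllib.error.HTTPError as exc:
        # The server answered, just with an error status (4xx or 5xx).
        return exc.code


def probe(node, url, get_status=http_get_status, clock=time.perf_counter):
    """Measure reachability, status, and latency for one context node."""
    start = clock()
    try:
        status, reachable = get_status(url), True
    except OSError:
        # Covers URLError, connection failures, and socket timeouts.
        status, reachable = None, False
    latency_ms = (clock() - start) * 1000
    return ProbeResult(node, reachable, status, latency_ms)
```

Calling `probe("knowledge_api", url)` on a schedule for each dependency, and storing the results, would yield a per-node time series comparable in spirit to the views discussed below.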

When the system is observed over time, the impact of context degradation becomes clear, and its first signs appear in the user experience rather than in error counts. Completion time for a dependency can spike briefly while availability stays at 100 percent and no application errors are recorded. Subtle shifts in context delivery can therefore degrade AI experiences in a meaningful way without triggering traditional failure signals.
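The gap between an availability check and a latency check can be shown with a small sketch. The sample values and the threshold factor of 3 are hypothetical, and comparing each sample to the median is only one simple way to define a baseline.

```python
from statistics import median


def find_spikes(samples_ms, factor=3.0):
    """Return indexes of samples that exceed `factor` times the median."""
    baseline = median(samples_ms)
    return [i for i, ms in enumerate(samples_ms) if ms > factor * baseline]


# Hypothetical completion times (ms) and availability (%) per test round.
completion_ms = [310, 305, 320, 2400, 315, 300]
availability = [100, 100, 100, 100, 100, 100]

availability_alerts = [i for i, pct in enumerate(availability) if pct < 100]
latency_spikes = find_spikes(completion_ms)

print(availability_alerts)  # prints: []
print(latency_spikes)       # prints: [3]
```

An alert based only on availability stays silent here, while the latency series clearly marks the round in which the experience degraded.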

In the ThousandEyes view below, API completion time remains steady for an extended period, then briefly spikes before returning to baseline. Availability stays at 100 percent, and no application errors are recorded. From a user’s perspective, the system still functions, but performance degrades in a way that is immediately noticeable.

This pattern is common in production AI systems. For example, one enterprise AI assistant experienced sporadic slow responses during peak business hours. The model itself remained stable, but a third-party knowledge API intermittently slowed down due to regional congestion, subtly degrading response quality without triggering any alerts.

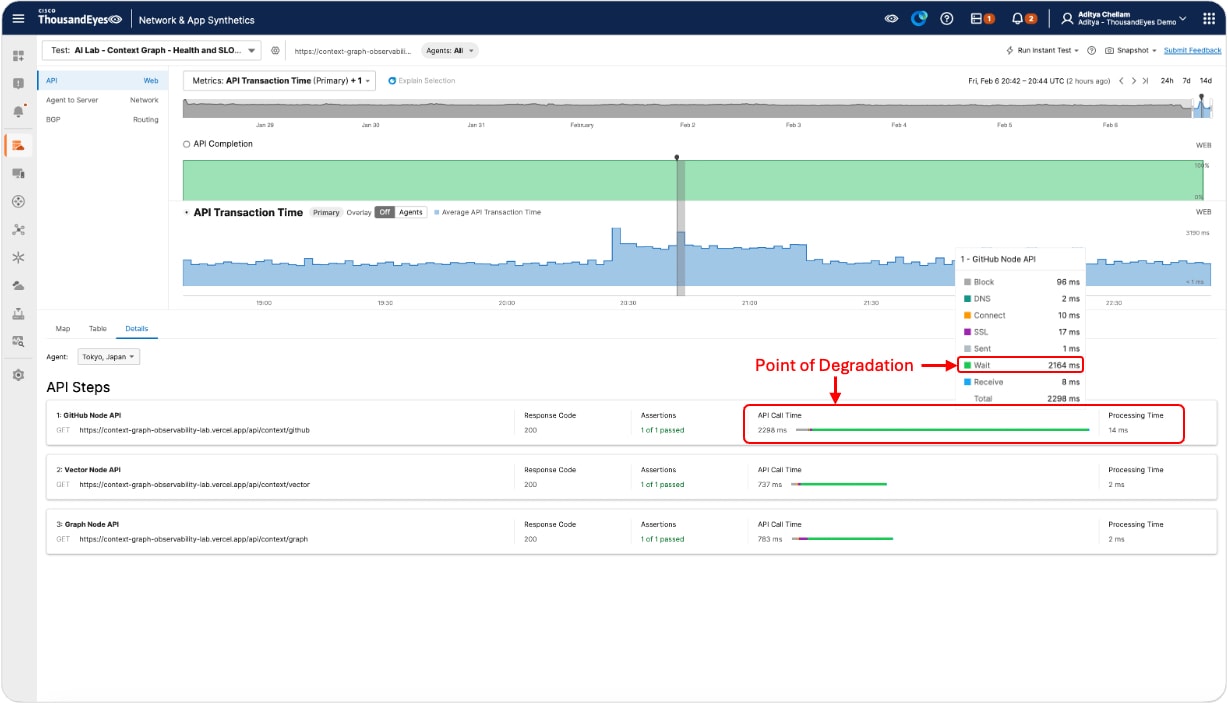

The timeline shows that something has changed, but not where the degradation originates. The next view breaks the context graph into individual API dependencies, making it possible to see which call is responsible.

In this breakdown, one dependency stands out. The GitHub API shows a sharp increase in response time. Under normal conditions, this node responds in the range of 50 to 200 milliseconds. During the period shown, response times rise to approximately 2,200 milliseconds, while all other context nodes remain healthy.
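The same comparison can be written as a few lines of code. In this sketch only the GitHub API figures (an upper baseline of 200 ms and an observed 2,200 ms) come from the breakdown above. The other node names and all of their values are hypothetical placeholders.

```python
def degraded_nodes(observed_ms, baseline_max_ms):
    """Map each node above its baseline ceiling to how many times over it is."""
    return {
        node: ms / baseline_max_ms[node]
        for node, ms in observed_ms.items()
        if ms > baseline_max_ms[node]
    }


baseline_max_ms = {
    "github_api": 200,      # upper end of the 50 to 200 ms range
    "knowledge_base": 300,  # hypothetical
    "status_api": 150,      # hypothetical
}
observed_ms = {
    "github_api": 2200,     # observed during the degraded period
    "knowledge_base": 180,  # hypothetical, within baseline
    "status_api": 95,       # hypothetical, within baseline
}

print(degraded_nodes(observed_ms, baseline_max_ms))  # prints: {'github_api': 11.0}
```

At roughly eleven times the top of its normal range, the one slow node is easy to isolate even though every node is still reachable.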

This node-level visibility allows teams to move quickly from symptom to cause. Instead of questioning model behavior or application logic, attention can be directed to the specific dependency affecting context delivery.

Crucially, this insight is gained without inspecting prompts, tokens, or model internals. By observing the context graph from the outside, teams can understand why the AI experience changed and where to act, even when the model itself shows no signs of failure.

Rather than focusing on what happens inside the model, the most useful signals are operational:

- How quickly does each context source respond?
- Does behavior change over time or across regions?
- Does a single degraded dependency affect the overall experience?

Viewed through this lens, a clear pattern emerges. When one context node slows down, end-to-end API transaction time increases immediately. When the node recovers, performance returns to baseline. There is no outage, but the user experience changes in a meaningful way.
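A short worked example shows why one slow node dominates the total. It assumes the steps of the transaction run one after another, so the end-to-end time is the sum of the step times. Apart from the 2,200 ms GitHub API figure, the step names and durations are hypothetical.

```python
def transaction_time_ms(step_times_ms):
    """End-to-end time for a transaction whose steps run sequentially."""
    return sum(step_times_ms.values())


# Hypothetical baseline step times; github_api sits inside its 50 to 200 ms range.
baseline = {"github_api": 150, "knowledge_base": 120, "status_api": 90}
# Same transaction while the GitHub API responds in about 2,200 ms.
degraded = dict(baseline, github_api=2200)

baseline_total = transaction_time_ms(baseline)
degraded_total = transaction_time_ms(degraded)

print(baseline_total)                   # prints: 360
print(degraded_total)                   # prints: 2410
print(degraded_total - baseline_total)  # prints: 2050
```

If the context sources were fetched in parallel instead, the total would follow the slowest step rather than the sum, and the slow node would still set the end-to-end time.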

This clarity makes it possible to distinguish between model issues, application issues, and problems rooted in context dependencies, which is essential for operating AI systems with confidence at scale.

The business value of context graph assurance

As organizations move AI from experimentation into production, reliability becomes a matter of assurance rather than innovation.

Silent degradation in context undermines trust. Customer interactions start to feel inconsistent. AI-driven decisions become harder to explain. Organizations hesitate to scale AI usage because behavior feels unpredictable.

Monitoring context graphs helps address these risks. It allows teams to detect experience degradation before users report it, explain AI behavior in operational terms, maintain consistency across regions, and build confidence in AI-driven workflows.

In practice, this is what enables AI systems to be depended on—not just demonstrated.

Conclusion: from AI intelligence to AI assurance

AI systems have evolved from isolated models into distributed, context-driven experiences. As that evolution continues, reliability is no longer defined solely by model performance. It is shaped by the health of the networks, services, and external systems that supply context at runtime.

Context graphs make this dependency explicit. They show that AI behavior is influenced as much by Internet paths, SaaS availability, and regional performance as by the model itself. When those dependencies degrade, AI experiences degrade too, even when inference metrics remain green.

This is where outside-in visibility becomes essential. Observing AI systems from the perspective of users, across the Internet and third-party services they rely on, allows organizations to move from reactive troubleshooting to proactive assurance.

As AI adoption accelerates, trust will depend less on how advanced models are, and more on how confidently organizations can ensure that context is delivered reliably, consistently, and globally. In this environment, monitoring context graphs is not just an observability concern; it becomes a prerequisite for operating dependable AI at scale.