This is The Internet Report, where we analyze outages and trends across the Internet through the lens of Cisco ThousandEyes Internet and Cloud Intelligence. I’ll be here every other week, sharing the latest outage numbers and highlighting a few interesting outages. As always, you can read the full analysis below or listen to the podcast for firsthand commentary.

Internet Outages & Trends

Cloud services fail. That is not a controversial statement. What is less well understood is how they fail, and what the pattern of a failure can tell you about where the problem is. In this episode we look at two recent disruptions, at Cloudflare and Salesforce, and what the telemetry behind each one reveals. In both cases, the failure was more specific and readable than the public narrative suggested. And in both cases, the most interesting part of the story is not that something broke, but how the combination of conditions that caused it came together.


Cloudflare Outage

On February 20, Cloudflare experienced a service disruption affecting customers using its Bring Your Own IP (BYOIP) service. BYOIP allows customers to use their own IP address ranges while leveraging Cloudflare's global network, with Cloudflare originating and advertising those prefixes on the customer's behalf. Because those IP ranges appear from the outside world to belong to the customer rather than to Cloudflare, affected organizations would not necessarily have immediately identified Cloudflare as the source of the problem—a point we return to below.

Automated Scale Gone Awry

According to Cloudflare's published post-mortem, the company had deployed an automated task designed to clean up BYOIP prefix configurations that were pending deletion. The task contained a bug that caused it to interpret an empty filter as meaning “delete everything” rather than “delete only the targeted entries.” As a result, the task selected effectively the entire active BYOIP prefix table for removal and began withdrawing those prefixes automatically until it was stopped.
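To make the failure mode concrete, here is a minimal Python sketch of how an empty filter can silently widen the scope of a cleanup job, alongside a fail-closed alternative. The `PrefixRecord` type, the `pending_delete` field, and both selector functions are hypothetical illustrations of the general pattern, not Cloudflare's code or API.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class PrefixRecord:
    prefix: str
    customer: str
    pending_delete: bool  # hypothetical flag marking a prefix for cleanup


def select_buggy(records: List[PrefixRecord], filters: Dict[str, object]) -> List[PrefixRecord]:
    # all() over an empty iterable is True, so an empty filter matches every record.
    return [r for r in records if all(getattr(r, k) == v for k, v in filters.items())]


def select_safe(records: List[PrefixRecord], filters: Dict[str, object]) -> List[PrefixRecord]:
    # Fail closed: an empty filter is treated as a mistake, not as "match everything",
    # and only records explicitly flagged for deletion can ever be selected.
    if not filters:
        raise ValueError("refusing to select prefixes for deletion with an empty filter")
    return [
        r for r in records
        if r.pending_delete and all(getattr(r, k) == v for k, v in filters.items())
    ]


table = [
    PrefixRecord("203.0.113.0/24", "customer-a", pending_delete=True),
    PrefixRecord("198.51.100.0/24", "customer-b", pending_delete=False),
]
print(len(select_buggy(table, {})))                       # 2: every prefix selected
print(len(select_safe(table, {"pending_delete": True})))  # 1
```

The design choice in `select_safe` is to fail closed: a missing filter becomes an error that someone has to look at, rather than a query that quietly matches the whole table.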

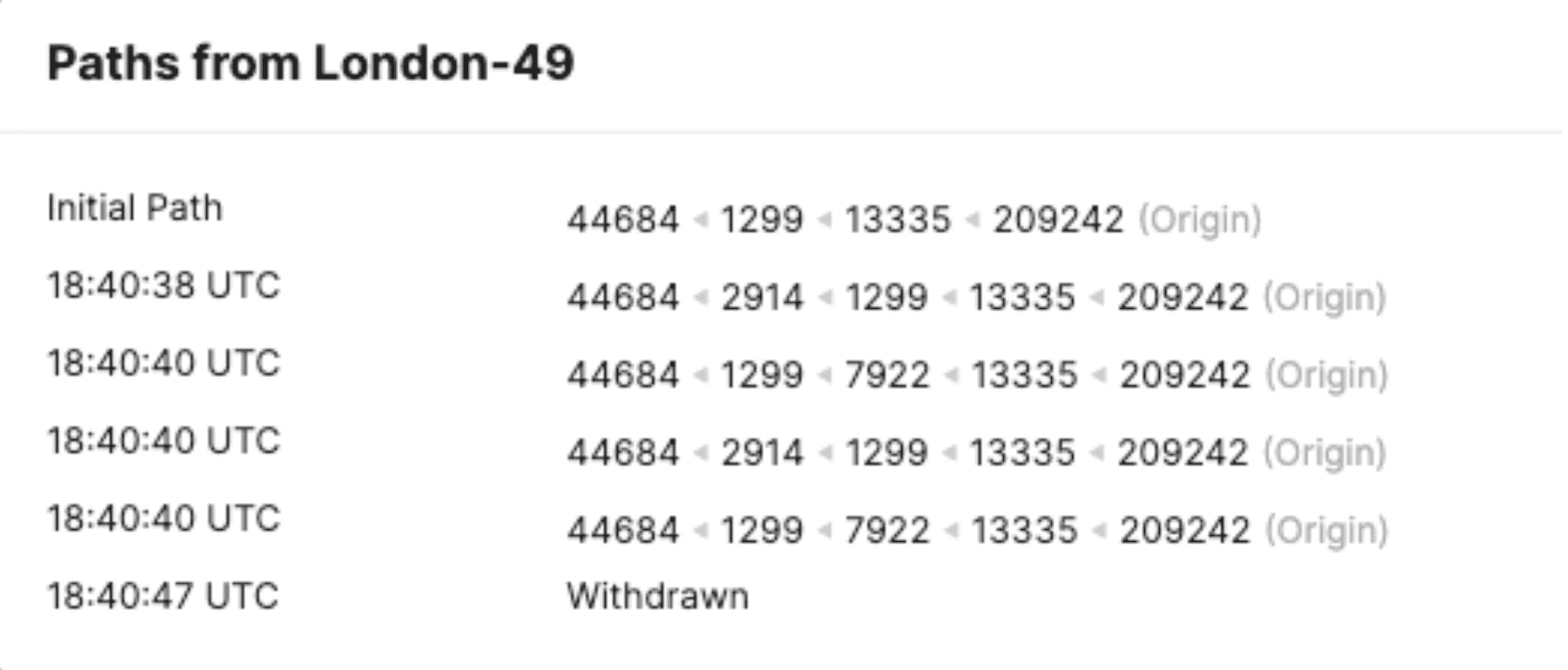

By the time Cloudflare identified what was happening and shut the task down, ThousandEyes observed that approximately 1,100 customer prefix advertisements had been withdrawn from the global routing table. Because these prefixes were advertised exclusively by Cloudflare, no other network could offer an alternative route: once the withdrawals had fully propagated, there was no valid path to those destinations anywhere on the Internet. Before that point, the withdrawals triggered "BGP path hunting," in which routers that still held older, now-invalid paths learned from their neighbors switched to those paths and re-advertised them, cycling through progressively longer alternatives while the routing table was unstable, until every router had processed the withdrawal and the prefixes disappeared from the routing table entirely.
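Path hunting is easier to see in a toy model. The Python sketch below assumes a hypothetical full mesh of n ASes, each also peering directly with a single origin (AS number 0, a placeholder), and runs BGP-style route selection in synchronous rounds with shortest-path preference and AS-path loop prevention. Real BGP is asynchronous and shaped by policy and MRAI timers, so treat this as an illustration of the mechanism rather than a model of the Cloudflare event.

```python
from typing import Dict, List, Optional, Tuple

Path = Tuple[int, ...]
ORIGIN = 0  # placeholder AS number for the sole origin of the prefix


def best_path(asn: int, rib_in: Dict[int, Optional[Path]]) -> Optional[Path]:
    """Shortest loop-free path this AS currently holds from any neighbor."""
    candidates = [p for p in rib_in.values() if p is not None and asn not in p]
    if not candidates:
        return None
    return min(candidates, key=lambda p: (len(p), p))  # deterministic tie-break


def step(ases: List[int], neighbors: Dict[int, List[int]],
         rib: Dict[int, Dict[int, Optional[Path]]], origin_active: bool):
    """One synchronous round: every AS advertises its current best path to all neighbors."""
    adverts: Dict[int, Optional[Path]] = {ORIGIN: (ORIGIN,) if origin_active else None}
    for a in ases:
        best = best_path(a, rib[a])
        adverts[a] = (a,) + best if best is not None else None
    return {a: {nbr: adverts[nbr] for nbr in neighbors[a]} for a in ases}


def simulate_withdrawal(n: int) -> List[int]:
    """Full mesh of n ASes, each also peering with the origin. After the origin withdraws,
    return the longest best path still in use in each round until no AS has a route."""
    ases = list(range(1, n + 1))
    neighbors = {a: [ORIGIN] + [b for b in ases if b != a] for a in ases}
    rib = {a: {nbr: None for nbr in neighbors[a]} for a in ases}
    while True:  # converge with the prefix advertised
        new_rib = step(ases, neighbors, rib, origin_active=True)
        if new_rib == rib:
            break
        rib = new_rib
    history: List[int] = []
    for _ in range(10 * n):  # safety bound; the model converges well before this
        rib = step(ases, neighbors, rib, origin_active=False)
        lengths = [len(p) for p in (best_path(a, rib[a]) for a in ases) if p is not None]
        if not lengths:
            break
        history.append(max(lengths))
    return history


print(simulate_withdrawal(3))  # [2, 3]
print(simulate_withdrawal(1))  # []
```

With three ASes, the longest path still in use grows from two hops to three (counting the origin) before every router gives up on the prefix; with a single AS and no alternates, the withdrawal takes effect without any hunting.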

The Hidden Dependency

The BYOIP architecture creates an inherent attribution challenge. Because the affected IP space appears from the outside to belong to the customer rather than to Cloudflare, there is no obvious signal pointing to Cloudflare as the source of the problem. End users experiencing failures would likely have attributed them to the service they were trying to reach. The organizations operating those services would have seen their own IP space becoming unreachable and had every reason to look inward first. This is the nature of a hidden dependency, wherein the infrastructure layer responsible for the failure is not visible at the surface where the failure is felt.

There is a further subtlety: even within the affected BYOIP customer base, not all customers were impacted equally. Cloudflare's own account indicates the cleanup task was applied iteratively and was stopped before it had reached all customers, which accounts for the fragmented impact radius. Additionally, where a customer's traffic was distributed across multiple products or providers, the failure may have appeared as a function-level disruption rather than an organization-wide outage, further obscuring the connection to Cloudflare.
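One practical counter to a hidden dependency is to keep an inventory of who actually announces your IP space to the Internet. This Python sketch assumes you already have a mapping from each of your prefixes to the origin ASN currently seen announcing it, for example exported from a BGP monitoring tool or a public route collector; the mapping, `OWN_ASN`, and the helper names are hypothetical. AS13335 is Cloudflare's ASN. If a provider announces your space using your own ASN, the origin alone will not reveal the dependency, and you would need to examine the rest of the AS path instead.

```python
import ipaddress
from typing import Dict, Optional, Tuple

OWN_ASN = 64500  # hypothetical: your organization's ASN (documentation range)

# Hypothetical inventory: each of your prefixes mapped to the origin ASN seen announcing it.
# AS13335 is Cloudflare's ASN, standing in here for a BYOIP provider.
observed_origins: Dict[str, int] = {
    "203.0.113.0/24": 13335,
    "198.51.100.0/24": OWN_ASN,
}


def third_party_origins(origins: Dict[str, int], own_asn: int) -> Dict[str, int]:
    """Prefixes you own that some other network is actually announcing."""
    return {p: asn for p, asn in origins.items() if asn != own_asn}


def covering_prefix(ip: str, origins: Dict[str, int]) -> Optional[Tuple[str, int]]:
    """Longest-prefix match: which inventoried prefix (and origin) covers this address?"""
    addr = ipaddress.ip_address(ip)
    matches = [ipaddress.ip_network(p) for p in origins if addr in ipaddress.ip_network(p)]
    if not matches:
        return None
    best = max(matches, key=lambda net: net.prefixlen)
    return str(best), origins[str(best)]


print(third_party_origins(observed_origins, OWN_ASN))    # {'203.0.113.0/24': 13335}
print(covering_prefix("203.0.113.10", observed_origins))  # ('203.0.113.0/24', 13335)
```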

Salesforce Service Disruption

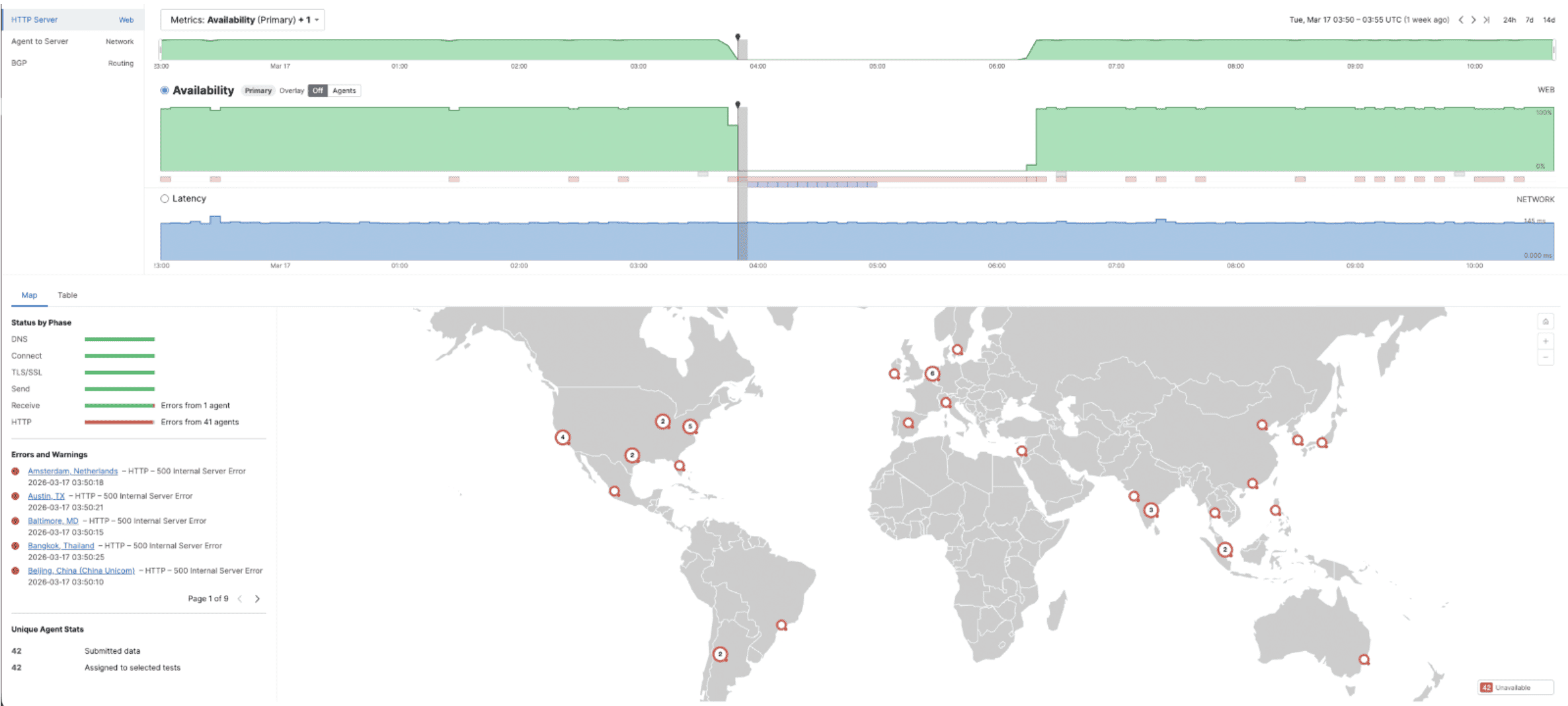

On March 17, Salesforce experienced a global service disruption. Cloud services have outages, and that in itself is not remarkable. What is worth examining is what the monitoring data tells us about how this one unfolded. The failure left a distinct three-stage sequence in the telemetry that, when read carefully, offers some indication of how the disruption progressed.

The Anatomy of a Backend Failure

ThousandEyes observed a sequence that began with HTTP 500 errors. A 500 is a broad server-side error code that simply means something went wrong internally: the server received the request and tried to process it, but failed before it could return a normal response. In other words, the backend was still alive and reachable but struggling. This was followed by HTTP 503 (Service Unavailable) errors, consistent with load balancers having stopped forwarding traffic to the backend. The sequence concluded with a recovery phase marked by receive-phase timeouts, in which connections were established but responses did not arrive in time. Across the tests observed, the network paths to Salesforce appeared to remain healthy throughout.

| Phase | Error | What It Tells Us |
| --- | --- | --- |
| Act 1 | HTTP 500 | Backend reachable but failing to process requests. Application-layer errors. |
| Act 2 | HTTP 503 | Consistent with load balancers having stopped forwarding traffic to the backend. Service fully unavailable. |
| Act 3 | Receive timeout | Backend accepting connections again but not yet serving. Consistent with warm-up / backlog drain. |
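The reasoning in the table can be expressed as a simple classifier over synthetic test results, collapsing a run of probes into an ordered list of phases. This is a Python sketch under stated assumptions: the `ProbeResult` fields and the phase labels are hypothetical, not ThousandEyes' data model. A 503 can also be generated by the backend itself, so each label records what a result is consistent with rather than where it definitively came from.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ProbeResult:
    connected: bool          # was a connection to the service established?
    status: Optional[int]    # HTTP status code, or None if no response arrived
    receive_timeout: bool    # connected and request sent, but no response before the timeout


def classify(r: ProbeResult) -> str:
    if not r.connected:
        return "network: no connection"
    if r.status is None:
        return "receive timeout" if r.receive_timeout else "other"
    if r.status == 503:
        return "503"      # consistent with a load balancer no longer forwarding traffic
    if 500 <= r.status < 600:
        return "5xx"      # backend reachable but failing to process the request
    if 200 <= r.status < 400:
        return "healthy"
    return "other"


def phase_sequence(results: List[ProbeResult]) -> List[str]:
    """Collapse consecutive identical classifications into an ordered list of phases."""
    phases: List[str] = []
    for r in results:
        label = classify(r)
        if not phases or phases[-1] != label:
            phases.append(label)
    return phases


observed = [
    ProbeResult(True, 200, False),
    ProbeResult(True, 500, False),
    ProbeResult(True, 503, False),
    ProbeResult(True, 503, False),
    ProbeResult(True, None, True),
    ProbeResult(True, 200, False),
]
print(phase_sequence(observed))  # ['healthy', '5xx', '503', 'receive timeout', 'healthy']
```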

The sequence is telling. Had this been a gradual degradation, you would expect a more mixed picture: some requests receiving 500s while others received 503s, geographic variation, and a longer transition window. What we observed was the opposite. The 500 phase was brief, and the shift to 503 appeared near-simultaneous across the tests observed. That pattern is more consistent with something failing suddenly at the backend: the 500s represent the brief window in which the backend was still running but unable to serve, before something upstream detected the failure and stopped forwarding traffic entirely.
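The sudden-versus-gradual distinction can be checked mechanically by asking two questions of the test rounds: how many rounds passed between the first 500 and the point where every vantage point saw a 503, and how many of those rounds were mixed. In this Python sketch, the data shape, the vantage-point names, and the thresholds (at most two rounds, at most one mixed round) are hypothetical choices, not values from the Salesforce incident.

```python
from typing import Dict, List

# Hypothetical data shape: one dict per test round, vantage point -> HTTP status.
Rounds = List[Dict[str, int]]


def characterize(rounds: Rounds, max_transition_rounds: int = 2) -> str:
    first_500 = next((i for i, r in enumerate(rounds) if 500 in r.values()), None)
    all_503 = next((i for i, r in enumerate(rounds) if r and set(r.values()) == {503}), None)
    if first_500 is None or all_503 is None or all_503 < first_500:
        return "no 500-to-503 transition found"
    # Rounds where some vantage points still saw 500 while others already saw 503.
    mixed = sum(1 for r in rounds[first_500:all_503] if {500, 503} <= set(r.values()))
    if all_503 - first_500 <= max_transition_rounds and mixed <= 1:
        return "sudden"
    return "gradual"


sudden = [
    {"us-east": 200, "eu-west": 200, "ap-south": 200},
    {"us-east": 500, "eu-west": 500, "ap-south": 500},
    {"us-east": 503, "eu-west": 503, "ap-south": 503},
]
gradual = [
    {"us-east": 200, "eu-west": 500, "ap-south": 200},
    {"us-east": 500, "eu-west": 503, "ap-south": 200},
    {"us-east": 503, "eu-west": 503, "ap-south": 500},
    {"us-east": 500, "eu-west": 503, "ap-south": 503},
    {"us-east": 503, "eu-west": 503, "ap-south": 503},
]
print(characterize(sudden))   # sudden
print(characterize(gradual))  # gradual
```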

Decoding the Telemetry

ThousandEyes observed that while some static page elements appeared to be served normally, the script responsible for loading the customized SSO login page was not always being returned. That script, authn-request.jsp, has to be served live from the backend every time; it cannot be cached or served from a content delivery network. Based on what the synthetic tests returned, it is reasonable to infer that users attempting to log in would likely have been presented with only a generic default login page rather than their organization's customized sign-in page.

The customized page requires a live backend call to retrieve org-specific configuration, and that call was not completing. Because this pattern was consistent across multiple tests and geographies, it points to a centralized shared component rather than a customer-specific or regional issue. And because cacheable static content continued to load while the request that had to read org-specific configuration did not, the data is consistent with a bottleneck in the database tier underpinning the authentication service.
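The scoping argument here (who is affected tells you where to look) can be sketched as a small decision function. This Python example assumes each synthetic test records which organization's login page it loaded, the region it ran from, and whether the live, uncacheable request completed; `LoginCheck` and `localize` are hypothetical names. The inference is only meaningful when tests cover several organizations and several regions.

```python
from typing import Iterable, NamedTuple


class LoginCheck(NamedTuple):
    org: str          # organization whose login page the test loaded (hypothetical)
    region: str       # region of the vantage point running the test
    dynamic_ok: bool  # did the live, uncacheable request complete?


def localize(checks: Iterable[LoginCheck]) -> str:
    """Rough scope inference from which orgs and regions see the dynamic request fail."""
    checks = list(checks)
    failing = [c for c in checks if not c.dynamic_ok]
    if not failing:
        return "no failure observed"
    all_orgs = {c.org for c in checks}
    all_regions = {c.region for c in checks}
    bad_orgs = {c.org for c in failing}
    bad_regions = {c.region for c in failing}
    if bad_orgs == all_orgs and bad_regions == all_regions:
        return "shared central component"
    if bad_orgs == all_orgs:
        return "regional"           # every org affected, but only in some regions
    if bad_regions == all_regions:
        return "customer-specific"  # some orgs affected, wherever they are tested from
    return "inconclusive"


orgs, regions = ("org-a", "org-b"), ("us", "eu", "apac")
everywhere = [LoginCheck(o, r, False) for o in orgs for r in regions]
print(localize(everywhere))  # shared central component
```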

What These Outages Tell Us

Both incidents illustrate a point worth dwelling on. In each case, the individual components involved were, in isolation, behaving consistently with their design. It was the combination of conditions that turned a contained problem into a widespread outage: in the Cloudflare case, a permissive API default meeting a destructive operation at scale; in the Salesforce case, a degrading backend component meeting the load and dependency profile of a global authentication service. Neither vendor had full visibility into that combination from inside its own systems, which is precisely why the external signal was readable before the internal picture was clear.

Two things are worth taking away from both incidents:

- Understand Your Dependencies: Understanding how your critical services are connected at the infrastructure level, not just at the application level, is what determines how quickly you can identify which combination of conditions is in play when something goes wrong.

- Automation Requires Guardrails: Automated processes operating at scale need safeguards proportionate to their blast radius. Both incidents involved processes behaving as designed, right up until the point where the consequences became uncontainable. The question worth asking after any incident like this is not just what broke, but what assumptions were baked into the system that allowed it to get that far.
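Applied to a bulk cleanup job like the one in the Cloudflare incident, the guardrail principle might look like the Python sketch below: a hard cap on the fraction of the table a single run may touch, plus batched execution that halts as soon as a health check fails. The 1% cap, the batch size, and the `delete` and `healthy` callbacks are hypothetical placeholders; this illustrates the idea and is not either vendor's remediation.

```python
from typing import Callable, List, Sequence


class BlastRadiusExceeded(RuntimeError):
    """Raised when a run would touch more of the table than the guardrail allows."""


def guarded_delete(
    candidates: Sequence[str],
    total_active: int,
    delete: Callable[[str], None],
    healthy: Callable[[], bool],
    max_fraction: float = 0.01,
    batch_size: int = 10,
) -> List[str]:
    if total_active <= 0:
        raise ValueError("total_active must be positive")
    # Guardrail 1: refuse outright if the selection exceeds the allowed fraction of the table.
    if len(candidates) > max_fraction * total_active:
        raise BlastRadiusExceeded(
            f"{len(candidates)} of {total_active} entries selected; limit is {max_fraction:.0%}"
        )
    deleted: List[str] = []
    # Guardrail 2: work in small batches and stop as soon as a health check fails.
    for start in range(0, len(candidates), batch_size):
        for item in candidates[start:start + batch_size]:
            delete(item)
            deleted.append(item)
        if not healthy():
            break
    return deleted


removed: List[str] = []
plan = [f"prefix-{i}" for i in range(20)]
done = guarded_delete(plan, total_active=4000, delete=removed.append,
                      healthy=lambda: False, batch_size=5)
print(len(done))  # 5: the run halts after the first batch because the health check fails
```

The two checks are complementary: the cap rejects an over-broad selection before anything is deleted, while the health check bounds how far a run can get if a bad selection slips under the cap.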

Outages like these are not exceptional events. They are an inevitable feature of operating at scale on complex interconnected infrastructure. The organizations that navigate them best are not necessarily those with the fewest failures, but those that can answer the right questions quickly when failures occur.

By the Numbers

Let’s close by taking our usual look at some of the global trends that ThousandEyes observed across ISPs, cloud service provider networks, collaboration app networks, and edge networks over recent weeks (January 26–March 22).

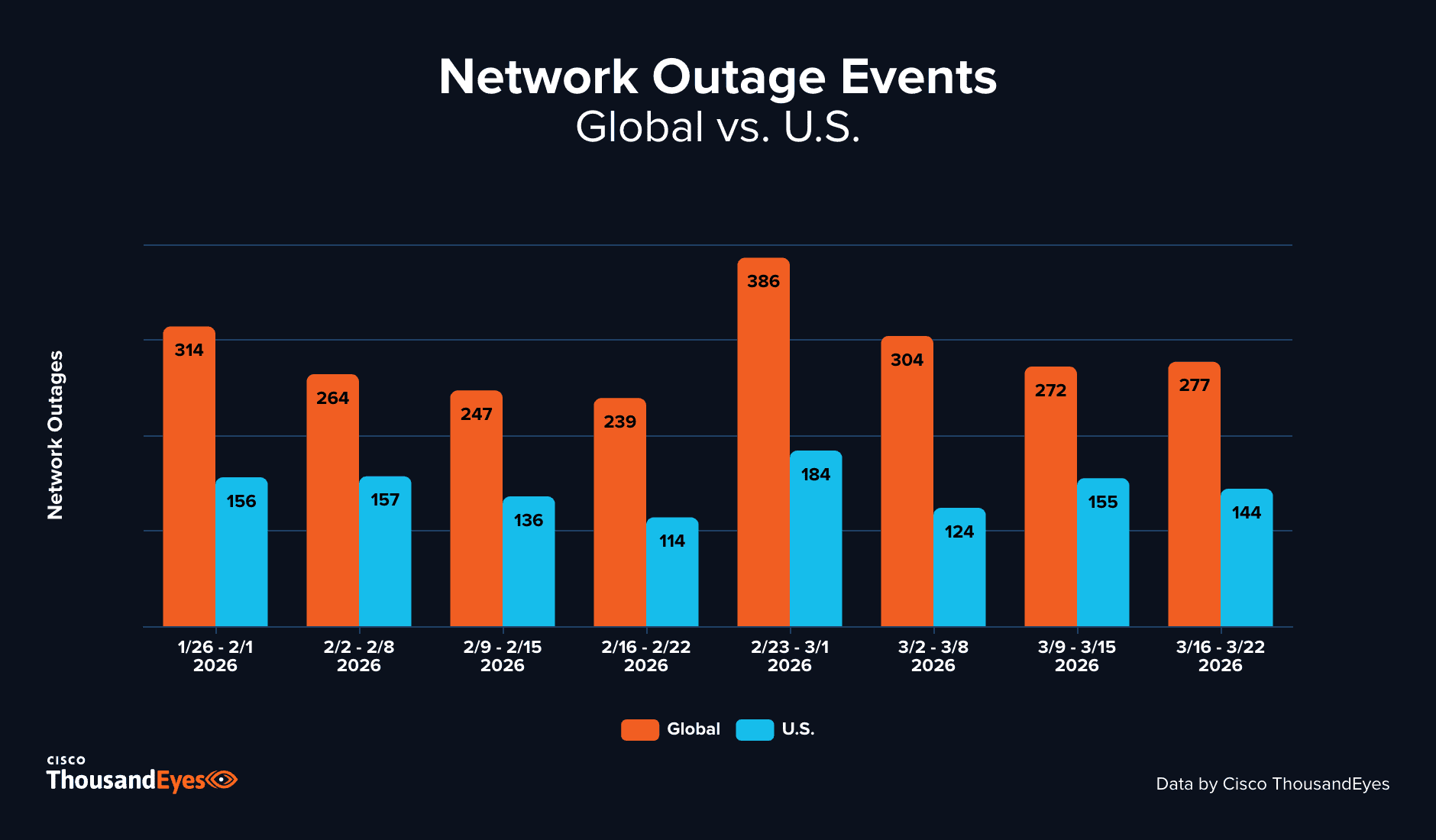

Global Outages

- From January 26–February 1, ThousandEyes observed 314 global outages, continuing the recovery in network activity that began in mid-January following the holiday period. During the week of February 2–8, global outages decreased 16%, falling to 264.

- During the week of February 9–15, global outages declined a further 6%, falling to 247. This continued a gradual downward trend that persisted into the following week, with outages edging slightly lower to 239 during February 16–22, the lowest weekly total in the observed period.

- The week of February 23–March 1 saw a sharp reversal, with global outages surging 62% to 386, the highest weekly total in the observed period. During the week of March 2–8, global outages declined 21% to 304, suggesting the spike was transient rather than the start of a sustained elevated period.

- During the week of March 9–15, global outages declined a further 11% to 272. The following week of March 16–22 saw outages remain essentially stable, rising marginally to 277.

United States Outages

- The United States saw 156 outages during the week of January 26–February 1, with activity remaining virtually unchanged the following week at 157 during February 2–8, suggesting a period of relative stability in U.S. network operations.

- During the week of February 9–15, U.S. outages decreased 13%, falling to 136. This decline deepened during February 16–22, with outages falling a further 16% to 114, the lowest U.S. weekly total in the observed period.

- Mirroring the global trend, the week of February 23–March 1 brought a sharp spike in U.S. activity, with outages rising 61% to 184. The following week of March 2–8 saw a significant correction, with U.S. outages falling 33% to 124.

- During the week of March 9–15, U.S. outages increased 25% to 155 before declining 7% to 144 during March 16–22, returning to levels broadly consistent with the earlier part of the observed period.

- The brief but pronounced spike during the week of February 23–March 1 was notable for affecting both global and U.S. networks in near-equal proportion. Across the full period, the United States accounted for approximately 50% of all observed network outages.
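The percentages above can be reproduced from the weekly totals. This short Python check recomputes the week-over-week changes for both series, rounded to whole percentages, and the U.S. share of all observed outages across the eight weeks, which comes to about 50.8%.

```python
from typing import List

# Weekly totals as reported above, January 26–February 1 through March 16–22.
global_weekly = [314, 264, 247, 239, 386, 304, 272, 277]
us_weekly = [156, 157, 136, 114, 184, 124, 155, 144]


def pct_changes(series: List[int]) -> List[int]:
    """Week-over-week change, in whole percent, for each consecutive pair of weeks."""
    return [round((b - a) / a * 100) for a, b in zip(series, series[1:])]


share = sum(us_weekly) / sum(global_weekly)
print(pct_changes(global_weekly))  # [-16, -6, -3, 62, -21, -11, 2]
print(pct_changes(us_weekly))      # [1, -13, -16, 61, -33, 25, -7]
print(f"{share:.1%}")              # 50.8%
```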

Month-over-month Trends

- Global network outages decreased marginally from December to January, falling less than 1% from 1,170 incidents to 1,160. This was followed by a further slight decrease in February, with global outages declining an additional 2% to 1,142.

- The United States showed a modest 4% increase from December to January, with outages rising from 587 to 608, before declining slightly to 600 in February, a 1% decrease month-over-month.

- This pattern is notably different from what we have previously observed. In prior years, January and February have typically seen elevated outage activity as network operators address maintenance work deferred from the holiday period. Whether the relative stability seen across December, January, and February 2025–26 reflects a broader shift in operational patterns, or simply an anomalous period, remains unclear. Data across all three months has tracked more evenly than we would typically expect at this time of year.