This is The Internet Report, where we analyze outages and trends across the Internet through the lens of Cisco ThousandEyes Internet and Cloud Intelligence. I’ll be here every other week, sharing the latest outage numbers and highlighting a few interesting outages. As always, you can read the full analysis below or listen to the podcast for firsthand commentary.

Internet Outages & Trends

On April 2, 2026, Microsoft 365 experienced a global service disruption that left users across every major region unable to access essential services for a little over an hour. While service was eventually restored by rerouting traffic, the event serves as a critical case study in the architecture of modern cloud services. It highlights a recurring reality: the impact radius of a failure is defined not by where the faulty component is located, but by what depends on it.

In this episode of The Internet Report, we break down the technical failure signatures, clarify the difference between mitigation and resolution, and explore why understanding your critical path is the ultimate insurance policy for your business.

The Anatomy of the April 2 Microsoft Outage

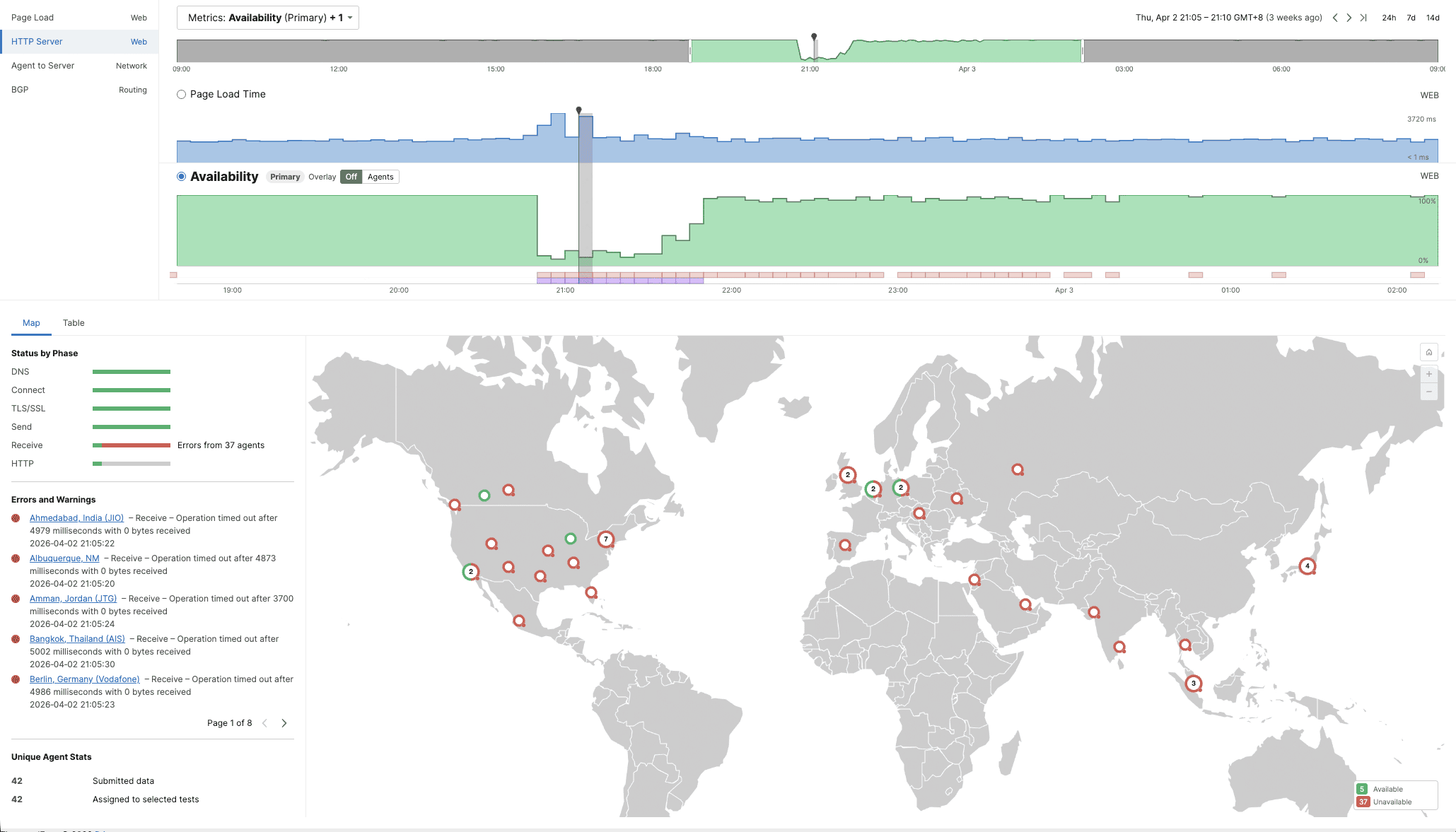

The first signs of trouble appeared at around 20:40 UTC (4:40 PM EDT). This was not a clean, instantaneous break. There were early indicators of degradation before the main event: response times crept upward in a handful of locations before things got significantly worse.

At approximately 20:50 UTC, availability dropped sharply. Within minutes, monitored locations across multiple regions were showing near-complete failure to reach Office.com. The transition from degraded to effectively unreachable was fast.

Partial service restoration was observed at around 21:54 UTC, though response times remained elevated for some locations beyond that point. The incident was not fully closed until 01:55 UTC on April 3. Recovery appeared gradual rather than instantaneous — the kind of pattern you see when traffic is being rebalanced across infrastructure rather than when a single switch is flipped back on.
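The timeline arithmetic is easy to check. The minimal sketch below treats the approximate times given above as exact and computes the main outage window (sharp drop to partial restoration) and the full span from first signs to formal closure. It also confirms the EDT conversion, assuming EDT is UTC-4 in April.

```python
from datetime import datetime, timedelta, timezone

UTC = timezone.utc
EDT = timezone(timedelta(hours=-4))  # Eastern Daylight Time in April

# Times from the incident description, treated as exact for this sketch.
first_signs = datetime(2026, 4, 2, 20, 40, tzinfo=UTC)
sharp_drop = datetime(2026, 4, 2, 20, 50, tzinfo=UTC)
partial_restore = datetime(2026, 4, 2, 21, 54, tzinfo=UTC)
incident_closed = datetime(2026, 4, 3, 1, 55, tzinfo=UTC)


def minutes(delta: timedelta) -> int:
    """Convert a timedelta to whole minutes."""
    return int(delta.total_seconds() // 60)


# Main outage window: availability drop to partial restoration.
outage_window = minutes(partial_restore - sharp_drop)
# Full incident span: first degradation to formal closure.
incident_span = minutes(incident_closed - first_signs)
first_signs_local = first_signs.astimezone(EDT)

print(f"Main outage window: {outage_window} min")  # 64 min
print(f"First signs to closure: {incident_span // 60} h {incident_span % 60} min")  # 5 h 15 min
print(f"First signs in EDT: {first_signs_local:%H:%M}")  # 16:40
```

The 64-minute window is the "little over an hour" of severe impact. The incident record stayed open for roughly five hours in total.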

Microsoft attributed the failure to a subset of infrastructure in their Central US data center that had entered an unexpected, degraded state, disrupting the handling of requests. Their mitigation was to reroute traffic away from the affected infrastructure and onto healthy infrastructure elsewhere.

Where the Failure Was—And Where It Was Felt

Microsoft’s incident communications described the impact as “largely localized to the Central US region.” This is a reasonable description of where the problem was. It is a less complete description of who was affected by it.

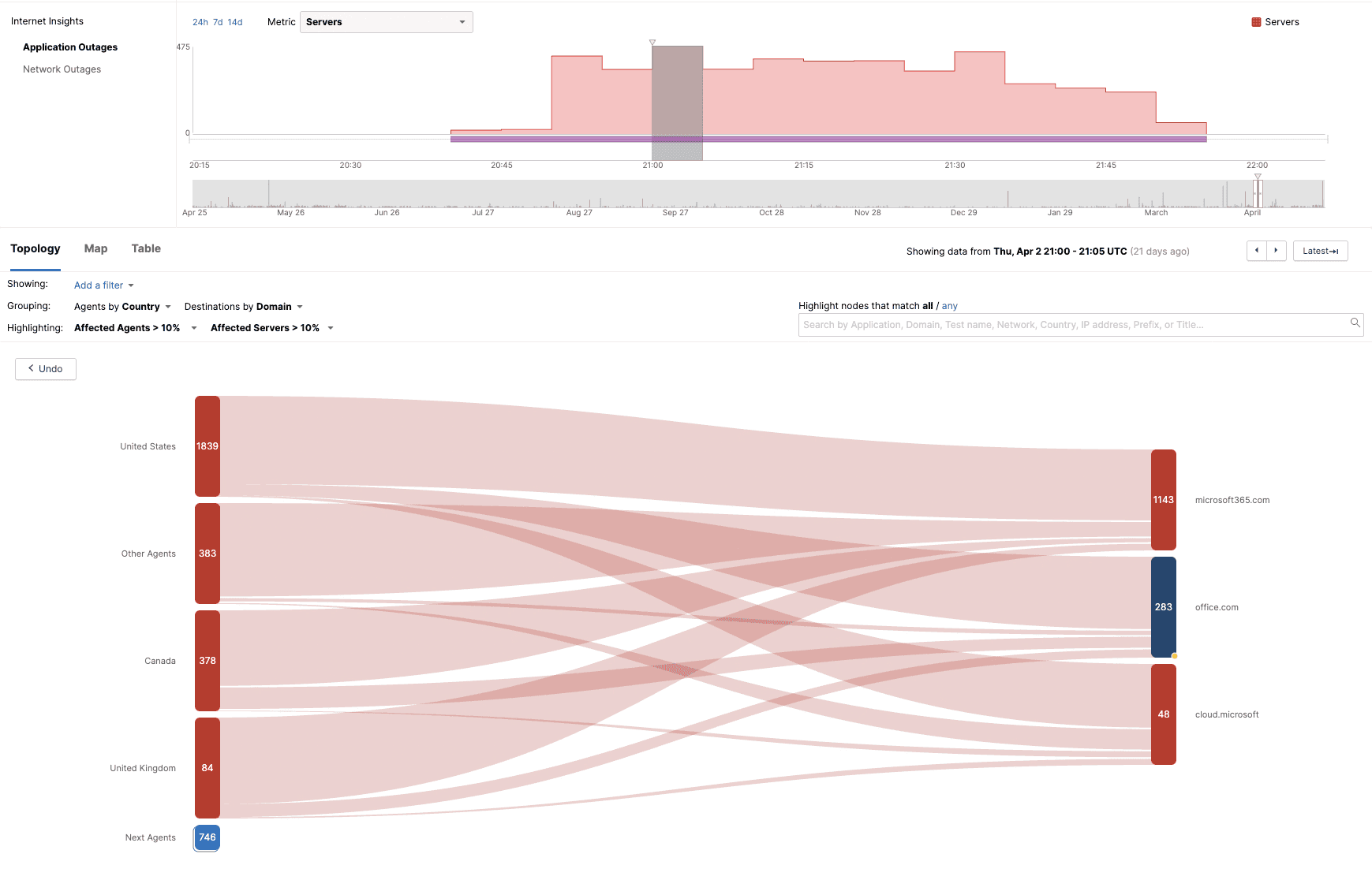

What was observed externally told a broader story. Simultaneous failures appeared across monitored locations in Edinburgh, London, Frankfurt, Berlin, Tokyo, Singapore, Mumbai, Manila, Amman, and Doha — alongside the Americas. These locations span every major region. The impact was global in practice, even if the cause was geographically specific.

These two descriptions are not contradictory. The distinction between them is worth understanding because it comes up repeatedly with large cloud services, and it shapes how you assess your own exposure when incidents like this one occur.

The Central US data center contained the degraded component. But that component was not just serving requests from people and systems in Central US. It was serving requests from users and dependent services everywhere. When it degraded, everything that depended on it degraded with it, regardless of where the originating request came from.

This is the architecture of modern cloud services. The infrastructure that processes requests is often centralized or shared across a global user base in ways that are not visible from the outside. The edge—the part of the service your connection first reaches—may be distributed and locally healthy. What sits behind the edge may not be. When a shared backend component fails, the blast radius is defined not by where the component is, but by what depends on it.
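The idea that blast radius follows dependencies rather than geography can be made concrete with a small dependency graph. This is a sketch under stated assumptions: the component names and edges below are hypothetical and do not describe Microsoft's actual architecture. The function inverts "depends on" edges and walks outward from the failed component to find everything that transitively depends on it.

```python
from collections import deque

# Hypothetical dependency map: each key depends on the components in its list.
# Names are illustrative only, not a real provider's architecture.
DEPENDS_ON = {
    "edge-europe": ["app-tier"],
    "edge-asia": ["app-tier"],
    "edge-americas": ["app-tier"],
    "app-tier": ["central-us-backend"],
    "central-us-backend": [],
    "status-page": ["static-hosting"],
    "static-hosting": [],
}


def blast_radius(failed, depends_on):
    """Return every component that transitively depends on `failed`."""
    # Invert the graph so we can walk from a component to its dependents.
    dependents = {name: [] for name in depends_on}
    for component, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, []).append(component)

    # Breadth-first walk over dependents, collecting each one once.
    affected = set()
    queue = deque([failed])
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, []):
            if parent not in affected:
                affected.add(parent)
                queue.append(parent)
    return affected


print(sorted(blast_radius("central-us-backend", DEPENDS_ON)))
# ['app-tier', 'edge-americas', 'edge-asia', 'edge-europe']
```

Every regional edge lands in the blast radius even though only one backend component failed, while the unrelated status page does not.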

Reading the Failure Signature

When a connection to a web service is made, it moves through a sequence of steps: DNS resolves the address, a TCP connection is established, a TLS handshake secures it, authentication passes, and then data is exchanged: a request goes out and a response comes back. Each of these phases can succeed or fail independently, and the pattern of which ones succeed and which ones fail tells you a great deal about where a problem actually is.
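The phase sequence above can be walked one step at a time with Python's standard `socket` and `ssl` modules. This is a minimal sketch, not how ThousandEyes tests are implemented: it records DNS, TCP, TLS, send, and receive separately, skips application-level authentication, and stops at the first failing phase. A timeout in the receive phase with every earlier phase "ok" is the "connection accepted, then silence" signature described in this section.

```python
import socket
import ssl


def probe(host, port=443, use_tls=True, timeout=5.0):
    """Walk DNS -> TCP -> TLS -> send -> receive and record each phase.

    Each phase maps to "ok", "failed: <reason>", "skipped" (an earlier
    phase failed) or "n/a" (TLS disabled). Authentication is not modeled.
    """
    result = {p: "skipped" for p in ("dns", "tcp", "tls", "send", "receive")}
    sock = None
    try:
        # Phase 1: resolve the hostname to an address.
        try:
            family, socktype, proto, _, address = socket.getaddrinfo(
                host, port, type=socket.SOCK_STREAM)[0]
            result["dns"] = "ok"
        except OSError as exc:
            result["dns"] = f"failed: {exc}"
            return result

        # Phase 2: establish the TCP connection.
        try:
            sock = socket.socket(family, socktype, proto)
            sock.settimeout(timeout)
            sock.connect(address)
            result["tcp"] = "ok"
        except OSError as exc:
            result["tcp"] = f"failed: {exc}"
            return result

        # Phase 3: TLS handshake (wrap_socket performs it on a connected socket).
        if use_tls:
            try:
                context = ssl.create_default_context()
                sock = context.wrap_socket(sock, server_hostname=host)
                result["tls"] = "ok"
            except OSError as exc:  # ssl.SSLError is a subclass of OSError
                result["tls"] = f"failed: {exc}"
                return result
        else:
            result["tls"] = "n/a"

        # Phase 4: send a minimal HTTP request.
        request = (f"GET / HTTP/1.1\r\nHost: {host}\r\n"
                   "Connection: close\r\n\r\n").encode()
        try:
            sock.sendall(request)
            result["send"] = "ok"
        except OSError as exc:
            result["send"] = f"failed: {exc}"
            return result

        # Phase 5: wait for the first byte of the response.
        try:
            first = sock.recv(1)
            result["receive"] = "ok" if first else "failed: closed without data"
        except OSError as exc:  # includes socket timeouts
            result["receive"] = f"failed: {exc}"
        return result
    finally:
        if sock is not None:
            sock.close()
```

Calling `probe("example.com")` against a healthy HTTPS site should report "ok" for every phase. Pointing it (with `use_tls=False`) at a local listener that accepts connections but never writes reproduces the receive-phase failure.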

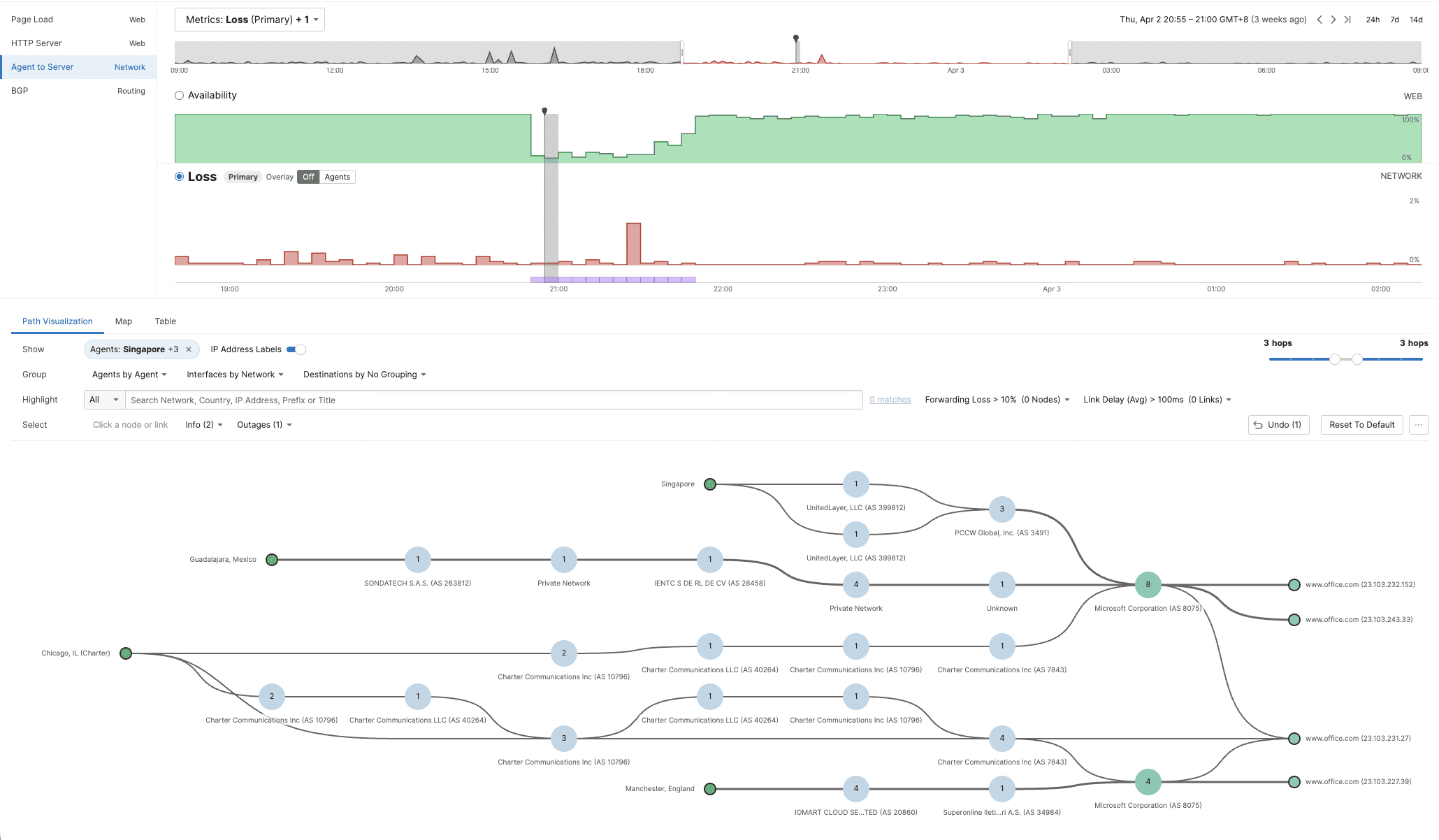

During this outage, DNS worked normally. TCP connections established successfully. TLS handshakes completed. Authentication passed. The failure was at the very end of that chain, the receive phase. Connections were established and then met with silence. The server accepted the connection and never returned a response.

This is an informative failure signature. It places the problem not in the network, the DNS infrastructure, or the edge, but in the application backend: the part of the service that actually processes requests and generates responses. The connection machinery worked. The request processing did not.
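Reading a signature comes down to finding the first phase that failed and mapping it to where the fault most likely sits. The sketch below encodes that heuristic. The mapping table and the per-city observations are hypothetical illustrations shaped like the April 2 pattern, not measured data, and a real diagnosis would weigh more evidence than a single phase.

```python
from typing import Dict, Optional

# Request phases in order, matching the sequence described in the text.
PHASES = ("dns", "tcp", "tls", "auth", "receive")

# Heuristic mapping from the first failing phase to the likely fault location.
LIKELY_LOCATION = {
    "dns": "DNS resolution",
    "tcp": "network path or edge listener",
    "tls": "edge TLS termination",
    "auth": "identity or authentication service",
    "receive": "application backend (connection accepted, no response)",
}


def first_failure(outcomes: Dict[str, bool]) -> Optional[str]:
    """Return the first phase that did not succeed, or None if all passed.

    A phase missing from `outcomes` is treated as not having succeeded.
    """
    for phase in PHASES:
        if not outcomes.get(phase, False):
            return phase
    return None


def classify(outcomes: Dict[str, bool]) -> str:
    """Map a set of phase outcomes to the most likely fault location."""
    phase = first_failure(outcomes)
    return "no failure" if phase is None else LIKELY_LOCATION[phase]


# Hypothetical observations: every phase passes except receive.
observed = {
    city: {"dns": True, "tcp": True, "tls": True, "auth": True, "receive": False}
    for city in ("Edinburgh", "Tokyo", "Doha")
}
for city, outcomes in observed.items():
    print(city, "->", classify(outcomes))
```

When many unrelated locations share the same first failing phase, that consistency is itself evidence that they are hitting the same fault.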

The network layer during this period showed some minor anomalies in isolated locations: slightly elevated latency and a small amount of packet loss here and there. These are worth noting, but they are also worth contextualizing. Locations with perfectly clean network conditions experienced complete application failure. Some locations with elevated network metrics still received responses. The network anomalies and the application failures did not correlate. The network was background noise in this incident, not a contributing factor.

What the pattern does point to is something sitting deeper in the service architecture. A shared component that all those geographically dispersed requests had to pass through was no longer responding. When a component like that degrades, the failure appears simultaneously across locations that may have nothing in common except their dependence on that shared piece of infrastructure.
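The reasoning about a shared component can be expressed as set arithmetic: intersect the components on every failing path, then remove anything that also appears on a healthy path. This is a sketch with hypothetical paths. From outside a provider you rarely see these internal components, so treat this as an illustration of the inference, not a tool that can see inside Microsoft 365.

```python
# Hypothetical component paths per monitored location (illustrative names).
PATHS = {
    "Edinburgh": {"dns", "edge-eu", "app-tier", "backend-central-us"},
    "Tokyo": {"dns", "edge-apac", "app-tier", "backend-central-us"},
    "Mumbai": {"dns", "edge-apac", "app-tier", "backend-central-us"},
    "status-check": {"dns", "edge-eu", "static-site"},
}
FAILING = {"Edinburgh", "Tokyo", "Mumbai"}


def shared_suspects(paths, failing):
    """Components present on every failing path and on no healthy path."""
    on_all_failing = set.intersection(*(paths[loc] for loc in failing))
    on_any_healthy = set().union(
        *(paths[loc] for loc in paths if loc not in failing))
    return on_all_failing - on_any_healthy


print(sorted(shared_suspects(PATHS, FAILING)))
# ['app-tier', 'backend-central-us']
```

DNS and the European edge drop out because a healthy check also used them, leaving only the shared components behind the edge as suspects.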

Mitigation vs. Resolution

Microsoft restored service by rerouting traffic. Specifically, their incident update sequence shows that targeted restarts of the degraded infrastructure did not resolve the problem, so the decision was made to redirect traffic to healthy infrastructure elsewhere. This worked: service came back and remained stable in the days that followed.

It is worth being clear about what this approach is. Rerouting is a mitigation: it removes the impact by routing around the problem rather than by fixing it. The degraded infrastructure in Central US was not repaired during the incident window; requests were instead sent somewhere else. This is a legitimate and widely used approach to incident response. In many situations it is the right call; getting the service back up is the priority, and a complex infrastructure failure may take hours or days to fully diagnose and repair. Rerouting achieves restoration far faster.

The distinction matters for a practical reason. When a provider reroutes traffic rather than fixing the underlying cause, the story is not complete at the point of restoration. The underlying issue still has to be addressed separately, and the infrastructure that absorbed the rerouted traffic is now carrying additional load. Depending on how the original infrastructure is eventually remediated, there is at least a possibility of further disruption during that process.
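The point about absorbing infrastructure carrying additional load can be illustrated with a simple redistribution model. This sketch assumes rerouted load is spread across the remaining sites in proportion to their spare capacity; real traffic steering is far more complex, and the site names and numbers are hypothetical.

```python
def reroute(load, capacity, failed):
    """Shift the failed site's load onto the others, proportional to spare capacity.

    Returns the new utilization (load / capacity) of each remaining site.
    Raises ValueError if the remaining sites cannot absorb the load.
    """
    moved = load[failed]
    spare = {s: max(0.0, capacity[s] - load[s]) for s in load if s != failed}
    total_spare = sum(spare.values())
    if moved > total_spare:
        raise ValueError("not enough spare capacity to absorb rerouted load")
    # Fraction of each site's spare capacity that the moved load consumes.
    fill = moved / total_spare if total_spare else 0.0
    return {s: (load[s] + fill * spare[s]) / capacity[s] for s in spare}


# Hypothetical sites: each has capacity 100, and central-us is taken out of service.
load = {"central-us": 40, "east-us": 50, "west-us": 50}
capacity = {"central-us": 100, "east-us": 100, "west-us": 100}
print(reroute(load, capacity, "central-us"))
```

In this example both remaining sites go from 50% to 70% utilization. Service is restored, but with less headroom than before until the original site is repaired.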

In this case the outcome was straightforward: the mitigation held, the service remained stable, and there were no signs of recurrence. The broader takeaway still stands. When an incident update says “we rerouted traffic” and service has been restored, that is meaningful, but it does not necessarily mean the underlying problem has been fixed.

The Artemis II Hook: What is in Your Critical Path?

This was all happening on the day after the launch of Artemis II. News outlets picked up on reports of an issue with the Microsoft Outlook email client on a crew member’s personal laptop, and some stories drew a connection between this and the Microsoft 365 outage.

The connection does not hold up to examination, and the flight director confirmed as much in the post-launch press conference: it was a known configuration issue related to offline synchronization, resolved by the ground team reloading the relevant files. It was not a launch hold condition.

This is because Outlook on a personal crew device is a commercial off-the-shelf application used for personal scheduling and communications. It is not part of the command-and-control chain for Artemis II. None of its reliability requirements, connectivity dependencies, or recovery behaviors are compatible with what sits inside a critical mission workflow. The email client being unavailable was an inconvenience. It was not consequential to the mission.

The Artemis II Outlook story is a reasonable hook. The more interesting question it opens up is: How do you actually know what is and is not in your critical path?

In a launch operations context, the answer to that question has to be precise. Every component in every formal communications chain, and every system on which any part of the go/no-go sequence depends, has to be explicitly defined and continuously validated. If something in that chain fails, even something that looks peripheral, the right signal has to propagate to the right person at the right time, not because of what the component is, but because of where it sits in the chain.

Understanding which components sit inside critical workflows and continuously validating that those components are doing what they are supposed to do end to end is not unique to spaceflight. This discipline applies to any environment where the cost of finding out a component has failed at the wrong moment is high.

And this is where the Microsoft 365 outage becomes relevant again, at a rather more everyday scale. The edge infrastructure that users connect to was functioning correctly throughout the incident. The network paths were largely clean. What failed was a shared backend component sitting deeper in the architecture, behind the visible surface of the service.

For any organization whose workflows depend on Microsoft 365, it’s important to ask not just whether the service was down, but which specific workflows were affected and whether that was known before or after they stopped working.

These are different questions. The first is answered by watching a status page. The second requires knowing, in advance, what your service delivery chains actually depend on.
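Knowing in advance what a service delivery chain depends on amounts to keeping a dependency inventory and computing each workflow's transitive dependencies. The sketch below assumes such an inventory exists; every workflow and component name in it is hypothetical. It answers the question "which workflows would stop if this component failed?" before that component actually fails.

```python
# Hypothetical dependency inventory: each entry lists its direct dependencies.
DEPENDS_ON = {
    "month-end-close": ["erp", "exchange-online"],
    "customer-support": ["ticketing", "phone-system"],
    "erp": ["identity-provider"],
    "exchange-online": ["identity-provider", "m365-backend"],
    "ticketing": ["identity-provider"],
    "phone-system": [],
    "identity-provider": [],
    "m365-backend": [],
}
WORKFLOWS = ["month-end-close", "customer-support"]


def critical_path(workflow, depends_on):
    """All components the workflow depends on, directly or transitively."""
    seen = set()
    stack = list(depends_on.get(workflow, []))
    while stack:
        component = stack.pop()
        if component not in seen:
            seen.add(component)
            stack.extend(depends_on.get(component, []))
    return seen


def exposed_workflows(component, depends_on, workflows):
    """Workflows whose critical path includes `component`."""
    return [w for w in workflows if component in critical_path(w, depends_on)]


print(exposed_workflows("m365-backend", DEPENDS_ON, WORKFLOWS))       # ['month-end-close']
print(exposed_workflows("identity-provider", DEPENDS_ON, WORKFLOWS))  # both workflows
```

The inventory is only as good as its upkeep. Continuous end-to-end tests of each workflow are what confirm that the declared dependencies match what the workflow actually uses.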

Lessons for NetOps

A few things are worth keeping from this outage.

The first is that the location of a failure and the scope of its impact are often two different things, and understanding why that is tells you something useful about how the services you depend on are built. Modern cloud services are layered, and the layers that are visible to you are often not the layers where problems originate.

The second is that reading a failure pattern—understanding what succeeded and what failed, and in what sequence—is more useful than simply knowing that something is down. A failure at the receive phase, with everything else working, is telling you something specific about where the problem is. That specificity matters when you are trying to understand your exposure and make decisions under uncertainty.

The third is that knowing whether you are looking at restoration or remediation changes how you think about what comes next. Neither of these is a criticism of how Microsoft handled this outage. Rerouting worked, and service has remained stable. They are simply useful distinctions to carry when reading incident communications from any provider.

And the fourth, perhaps the most durable, is the question the Artemis II story opens up without quite asking it: Do you know what is actually in your critical path? Not in principle, but specifically which components, which dependencies, which shared services? And are you finding that out when it matters, or afterwards?

By the Numbers

Let's close by taking our usual look at some of the global trends that ThousandEyes observed across ISPs, cloud service provider networks, collaboration app networks, and edge networks over recent weeks (March 23–April 5), along with a look back at March as a complete month.

Global Outages

-

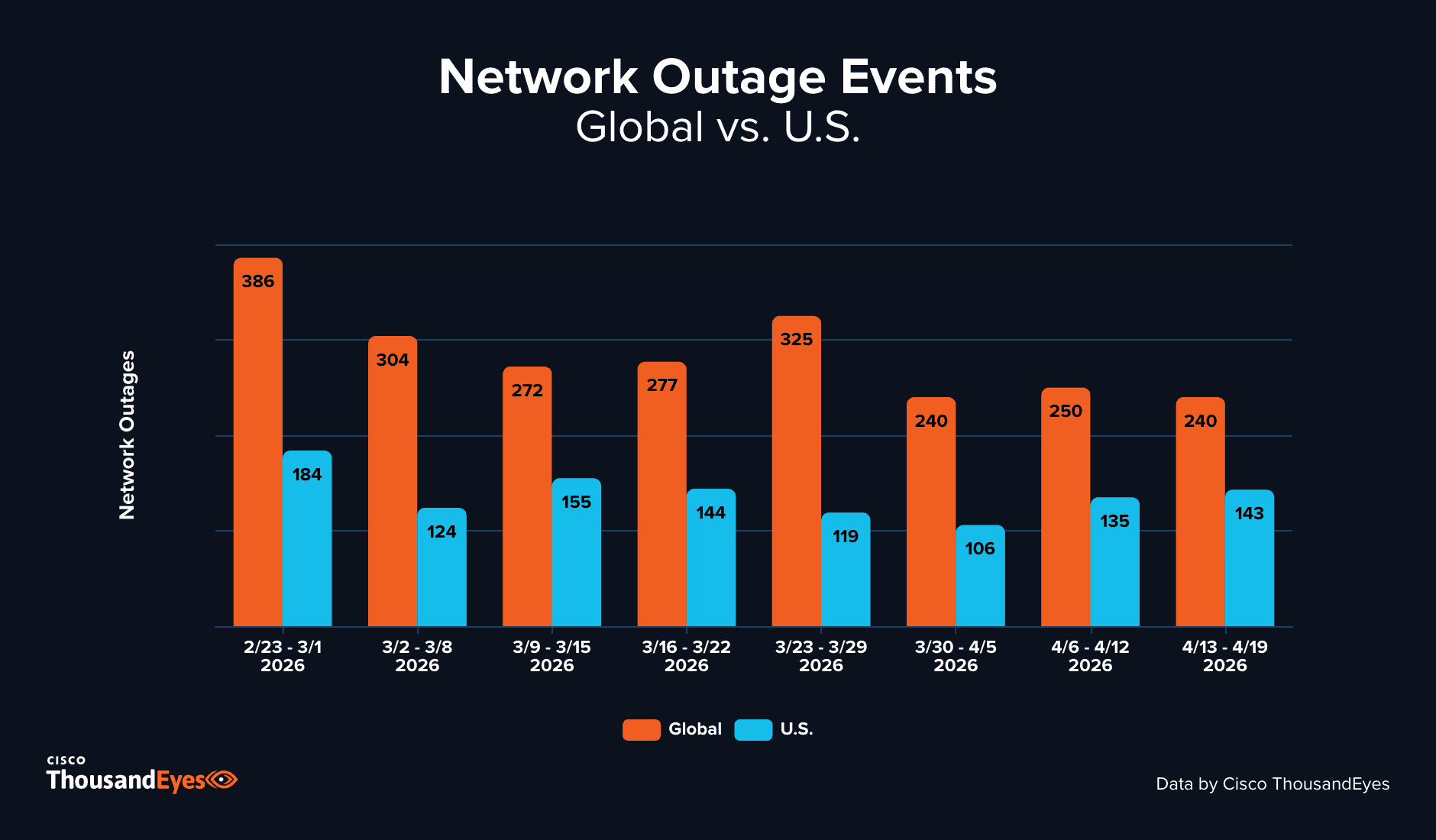

The week of March 23–29 saw global outages rise 17% to 325, reversing the relative stability observed across the two prior weeks.

-

During the week of March 30–April 5, global outages declined sharply, falling 26% to 240—broadly consistent with the levels seen in the earlier part of the observed period and suggesting the mid-to-late March elevation was transient rather than the beginning of a sustained trend.

United States Outages

- U.S. outages followed a different trajectory during this period. The week of March 23–29 saw U.S. outages decline 17% to 119, even as global outages were rising.
- During the week of March 30–April 5, U.S. outages fell a further 11% to 106.
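The weekly percentages can be reproduced from the reported counts. The sketch also backs out the approximate totals for the week before March 23–29 from the reported 17% changes. Because the published percentages are rounded, those implied totals are estimates and not reported figures.

```python
def pct_change(old, new):
    """Percentage change from old to new."""
    return (new - old) / old * 100


def implied_previous(new, pct):
    """Estimate the prior value from a new value and its reported % change."""
    return new / (1 + pct / 100)


print(f"Global, week of Mar 30-Apr 5: {pct_change(325, 240):+.1f}%")  # -26.2%
print(f"U.S., week of Mar 30-Apr 5:   {pct_change(119, 106):+.1f}%")  # -10.9%
print(f"Implied global, week before Mar 23-29: ~{implied_previous(325, 17):.0f}")  # ~278
print(f"Implied U.S., week before Mar 23-29:   ~{implied_previous(119, -17):.0f}")  # ~143
```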

Month-over-Month Trends

- Global network outages increased 13% from February to March, rising from 1,142 incidents to 1,290, the most pronounced month-over-month increase seen since the start of 2026.
- The United States, by contrast, saw outages remain essentially flat month-over-month, declining marginally from 600 in February to 598 in March, a decrease of less than 1%.
- The global rise from February to March is consistent with a pattern previously seen in 2025, 2024, and 2022, when March similarly saw an increase in global outage activity relative to February. The one exception in the dataset was 2023, when this pattern reversed. On the U.S. side, the marginal decline from February to March aligns with what was observed in 2024, 2023, and 2022, though it diverges from 2025, when U.S. outages rose over the same period.
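The monthly figures can be checked the same way. The U.S. share lines rest on an assumption that U.S. outages are counted within the global total. Under that assumption, the U.S. share of observed outages fell noticeably from February to March, because the global count rose while the U.S. count held flat.

```python
def pct_change(old, new):
    """Percentage change from old to new."""
    return (new - old) / old * 100


# Monthly totals as reported.
global_feb, global_mar = 1142, 1290
us_feb, us_mar = 600, 598

print(f"Global MoM: {pct_change(global_feb, global_mar):+.1f}%")  # +13.0%
print(f"U.S. MoM:   {pct_change(us_feb, us_mar):+.1f}%")          # -0.3%

# Assumption: U.S. outages are a subset of the global count.
print(f"U.S. share, Feb: {us_feb / global_feb:.1%}")  # 52.5%
print(f"U.S. share, Mar: {us_mar / global_mar:.1%}")  # 46.4%
```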

About Cisco ThousandEyes

Cisco ThousandEyes provides internet and cloud intelligence that delivers visibility into the digital delivery of applications and services over the internet and cloud.